Future AGI Docs: AI Testing, Guardrails, and Monitoring

The complete platform to test, guard, and monitor AI agents. Build self-improving agents that ship smarter with every version.

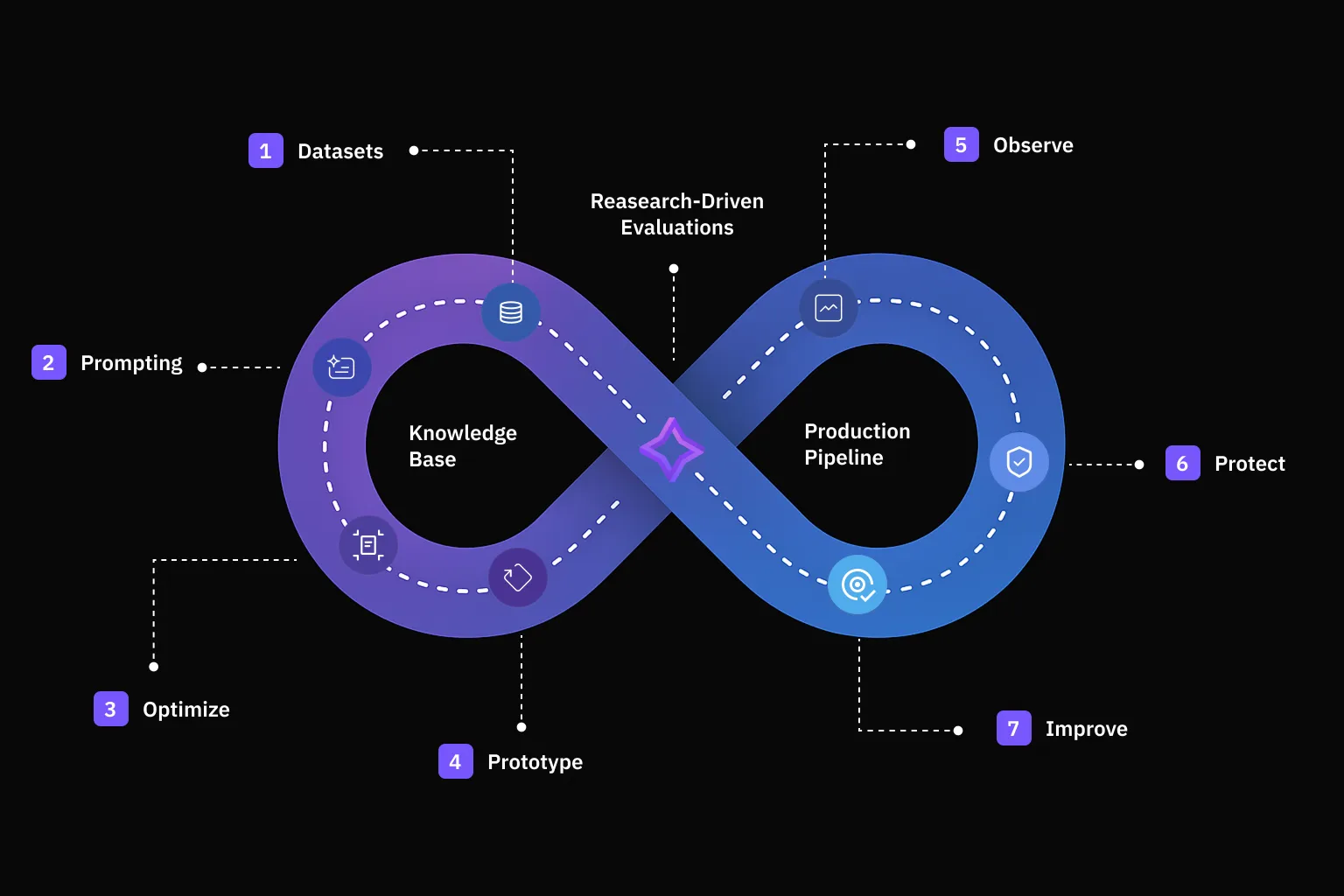

The platform covers the full agent lifecycle across three stages: simulate and iterate on your agent before deployment, evaluate outputs and catch issues with 70+ metrics, then optimize and observe performance in production. All stages feed into each other: production traces inform evaluations, evaluations surface issues, and issues drive the next iteration.

Future AGI integrates with OpenAI, Anthropic, LangChain, LlamaIndex, CrewAI, Vercel AI SDK, and 30+ more frameworks. You can start with a single line of code.

Simulate & Iterate

Go from idea to production-ready agent faster. Simulate thousands of scenarios, iterate with the Agent IDE, and run structured experiments.

- Simulation: Run thousands of multi-turn conversations with synthetic users, personas, and branching scenarios. Test voice agents and chat agents before they reach real users.

- Prototype: Build AI application variants in the Agent IDE. Compare models, prompts, and configurations side by side with built-in evaluation.

- Dataset: Create golden datasets manually, import from files, or generate synthetic data. Use them across evaluations, simulations, and experiments.

- Prompt: Version prompts, deploy to environments via labels, and track how changes affect quality across traces.

- Knowledge Base: Upload documents that ground evaluations, power RAG testing, and provide context for synthetic data generation.

| Section | Covers |
|---|---|
| Simulation | Scenarios, personas, synthetic users |
| Prototype | Agent IDE, experiments, comparison |
| Dataset | Golden datasets, import, synthetic generation |
| Prompt | Versioning, labels, environments |
| Knowledge Base | Document upload, RAG grounding |
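
To make the simulation idea concrete, here is a minimal sketch of a multi-turn run against scripted personas. It does not use the Future AGI SDK: `Persona`, `Transcript`, `toy_agent`, and `simulate` are hypothetical names chosen for illustration, and a real run would use LLM-driven synthetic users and branching scenarios rather than fixed turns.

```python
from dataclasses import dataclass, field


@dataclass
class Persona:
    """A scripted synthetic user (hypothetical stand-in for an LLM-driven persona)."""
    name: str
    turns: list[str]


@dataclass
class Transcript:
    """Ordered (role, text) messages for one simulated conversation."""
    persona: str
    messages: list[tuple[str, str]] = field(default_factory=list)


def toy_agent(history: list[tuple[str, str]], user_message: str) -> str:
    """Placeholder agent under test; swap in a call to your real agent."""
    if "refund" in user_message.lower():
        return "I can help with that refund. What is your order number?"
    return "Could you tell me more about the issue?"


def simulate(agent, personas: list[Persona]) -> list[Transcript]:
    """Run every persona through the agent, one turn at a time."""
    transcripts = []
    for persona in personas:
        transcript = Transcript(persona=persona.name)
        for user_message in persona.turns:
            # The agent sees the history so far plus the new user message.
            reply = agent(transcript.messages, user_message)
            transcript.messages.append(("user", user_message))
            transcript.messages.append(("agent", reply))
        transcripts.append(transcript)
    return transcripts


personas = [
    Persona("impatient customer", ["I want a refund NOW", "Order 1234"]),
    Persona("confused newcomer", ["Hi, how does this work?"]),
]
results = simulate(toy_agent, personas)
```

The resulting transcripts are the kind of artifact you would then score with evaluations, which is why simulation and evaluation are described as feeding into each other.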

Evaluate

Catch issues early. Run comprehensive evaluations across datasets, detect hallucinations, and protect your agents with real-time guardrails.

- Error Feed: Sentry-style error tracking for AI agents. Errors are automatically surfaced, grouped, and triaged. See exactly where and why your agent failed, which traces are affected, and how many users were impacted.

- Evaluation: 70+ built-in metrics covering quality, safety, hallucination, faithfulness, toxicity, PII detection, and more. Create custom evals for domain-specific checks. Run on datasets in development or on production traces continuously.

- Protect: Real-time guardrails that intercept requests and responses before they reach users. Block hallucinations, PII leaks, and policy violations in production.

| Section | Covers |
|---|---|
| Error Feed | Error tracking, triage, root cause |
| Evaluation | 70+ metrics, custom evals, CI/CD |
| Protect | Real-time guardrails, PII blocking |
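
The relationship between a custom eval and a guardrail can be sketched in a few lines: the eval scores a piece of text, and the guardrail uses that result to decide whether the response reaches the user. This is an illustrative sketch only, not the Future AGI API; `EvalResult`, `pii_eval`, and `guard` are hypothetical names, and the two regular expressions are deliberately simplistic.

```python
import re
from dataclasses import dataclass

# Deliberately simple patterns for illustration; real PII detection needs far more coverage.
EMAIL_RE = re.compile(r"[A-Za-z0-9._%+-]+@[A-Za-z0-9.-]+\.[A-Za-z]{2,}")
US_PHONE_RE = re.compile(r"\b\d{3}[-.\s]\d{3}[-.\s]\d{4}\b")


@dataclass
class EvalResult:
    name: str
    passed: bool
    reason: str


def pii_eval(text: str) -> EvalResult:
    """Custom eval: fail if the text contains an email address or US-style phone number."""
    if EMAIL_RE.search(text):
        return EvalResult("pii", False, "email address found")
    if US_PHONE_RE.search(text):
        return EvalResult("pii", False, "phone number found")
    return EvalResult("pii", True, "no PII pattern matched")


def guard(response: str, fallback: str = "Sorry, I can't share that.") -> str:
    """Guardrail: return the response only if every check passes, else a safe fallback."""
    checks = [pii_eval(response)]
    if all(check.passed for check in checks):
        return response
    return fallback


safe = guard("Your order ships tomorrow.")
blocked = guard("Contact the customer at jane.doe@example.com.")
```

The same `pii_eval` function could run offline over a dataset or inline inside `guard`, which mirrors the split between evaluating in development and protecting in production.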

Optimize & Observe

Use production data to continuously improve your agents. Track performance in real time, trace requests end to end, and get alerted before users complain.

- Optimization: Apply reinforcement learning from human feedback to automatically improve agent responses. The optimizer uses evaluation scores as reward signals to refine prompts without manual tuning.

- Observability: End-to-end tracing for every LLM call, retrieval, and tool invocation. Track costs by model, monitor latency percentiles, replay user sessions, and set up alerts for anomalies. Based on OpenTelemetry.

- Agent Command Center: Unified API gateway across 25+ LLM providers. Route requests with fallbacks, cache responses, enforce rate limits and budgets, and run shadow experiments. Drop-in replacement for the OpenAI SDK.

- Falcon AI: AI copilot with 300+ tools built into the dashboard. Analyze evaluation results, debug traces, create datasets, and chain multi-step workflows through natural language.

- Annotations: Human-in-the-loop labeling with annotation queues, custom scoring labels, and inter-annotator agreement tracking. Feed human judgments back into evaluations and optimization.
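
As a rough illustration of what "costs by model" and "latency percentiles" mean once traces are collected, the sketch below aggregates a handful of span records using only the Python standard library. `Span`, `cost_by_model`, and `latency_percentile` are hypothetical names, the data is made up for the example, and the 450 ms alert threshold is an arbitrary assumption; the platform computes these from real traces.

```python
from collections import defaultdict
from dataclasses import dataclass
from statistics import quantiles


@dataclass
class Span:
    """Hypothetical, simplified record of one traced LLM call."""
    model: str
    latency_ms: float
    cost_usd: float


def cost_by_model(spans):
    """Sum the cost of all spans, grouped by model name."""
    totals = defaultdict(float)
    for span in spans:
        totals[span.model] += span.cost_usd
    return dict(totals)


def latency_percentile(spans, pct):
    """Return the pct-th latency percentile (1 to 99); needs at least two spans."""
    cuts = quantiles([s.latency_ms for s in spans], n=100, method="inclusive")
    return cuts[pct - 1]


# Made-up sample data for the example.
spans = [
    Span("model-a", 100.0, 0.25),
    Span("model-a", 200.0, 0.25),
    Span("model-b", 300.0, 0.5),
    Span("model-b", 400.0, 0.5),
    Span("model-b", 500.0, 1.0),
]
costs = cost_by_model(spans)
p50 = latency_percentile(spans, 50)
p95 = latency_percentile(spans, 95)
alert = p95 > 450.0  # hypothetical alert threshold in milliseconds
```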

| Section | Covers |
|---|---|
| Optimization | RL from human feedback, auto-tuning |
| Observability | Tracing, costs, latency, alerts |
| Agent Command Center | Routing, caching, rate limits, 25+ providers |
| Falcon AI | AI copilot, 300+ tools, natural language |
| Annotations | Labeling queues, scoring, agreement |
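
Routing with fallbacks and response caching, as described for the Agent Command Center, follows a pattern that can be sketched independently of any gateway. The code below is an assumption-laden illustration, not the gateway's implementation: `Router`, `ProviderError`, and the two provider stubs are hypothetical, and the cache is a plain in-memory dictionary keyed by prompt.

```python
from typing import Callable

Provider = Callable[[str], str]


class ProviderError(Exception):
    """Raised by a provider stub when a call fails."""


class Router:
    """Try providers in priority order and cache successful responses by prompt."""

    def __init__(self, providers: list[tuple[str, Provider]]):
        self.providers = providers
        self.cache: dict[str, tuple[str, str]] = {}

    def complete(self, prompt: str) -> tuple[str, str]:
        """Return (provider_name, response), serving repeated prompts from the cache."""
        if prompt in self.cache:
            return self.cache[prompt]
        errors = []
        for name, call in self.providers:
            try:
                result = (name, call(prompt))
            except ProviderError as exc:
                # Record the failure and fall through to the next provider.
                errors.append(f"{name}: {exc}")
                continue
            self.cache[prompt] = result
            return result
        raise RuntimeError("all providers failed: " + "; ".join(errors))


def flaky_primary(prompt: str) -> str:
    """Stub provider that always fails, to exercise the fallback path."""
    raise ProviderError("rate limited")


def stable_secondary(prompt: str) -> str:
    """Stub provider that always succeeds."""
    return f"echo: {prompt}"


router = Router([("primary", flaky_primary), ("secondary", stable_secondary)])
first = router.complete("hello")
second = router.complete("hello")  # served from the cache, no provider call
```

A production gateway would add cache expiry, rate limits, and budgets on top of this loop; the sketch only shows the ordering and fallback logic.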

Where to start

You can set up tracing, evaluation, and simulation independently of one another. Pick the path that matches where you are.

| Starting point | You want to… | Start here |
|---|---|---|
| New to Future AGI | Get a quick overview and make your first call | Quickstart |
| Building an agent | Test with simulated users before deploying | Simulation |
| Already in production | See what’s happening with your LLM calls | Observability |
| Evaluating quality | Run evals on outputs and catch regressions | Evaluation |
| Managing multiple LLM providers | Unify routing, caching, and cost controls behind one API | Agent Command Center |
