Future AGI Observe: Monitor LLM Apps in Production

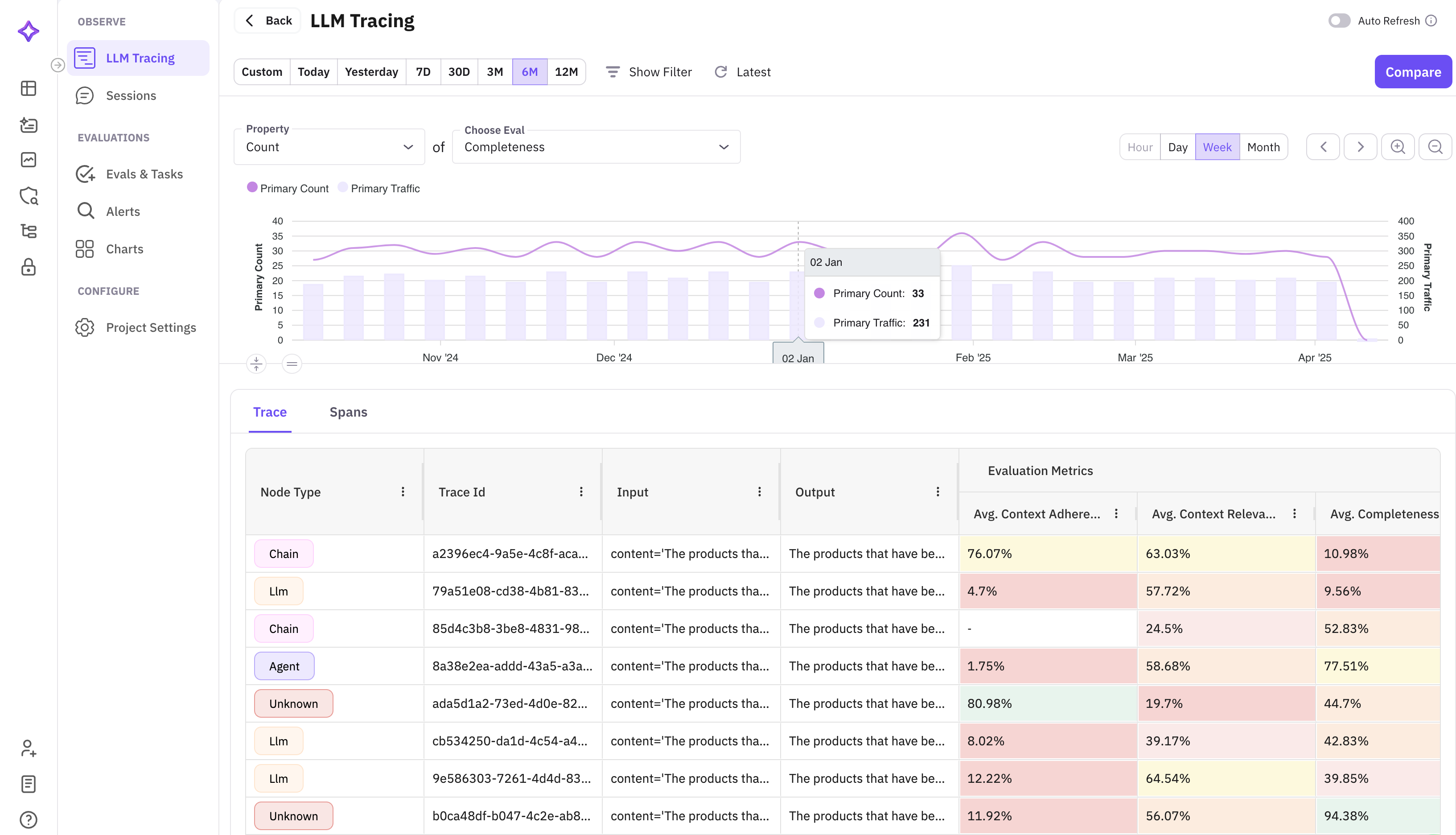

Monitor and evaluate LLM applications in production with real-time tracing, session analysis, cost tracking, and alerting.

About

Observability is how you monitor your AI application after it goes live. Once your app is in production, conditions change: user inputs vary, model behavior shifts, and issues surface that testing never caught. Observability gives you a continuous view of how your application is performing, so you can spot and address those issues as they appear.

It tracks every response your application generates, groups them by session and user, scores them for quality, and alerts you when something goes wrong. Instead of finding out about problems from users, you see them in the dashboard first.
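To make the grouping and scoring idea concrete, here is a minimal Python sketch. It is not the Future AGI SDK: the `Trace` record, its fields, and the `group_by` and `mean_quality` helpers are hypothetical names chosen for illustration, and the quality score is assumed to be a number between 0 and 1.

```python
from collections import defaultdict
from dataclasses import dataclass


@dataclass
class Trace:
    # Hypothetical record shape for illustration, not the Future AGI schema.
    session_id: str
    user_id: str
    response: str
    quality_score: float  # assumed to lie in [0, 1], higher is better


def group_by(traces, key):
    """Group traces by the attribute named `key` (for example "session_id")."""
    groups = defaultdict(list)
    for trace in traces:
        groups[getattr(trace, key)].append(trace)
    return dict(groups)


def mean_quality(traces):
    """Average quality score over a non-empty list of traces."""
    return sum(t.quality_score for t in traces) / len(traces)


traces = [
    Trace("s1", "alice", "Hi!", 0.9),
    Trace("s1", "alice", "Sure, here you go.", 0.7),
    Trace("s2", "bob", "I cannot help with that.", 0.2),
]

# The same traces can be viewed per session or per user.
by_session = group_by(traces, "session_id")
by_user = group_by(traces, "user_id")
session_quality = {sid: mean_quality(ts) for sid, ts in by_session.items()}
```

In this sketch the two views are just different keys over the same list of traces, which is one simple way to think about how session-level and user-level analysis can share the same underlying data.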

How Observability Connects to Other Features

- Prototype: After you promote a winning version in Prototype, its traces continue flowing into Observe so you can monitor production performance against the same quality criteria.

- Evaluation: Observability uses the same built-in eval templates to score production traces automatically. Any eval you configured in Prototype or Datasets runs the same way here.

- Alerts: Observability feeds into the alerting system so you are notified when quality, cost, or latency crosses a threshold in production.
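
The threshold idea behind alerting on quality, cost, or latency can be sketched in a few lines of Python. This is an illustrative model only, not how Future AGI configures or evaluates alerts: the `Threshold` class, the `breached` function, the metric names, and the limits are all assumptions made for the example.

```python
from dataclasses import dataclass


@dataclass
class Threshold:
    metric: str     # hypothetical metric name
    limit: float
    direction: str  # "above" fires when value > limit, "below" when value < limit


def breached(thresholds, metrics):
    """Return the names of metrics whose current value crosses its threshold."""
    fired = []
    for t in thresholds:
        value = metrics.get(t.metric)
        if value is None:
            continue  # metric not reported in this window
        if (t.direction == "above" and value > t.limit) or (
            t.direction == "below" and value < t.limit
        ):
            fired.append(t.metric)
    return fired


# Example limits are made up for illustration.
thresholds = [
    Threshold("quality_score", 0.6, "below"),
    Threshold("cost_usd", 5.0, "above"),
    Threshold("p95_latency_ms", 2000.0, "above"),
]
window_metrics = {"quality_score": 0.55, "cost_usd": 3.2, "p95_latency_ms": 2400.0}
alerts = breached(thresholds, window_metrics)
```

Note that quality alerts usually fire when a value drops below a limit, while cost and latency alerts fire when a value rises above one, which is why the sketch carries an explicit `direction` per threshold.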

Getting Started with Observability

Set Up Observability

Connect the SDK and start capturing traces in minutes.

Evals

Run evaluations on observed traces and sessions.

Sessions

Group and analyze multi-turn interactions.

Users

Track and analyze activity by user.

Alerts & Monitors

Configure alerts for real-time issue detection.

Voice Observability

Monitor voice agent interactions and call quality.
