Future AGI Error Feed: AI Agent Trace Error Detection

Automatically detect, cluster, score, and triage errors in your AI agent traces, without any configuration beyond standard tracing.

About

Error Feed is Future AGI’s error monitoring for AI agents. As soon as traces hit an Observe project, it picks them up, finds failure patterns, groups similar ones together, and writes up the analysis. No extra setup.

Think Sentry, but for the ways agents actually fail: hallucinated outputs, tool misuse, broken workflows, safety violations, and reasoning gaps that traditional error monitoring won’t catch.

What it does

Error Feed runs in the background on every Observe project. For each trace it analyzes, it:

- Detects errors in five categories, from factual grounding failures to tool crashes to safety violations. See the full error taxonomy.

- Groups related traces into named clusters, so 50 traces with the same underlying problem show up as one issue instead of 50 alerts.

- Scores the trace on four quality dimensions, each on a 0–5 scale. See Scoring.

- Generates analysis: what went wrong, root causes, supporting evidence from the trace, plus a quick fix and a long-term recommendation.

- Tracks trends: whether an issue is happening more often, less often, or staying steady.
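To make the grouping and trend ideas concrete, here is a toy sketch. It is not Error Feed's implementation, which this page does not describe. It assumes a hypothetical `Finding` record and groups traces by an exact (category, subcategory) match, a simplifying stand-in for real clustering. It labels the trend by comparing counts in two consecutive time windows against an arbitrary 20% tolerance. The category and subcategory strings are illustrative, not names from the actual error taxonomy.

```python
from collections import Counter
from dataclasses import dataclass


@dataclass(frozen=True)
class Finding:
    """Hypothetical record: one error detected on one trace."""
    trace_id: str
    category: str     # illustrative label, not the real taxonomy name
    subcategory: str  # illustrative label, not the real taxonomy name
    window: int       # 0 = previous time window, 1 = current time window


def cluster(findings: list[Finding]) -> dict[tuple[str, str], list[str]]:
    """Group trace IDs by a shared (category, subcategory) signature.

    Exact matching is a simplifying stand-in for real clustering.
    """
    issues: dict[tuple[str, str], list[str]] = {}
    for f in findings:
        issues.setdefault((f.category, f.subcategory), []).append(f.trace_id)
    return issues


def trend(previous: int, current: int, tolerance: float = 0.2) -> str:
    """Label an issue by the relative change in its count between two windows."""
    if previous == 0:
        return "increasing" if current > 0 else "steady"
    change = (current - previous) / previous
    if change > tolerance:
        return "increasing"
    if change < -tolerance:
        return "decreasing"
    return "steady"


# 50 traces with the same underlying problem, split across two windows...
findings = [Finding(f"t{i}", "tool_failure", "timeout", i % 2) for i in range(50)]
# ...plus one unrelated safety finding.
findings.append(Finding("t50", "safety", "pii_leak", 1))

issues = cluster(findings)
print(len(issues))  # 2 issues instead of 51 alerts

per_window = Counter(f.window for f in findings if f.subcategory == "timeout")
print(trend(per_window[0], per_window[1]))  # steady (25 -> 25)
```

The tolerance here is a knob chosen for illustration, not a documented Error Feed setting; a real system would also need to group failures that are worded differently but share a root cause, which an exact-match key cannot do.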

Note

No configuration needed. Error Feed turns on automatically for any Observe project the moment traces start arriving.

Who it’s for

Error Feed is useful whether you’re debugging an agent that just started misbehaving, running a quality review, or looking for systemic problems across thousands of production traces.

You don’t need to know how transformers work to use it. The UI explains what went wrong in plain language. If you do want to dig into trace-level evidence, every finding links straight to the spans involved.

Supported integrations

Error Feed works with any integration that sends traces to a Future AGI Observe project.

LLM providers: OpenAI, OpenAI Agents SDK, Vertex AI (Gemini), AWS Bedrock, Mistral AI, Anthropic, Groq, Together AI, Google ADK, Google GenAI, Portkey

Orchestration frameworks: LlamaIndex, LlamaIndex Workflows, LangChain, LangGraph, LiteLLM, CrewAI, Haystack, Autogen, PromptFlow, Vercel, Pipecat

Other: DSPy, Guardrails AI, Hugging Face smolagents, Ollama, Instructor, MCP

Navigate the docs

How it works

The mental model: how traces become issues, clusters, and scored findings.

Error taxonomy

The five error categories and every subcategory Error Feed can detect.

Scoring

The four quality metrics, what they measure, and how to read scores.

Severity and status

Severity tiers and the triage status workflow, from new issue to resolved.

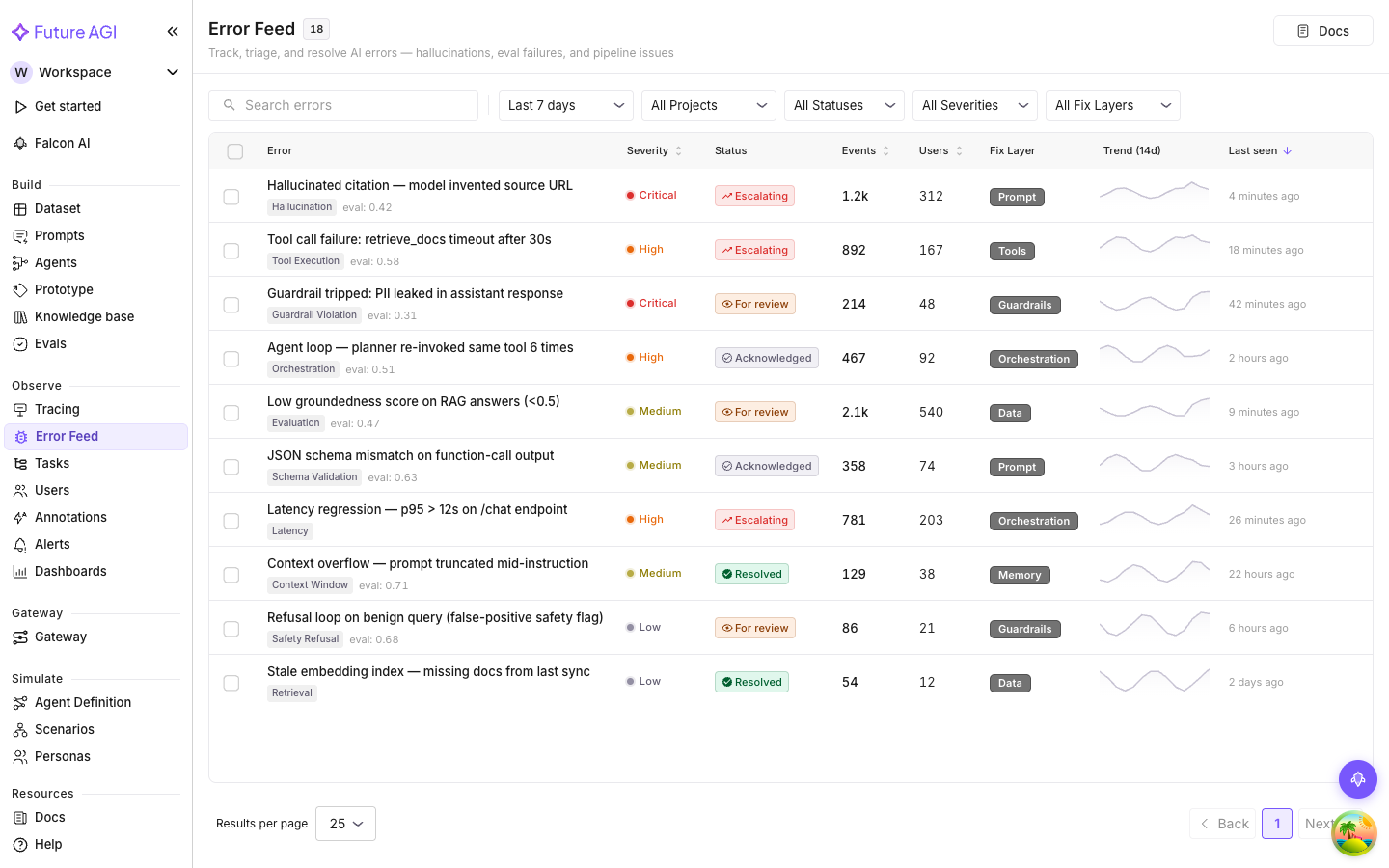

The Feed

The issue list page: filters, stats bar, columns, and sparklines.

Issue Overview

The Overview tab: description, root cause, evidence, and recommendations.

Deep Analysis

On-demand deeper analysis for issues that need more investigation.

Triage workflow

How to move issues through your process: resolve, acknowledge, and assign.
