Annotation Queues via Python SDK

Create queues, manage labels, add items, submit annotations, track progress, and export results programmatically with the Future AGI Python SDK.

Annotation queues let you organize traces, sessions, datasets, and simulation outputs for structured human review. Using the SDK, you can:

- Create and configure annotation queues programmatically

- Create and manage annotation labels (categorical, text, numeric, star, thumbs up/down)

- Add items from multiple sources (traces, spans, sessions, dataset rows, simulations, prototype runs)

- Submit or import annotations in bulk

- Track progress and inter-annotator agreement

- Export annotated data to datasets

Note

All methods that accept queue_id also accept queue_name as an alternative. Similarly, methods that accept label_id also accept label_name. The SDK resolves names to IDs automatically.

Installation

    pip install futureagi

Authentication

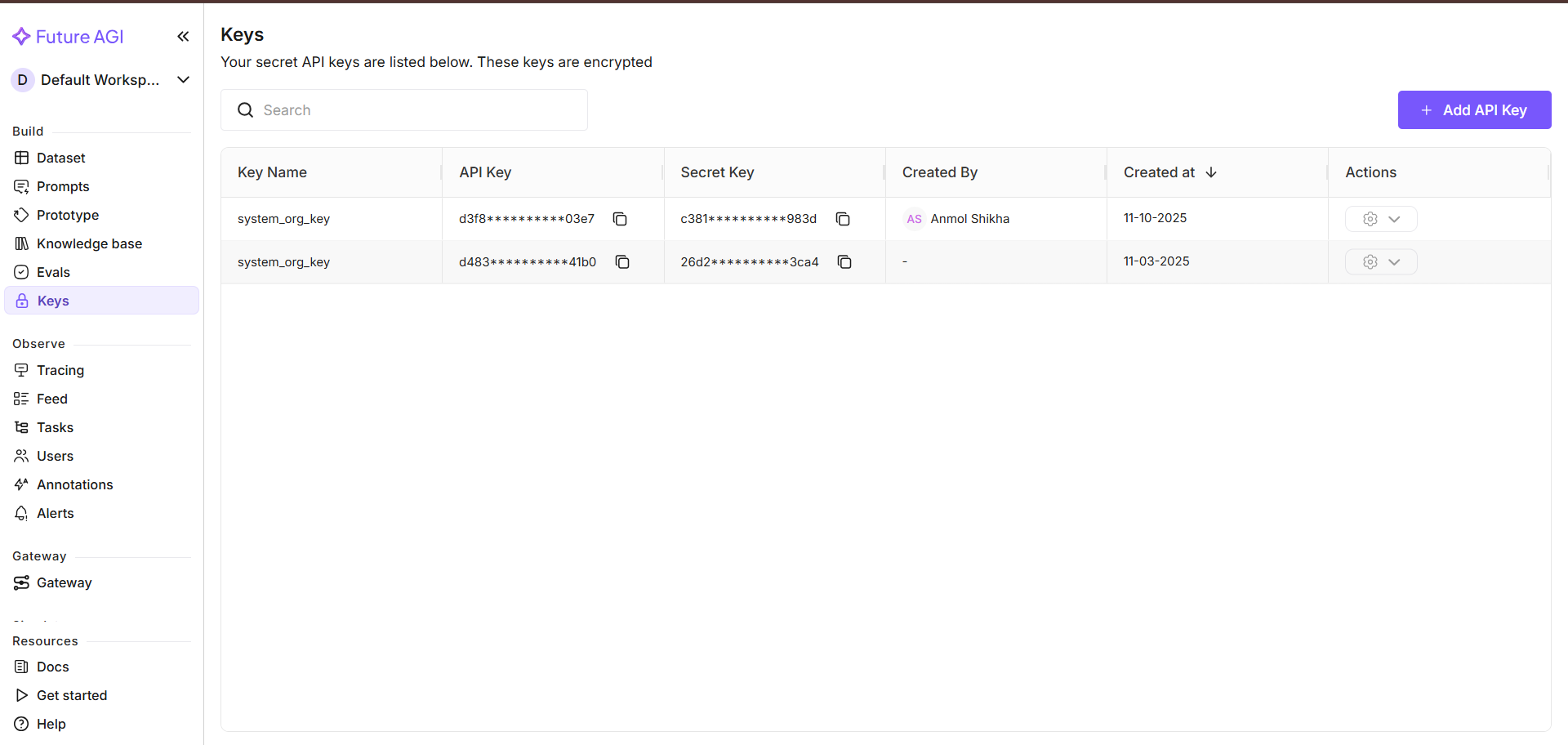

You can find your API key and secret key under Build > Keys in the sidebar.
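To keep keys out of source control, the two values can be read from the environment and passed to the client as keyword arguments. This is a minimal sketch: the environment variable names FI_API_KEY and FI_SECRET_KEY and the helper load_credentials are assumptions made for illustration, not something this page specifies.

```python
import os


def load_credentials(env=os.environ):
    """Build the client's keyword arguments from environment variables."""
    # FI_API_KEY and FI_SECRET_KEY are assumed names, chosen for this sketch
    required = {"fi_api_key": "FI_API_KEY", "fi_secret_key": "FI_SECRET_KEY"}
    missing = [var for var in required.values() if not env.get(var)]
    if missing:
        raise RuntimeError(f"Missing environment variables: {', '.join(missing)}")
    return {kwarg: env[var] for kwarg, var in required.items()}
```

The returned dict uses the same argument names as the client constructor in this section, so it could be unpacked as AnnotationQueue(**load_credentials()).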

    from fi.queues import AnnotationQueue

    client = AnnotationQueue(
        fi_api_key="YOUR_API_KEY",
        fi_secret_key="YOUR_SECRET_KEY",
    )

Creating Labels

Create annotation labels to define what annotators should evaluate. Each label has a type that determines the kind of input annotators provide:

    # Categorical label (multiple choice)
    sentiment_label = client.create_label(
        name="Sentiment",
        type="categorical",
        settings={
            "rule_prompt": "Classify the sentiment of the response",
            "multi_choice": False,
            "options": [
                {"label": "Positive"},
                {"label": "Negative"},
                {"label": "Neutral"},
            ],
            "auto_annotate": False,
            "strategy": None,
        },
    )

    # Numeric label (slider or buttons)
    quality_label = client.create_label(
        name="Quality Score",
        type="numeric",
        settings={
            "min": 1,
            "max": 10,
            "step_size": 1,
            "display_type": "slider",
        },
    )

    # Thumbs up/down label
    thumbs_label = client.create_label(
        name="Helpful",
        type="thumbs_up_down",
        settings={},
    )

You can also list, retrieve, and delete existing labels by ID or name:

    # List all labels
    labels = client.list_labels()

    # List labels scoped to a project
    project_labels = client.list_labels(project_id="your_project_id")

    # Get a specific label by ID or name
    label = client.get_label(label_id="your_label_id")
    label = client.get_label(label_name="Sentiment")

    # Delete a label by ID or name
    client.delete_label(label_id="your_label_id")
    client.delete_label(label_name="Sentiment")

Creating an Annotation Queue

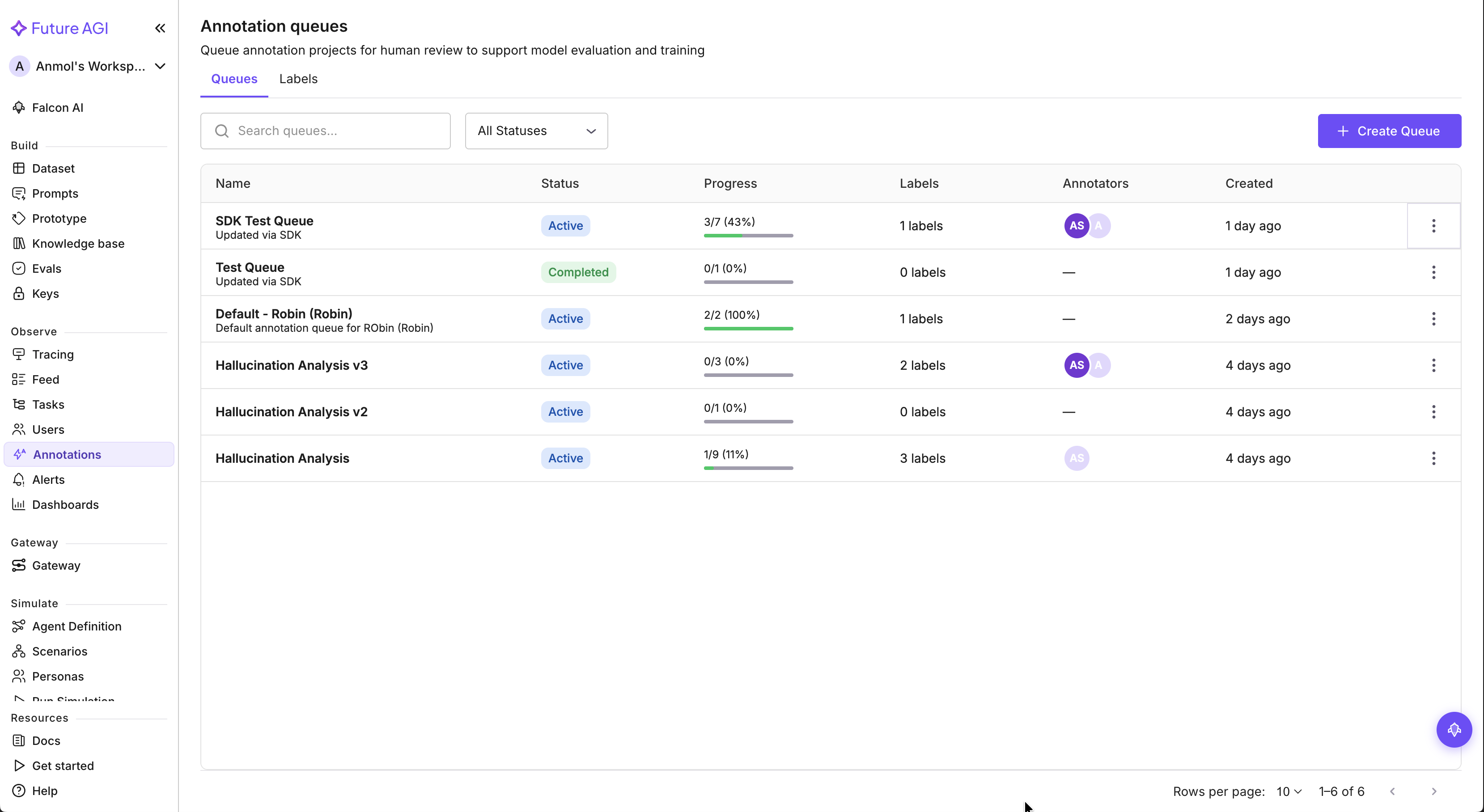

You can find all your annotation queues under Observe > Annotations in the sidebar.

Create a queue with instructions and configuration for your reviewers:

    queue = client.create(
        name="Sentiment Review",
        description="Review and label sentiment of customer interactions",
        instructions="Rate each trace as positive, negative, or neutral. Consider the overall tone of the conversation.",
        assignment_strategy="load_balanced",
        annotations_required=2,
        reservation_timeout_minutes=60,
        requires_review=True,
    )

    print(f"Created queue: {queue.id} (status: {queue.status})")

Assignment strategies:

- "manual": Explicitly assign items to annotators

- "round_robin": Distribute items evenly across annotators

- "load_balanced": Assign to the annotator with the fewest pending items

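The difference between the two automatic strategies is easiest to see when annotators start with uneven backlogs. The following is a self-contained simulation of the two descriptions above, not the platform's actual scheduler; the function names, annotator names, and tie-breaking rule are all assumptions made for illustration.

```python
from collections import Counter
from itertools import cycle


def round_robin(items, annotators):
    """Hand out items in a fixed rotation, ignoring current workload."""
    rotation = cycle(annotators)
    return {item: next(rotation) for item in items}


def load_balanced(items, pending):
    """Give each item to whoever currently has the fewest pending items."""
    pending = dict(pending)  # copy so the caller's counts are not mutated
    assignment = {}
    for item in items:
        # min() returns the first annotator with the smallest count,
        # so ties are broken by insertion order (an arbitrary choice here)
        annotator = min(pending, key=pending.get)
        assignment[item] = annotator
        pending[annotator] += 1
    return assignment


items = [f"item_{i}" for i in range(6)]
backlog = {"alice": 4, "bob": 0, "carol": 1}  # items already pending per annotator

rr_counts = Counter(round_robin(items, list(backlog)).values())
lb_counts = Counter(load_balanced(items, backlog).values())
print("round_robin:", dict(rr_counts))
print("load_balanced:", dict(lb_counts))
```

In this run the rotation gives every annotator two new items regardless of backlog, while the load-balanced version sends all six items to the two annotators with the lightest backlogs.
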
Attaching Labels to a Queue

Once labels are created, attach them to a queue using IDs or names:

    # Using IDs (from create_label return values)
    client.add_label(queue.id, label_id=sentiment_label.id)
    client.add_label(queue.id, label_id=quality_label.id)

    # Or using names
    client.add_label(queue_name="Sentiment Review", label_name="Sentiment")
    client.add_label(queue_name="Sentiment Review", label_name="Quality Score")

Activating the Queue

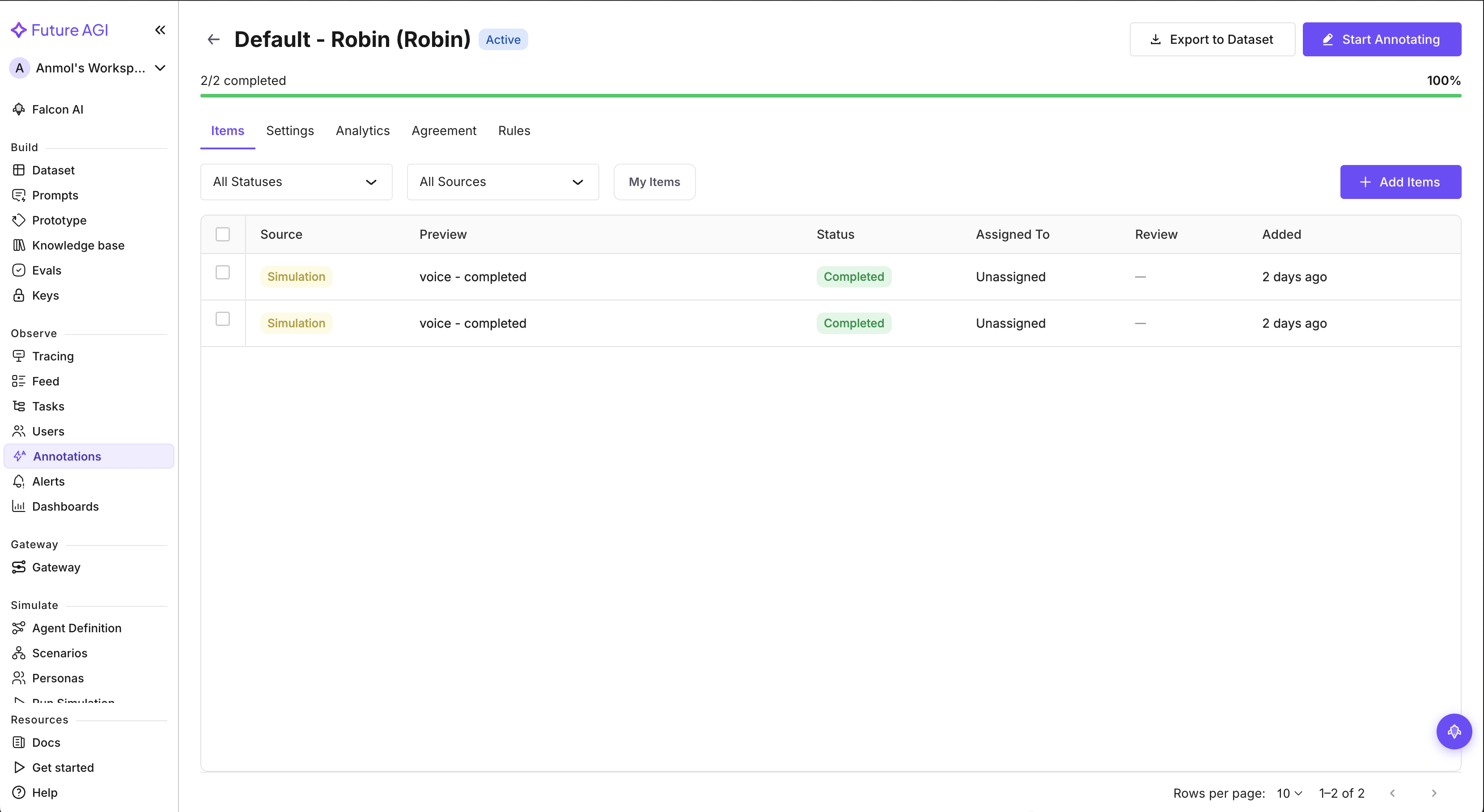

Click on a queue to view its settings, including the queue name, description, instructions, and attached labels.

Queues start in draft status. Activate when ready for annotation:

    # Using ID
    queue = client.activate(queue.id)

    # Or using name
    queue = client.activate(queue_name="Sentiment Review")

    print(f"Queue status: {queue.status}")  # "active"

Adding Items

Add items from various sources to the queue:

    result = client.add_items(queue.id, items=[
        {"source_type": "trace", "source_id": "trace_uuid_1"},
        {"source_type": "trace", "source_id": "trace_uuid_2"},
        {"source_type": "observation_span", "source_id": "span_uuid_1"},
        {"source_type": "dataset_row", "source_id": "row_uuid_1"},
        {"source_type": "trace_session", "source_id": "session_uuid_1"},
        {"source_type": "call_execution", "source_id": "simulation_uuid_1"},
        {"source_type": "prototype_run", "source_id": "prototype_run_uuid_1"},
    ])

    print(f"Added: {result.added}, Duplicates: {result.duplicates}")

Supported source types: trace, observation_span, trace_session, call_execution, prototype_run, dataset_row
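When item IDs come from another system, it can help to build the payload with a small helper that rejects unsupported source types and drops repeated IDs before anything is sent. This is a sketch only: build_items and chunked are hypothetical helpers, not SDK functions, and the batch size of 2 is arbitrary since this page does not state a limit.

```python
SUPPORTED_SOURCE_TYPES = frozenset({
    "trace",
    "observation_span",
    "trace_session",
    "call_execution",
    "prototype_run",
    "dataset_row",
})


def build_items(source_type, source_ids):
    """Turn raw IDs into item dicts, rejecting unknown source types."""
    if source_type not in SUPPORTED_SOURCE_TYPES:
        raise ValueError(f"Unsupported source_type: {source_type!r}")
    # dict.fromkeys drops repeated IDs while keeping first-seen order
    unique_ids = dict.fromkeys(source_ids)
    return [{"source_type": source_type, "source_id": sid} for sid in unique_ids]


def chunked(items, size):
    """Yield successive slices of at most `size` items."""
    for start in range(0, len(items), size):
        yield items[start:start + size]


items = build_items("trace", ["trace_uuid_1", "trace_uuid_2", "trace_uuid_1"])
batches = list(chunked(items, 2))
print(items)
```

Each batch has the same shape as the items list in the example above, so it could be passed as the items argument of add_items.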

Listing and Filtering Items

    # List all pending items
    pending_items = client.list_items(queue.id, status="pending")

    # List items assigned to a specific user
    assigned_items = client.list_items(queue.id, assigned_to="user_uuid")

    # Paginate through items
    page_2 = client.list_items(queue.id, page=2, page_size=20)

Assigning Items

Manually assign items to annotators:

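    # Fetch the items to work with; items is reused in the examples that follow
    items = client.list_items(queue.id, status="pending")

    # Assign items to a user
    client.assign_items(
        queue.id,
        item_ids=[items[0].id, items[1].id],
        user_id="annotator_user_id",
    )

    # Unassign items
    client.assign_items(
        queue.id,
        item_ids=[items[0].id],
        user_id=None,
    )

Submitting Annotations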

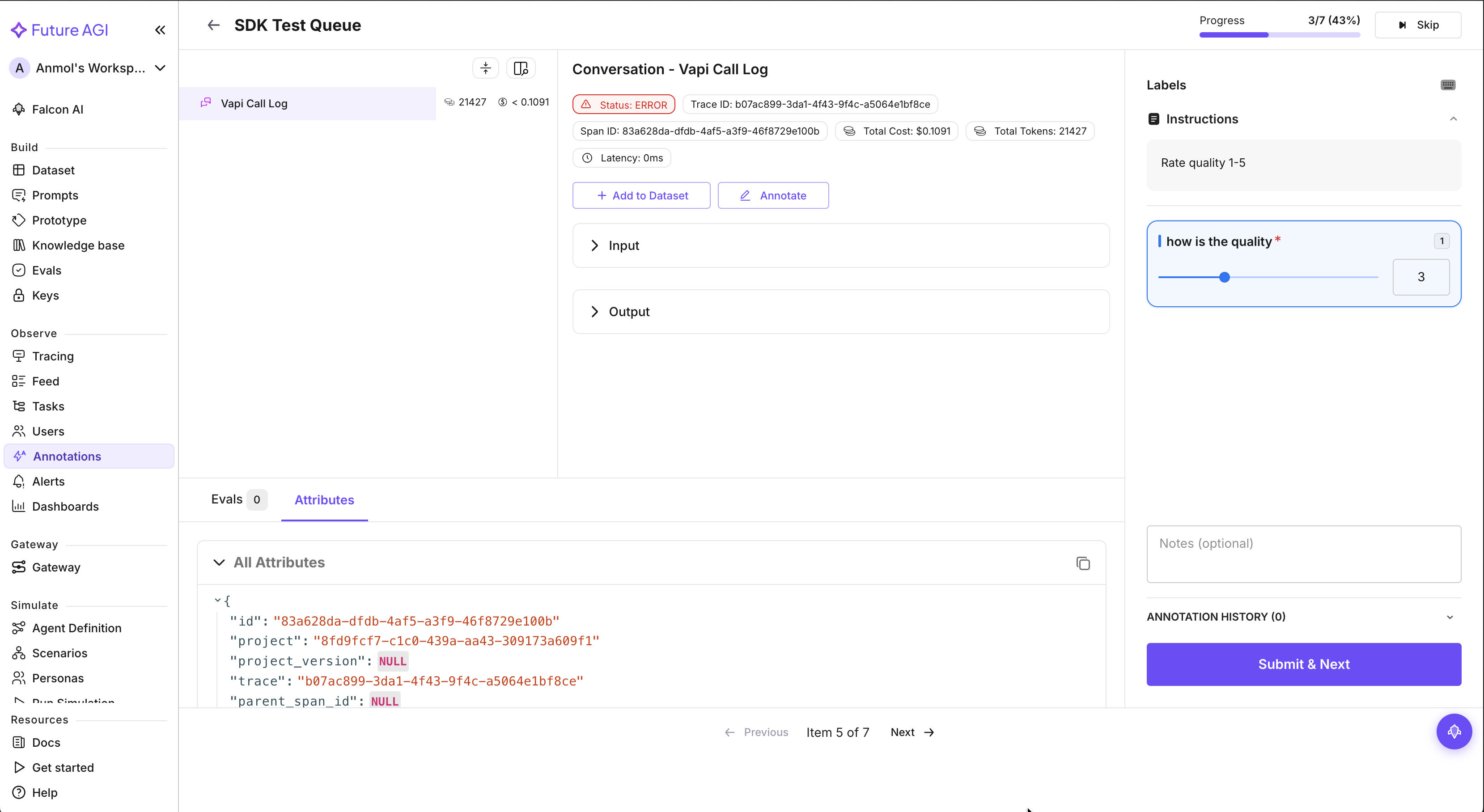

In the UI, annotators see each item’s content alongside the configured labels and can submit their annotations directly.

Submit annotations as the authenticated user:

    client.submit_annotations(
        queue.id,
        item_id=items[0].id,
        annotations=[
            {"label_id": "sentiment_label_id", "value": "positive"},
            {"label_id": "confidence_label_id", "value": 0.95},
        ],
        notes="Clear positive sentiment throughout the conversation",
    )

Importing Annotations Programmatically

Import annotations from an external source or automated pipeline:

    result = client.import_annotations(
        queue.id,
        item_id=items[0].id,
        annotations=[
            {"label_id": "sentiment_label_id", "value": "positive"},
            {"label_id": "confidence_label_id", "value": 0.92},
        ],
        annotator_id="external_annotator_user_id",  # optional
    )

    print(f"Imported: {result.imported}")

Completing and Skipping Items

    # Mark item as completed
    client.complete_item(queue.id, item_id=items[0].id)

    # Skip an item
    client.skip_item(queue.id, item_id=items[1].id)

Tracking Progress

progress = client.get_progress(queue.id)

print(f"Total: {progress.total}")

print(f"Completed: {progress.completed}")

print(f"Pending: {progress.pending}")

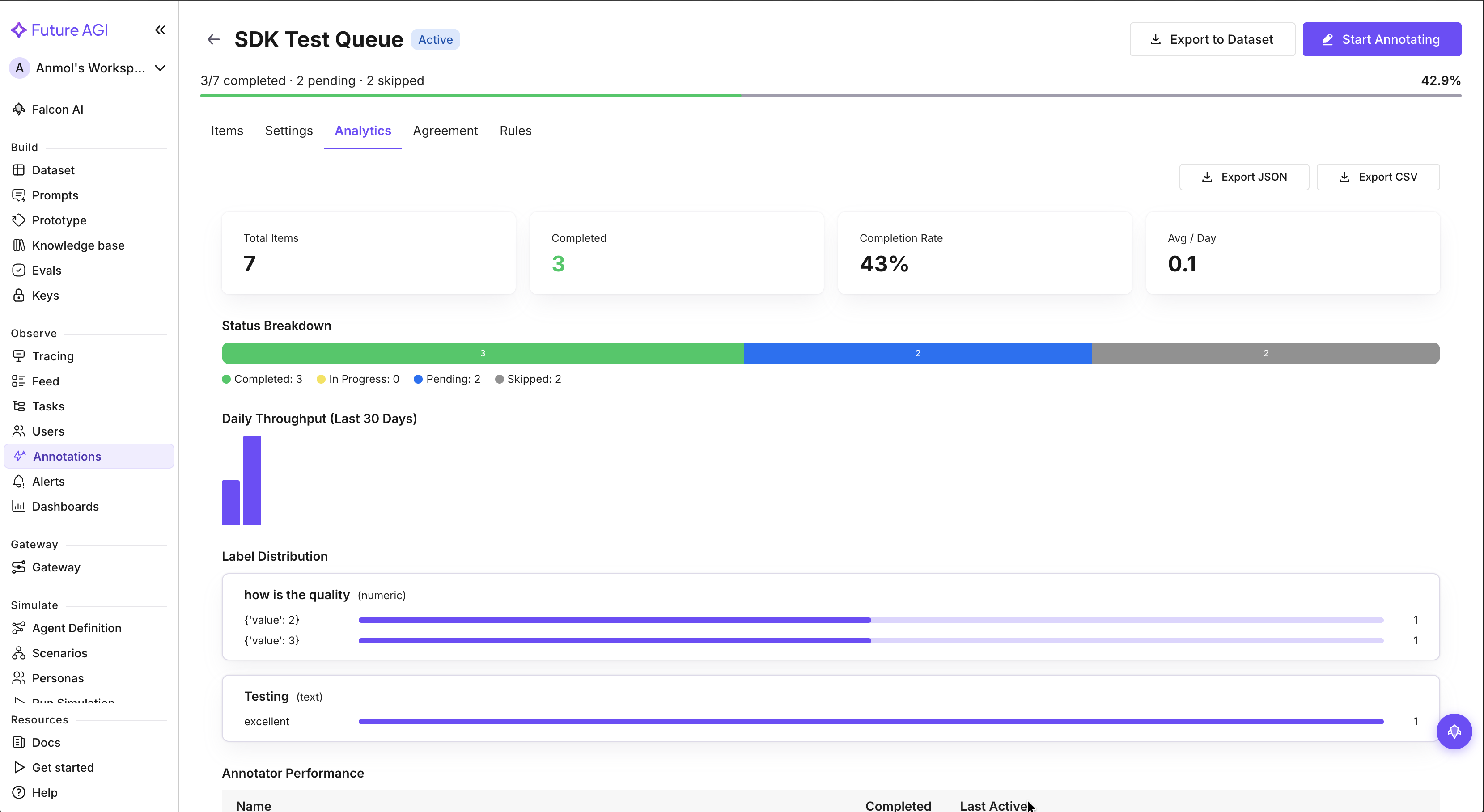

print(f"Progress: {progress.progress_pct}%")Analytics and Agreement

The Analytics tab shows throughput, status breakdown, label distribution, and annotator performance.

    # Get throughput and annotator performance
    analytics = client.get_analytics(queue.id)

    print(f"Status breakdown: {analytics.status_breakdown}")
    print(f"Total completed: {analytics.throughput['total_completed']}")
    print(f"Avg per day: {analytics.throughput['avg_per_day']}")

    # Daily throughput (last 30 days)
    for day in analytics.throughput["daily"]:
        print(f" {day['date']}: {day['count']} completed")

    # Get inter-annotator agreement
    agreement = client.get_agreement(queue.id)

    print(f"Overall agreement: {agreement.overall_agreement}")

Exporting Results

Export as JSON or CSV

    # Export completed annotations as JSON
    data = client.export(queue.id, export_format="json", status="completed")

    # Export as CSV
    csv_data = client.export(queue.id, export_format="csv", status="completed")

Export to a Dataset

    # Create a new dataset from annotations
    result = client.export_to_dataset(queue.id, dataset_name="Sentiment Labels")

    print(f"Created dataset '{result.dataset_name}' with {result.rows_created} rows")

    # Or append to an existing dataset
    result = client.export_to_dataset(queue.id, dataset_id="existing_dataset_uuid")

Using Scores Without a Queue

You can also annotate any source entity directly using scores, without creating a queue:

    # Create a single score (by label ID or name)
    # "Quality Score" is the numeric 1-10 label created earlier, so the value is a number
    score = client.create_score(
        source_type="trace",
        source_id="trace_uuid_1",
        label_name="Quality Score",
        value=8,
        score_source="api",
        notes="Automated quality check",
    )

    # Create multiple scores at once
    client.create_scores(
        source_type="trace",
        source_id="trace_uuid_1",
        scores=[
            {"label_id": "quality_label_id", "value": "good"},
            {"label_id": "relevance_label_id", "value": 4.5},
        ],
    )

    # Retrieve scores
    scores = client.get_scores(source_type="trace", source_id="trace_uuid_1")

    for s in scores:
        print(f"{s.label_name}: {s.value} (by {s.annotator_name})")

Completing a Queue

When all items have been reviewed:

    queue = client.complete_queue(queue.id)

    print(f"Queue status: {queue.status}")  # "completed"

Warning

Completing a queue does not automatically disable its automation rules. If you have active rules, they may continue adding items, which will re-activate the queue. Disable or delete automation rules manually before completing the queue.
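Before completing a queue, it can be useful to confirm that nothing is still pending. The check below is a minimal sketch: ready_to_complete is a hypothetical helper, and it assumes the progress object exposes the total and pending attributes printed in Tracking Progress. How skipped or in-progress items are counted is not covered here.

```python
from types import SimpleNamespace


def ready_to_complete(progress):
    """Return True when the queue has items and none of them are pending."""
    return progress.total > 0 and progress.pending == 0


# Stand-in objects shaped like a progress result; real values would come from get_progress()
in_flight = SimpleNamespace(total=3, completed=2, pending=1)
finished = SimpleNamespace(total=3, completed=3, pending=0)

print(ready_to_complete(in_flight))
print(ready_to_complete(finished))
```

A caller could run this check on the result of get_progress and only call complete_queue when it returns True.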

Complete Example

    from fi.queues import AnnotationQueue

    client = AnnotationQueue(
        fi_api_key="YOUR_API_KEY",
        fi_secret_key="YOUR_SECRET_KEY",
    )

    # 1. Create and configure the queue
    queue = client.create(
        name="Trace Quality Review",
        instructions="Rate the quality of each AI response on a scale of 1-5",
        assignment_strategy="round_robin",
        annotations_required=2,
    )

    # 2. Create a label, attach it, and activate
    label = client.create_label(
        name="Quality",
        type="numeric",
        settings={"min": 1, "max": 5, "step_size": 1, "display_type": "slider"},
    )

    client.add_label(queue.id, label_id=label.id)

    queue = client.activate(queue.id)

    # 3. Add items (using queue name works too)
    result = client.add_items(queue_name="Trace Quality Review", items=[
        {"source_type": "trace", "source_id": "trace_1"},
        {"source_type": "trace", "source_id": "trace_2"},
        {"source_type": "trace", "source_id": "trace_3"},
    ])

    print(f"Added {result.added} items")

    # 4. List and annotate items
    items = client.list_items(queue.id, status="pending")

    for item in items:
        client.submit_annotations(
            queue.id,
            item_id=item.id,
            annotations=[{"label_id": label.id, "value": 4}],
        )
        client.complete_item(queue.id, item_id=item.id)

    # 5. Check progress and export
    progress = client.get_progress(queue_name="Trace Quality Review")

    print(f"Completed: {progress.completed}/{progress.total}")

    export_result = client.export_to_dataset(queue.id, dataset_name="Quality Reviews")

    print(f"Exported to dataset: {export_result.dataset_name}")

    # 6. Complete the queue
    client.complete_queue(queue.id)