Create Custom Evaluation Templates in Future AGI

Define custom evaluation criteria and rules tailored to your use case, extending beyond the built-in templates available in Future AGI.

About

Every AI product has its own definition of a good response. Custom evals let you encode those rules and run them at scale: you write the criteria once, in plain language, and Future AGI scores every response against them automatically, returning a result and a reason for each one.

Once created, a custom eval works exactly like any built-in template: use it on a dataset, in a simulation, or call it from the SDK.

When to use

- Domain-specific validation: Assess content against industry or regulatory standards that aren’t in the default templates.

- Business rule compliance: Enforce your organization’s guidelines (tone, format, disclosures) in a repeatable eval.

- Complex or weighted scoring: Implement multi-criteria or custom scoring logic via your rule prompt.

- Custom output formats: Validate specific response structures or formats (e.g. JSON shape, required fields) with a tailored eval.

How to

You can create custom evals from the UI or from your own code by calling the REST API. After the template is saved, run it from the UI or from the evaluation SDK using the template name.

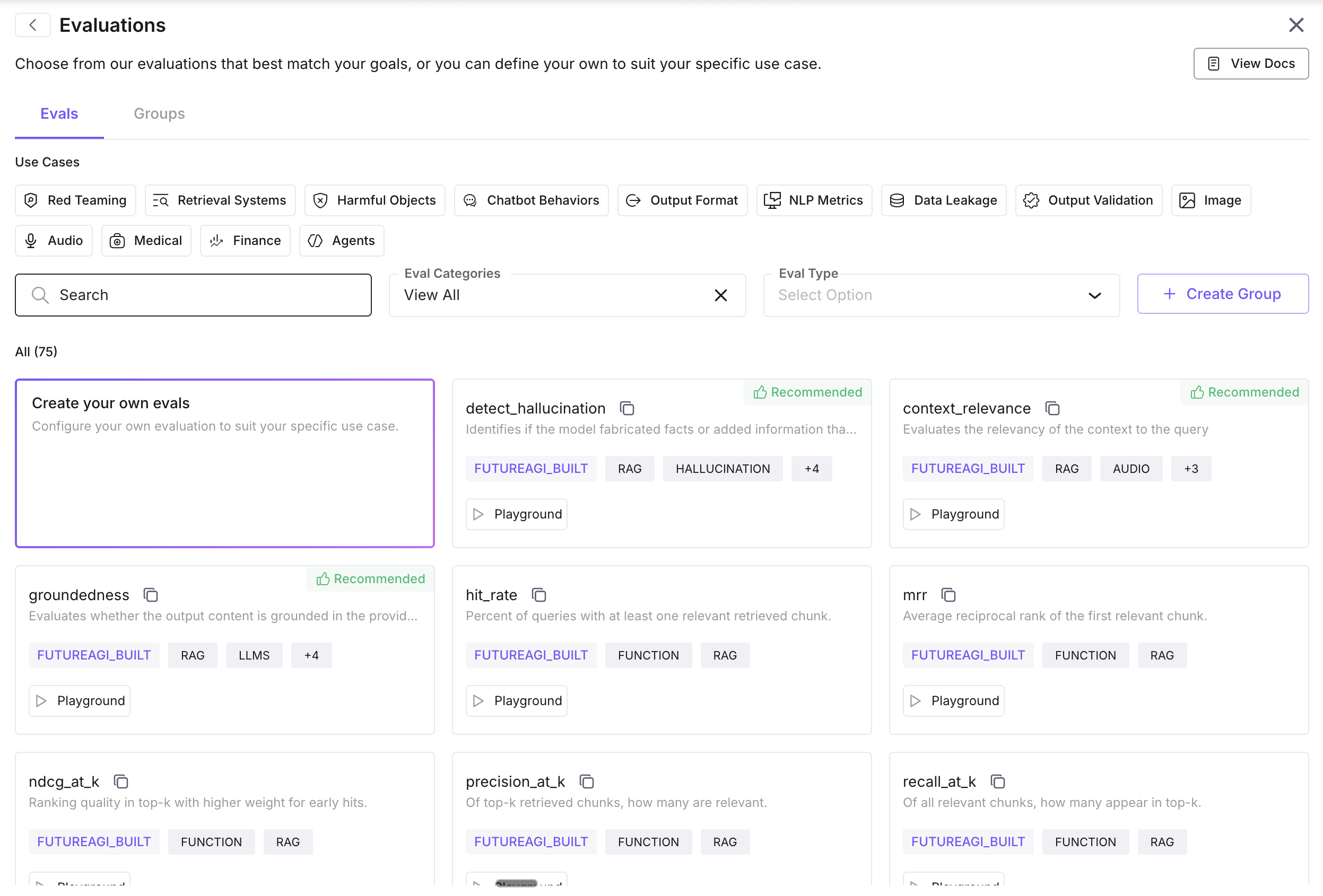

Open evaluation creation

Open your dataset, click Evaluate in the top-right, then Add Evaluation. Select Create your own eval to start the custom-eval flow.

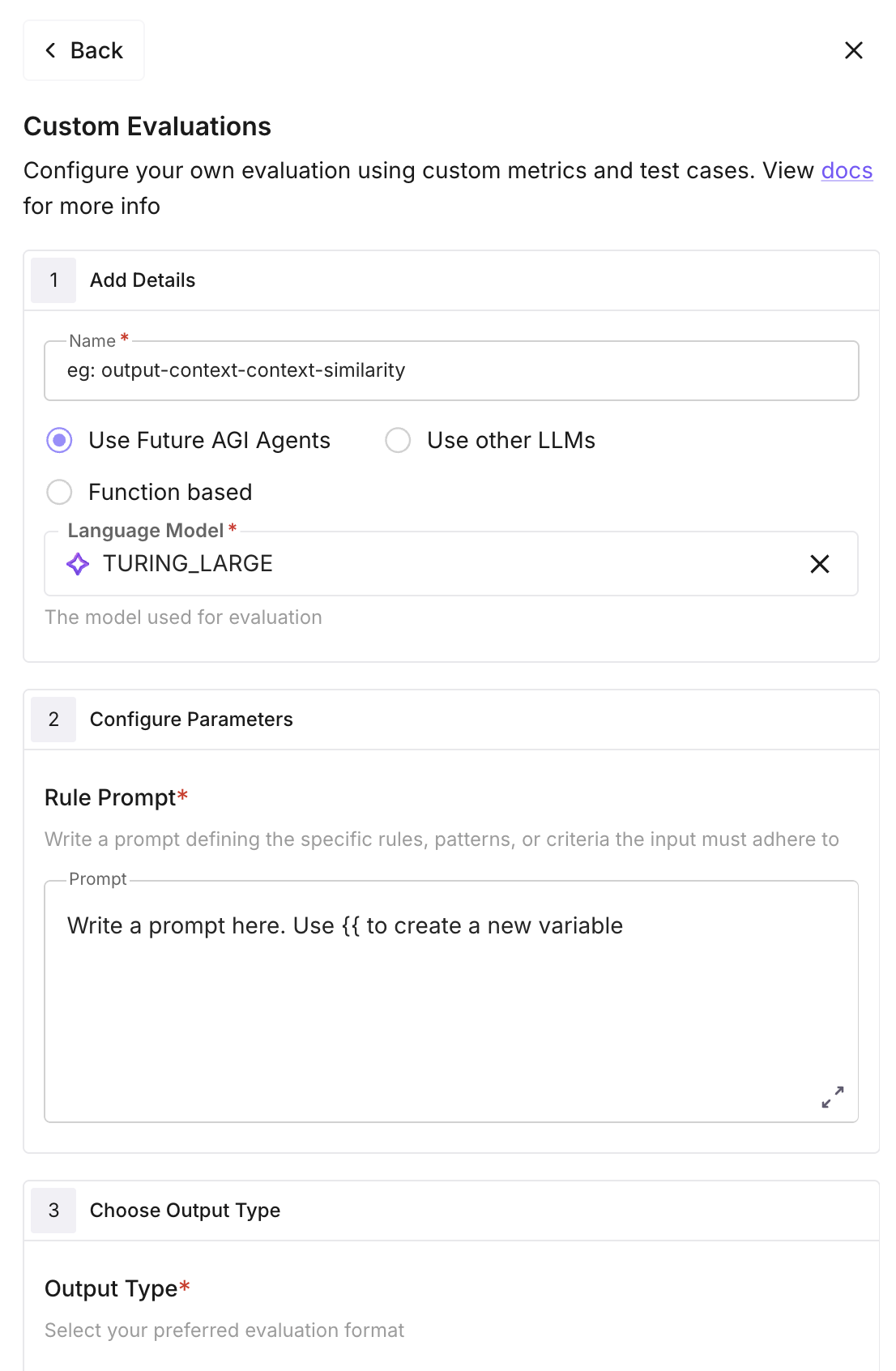

Configure basic settings

Name: unique name for the eval (lowercase letters, numbers, hyphens, and underscores only; cannot start or end with - or _). Used when you add the eval to a dataset or call it from the SDK.

Model: choose Use Future AGI Models (e.g. turing_large, turing_flash, turing_small, protect, protect_flash) or Use other LLMs (your own or external providers). For model details, see Future AGI models and Use custom models.

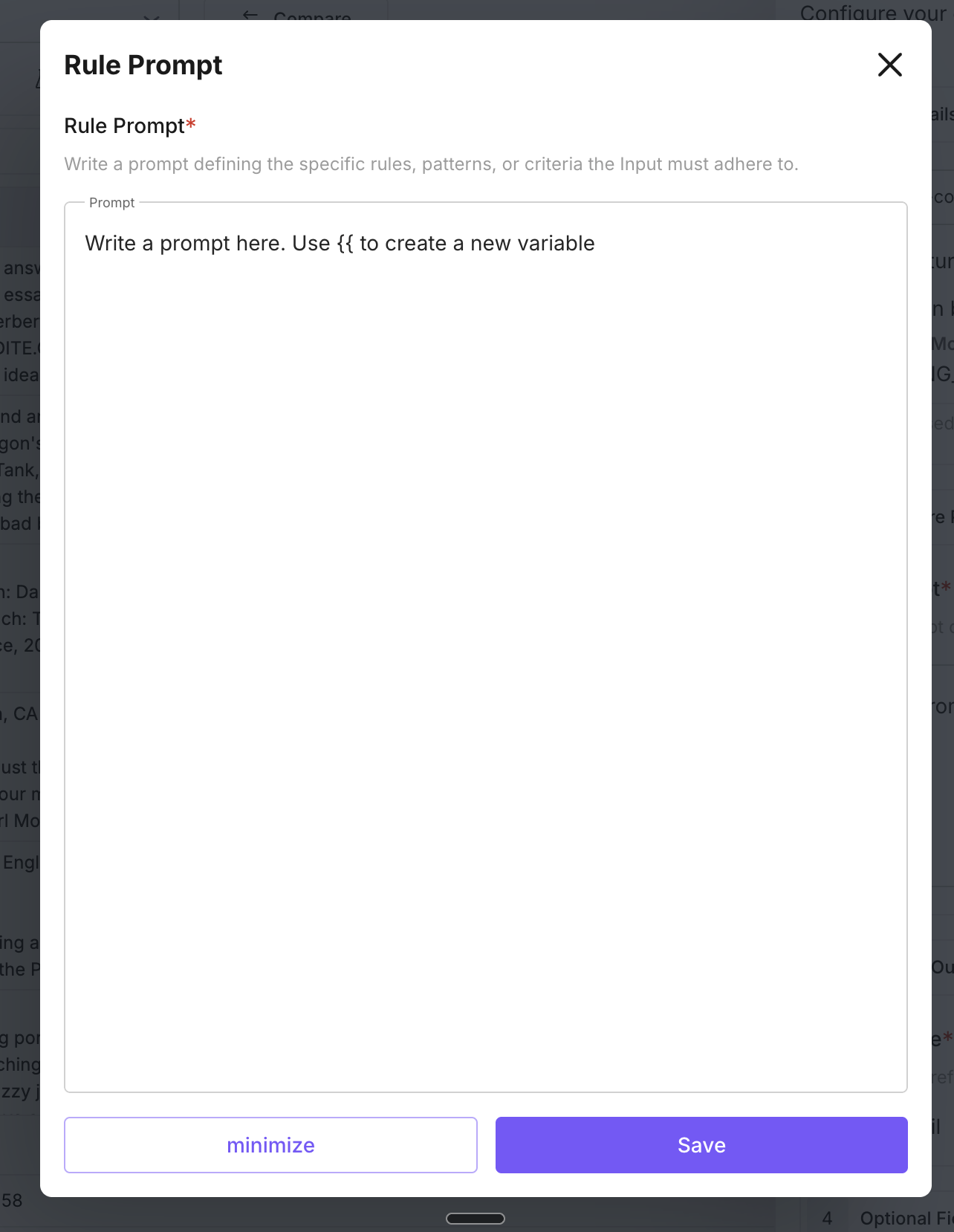
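The naming rule above can be checked locally before you submit the form or call the API. The sketch below is a minimal illustration: the regular expression and the helper `is_valid_eval_name` are assumptions derived from the rule as stated here, not the platform's own validator, which may apply extra constraints such as a length limit.

```python
import re

# Assumed pattern: lowercase letters, digits, hyphens, and underscores,
# with a letter or digit at both the start and the end.
_NAME_RE = re.compile(r"[a-z0-9](?:[a-z0-9_-]*[a-z0-9])?")


def is_valid_eval_name(name: str) -> bool:
    """Return True if the name satisfies the naming rule described above."""
    return _NAME_RE.fullmatch(name) is not None


for candidate in ["chatbot_politeness_and_relevance", "_draft", "Tone-Check"]:
    print(candidate, is_valid_eval_name(candidate))
```

Here `chatbot_politeness_and_relevance` is accepted, while `_draft` (leading underscore) and `Tone-Check` (uppercase letters) are rejected.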

Define evaluation rules

In Rule prompt (criteria), write the instructions the model will follow to evaluate each row. Use {{variable_name}} for placeholders; you’ll map these to dataset columns (or SDK input keys) when you add or run the eval. Be specific about what counts as pass/fail or how to score.

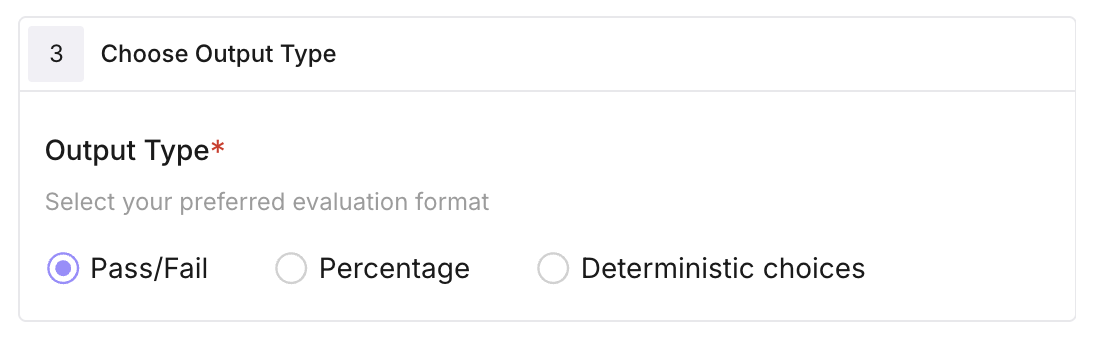
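To make the placeholder mapping concrete, the sketch below extracts the `{{variable_name}}` placeholders from a rule prompt and builds the input values for one dataset row. The helpers `find_variables` and `build_inputs`, and the column names `question` and `answer`, are hypothetical and only illustrate the idea; the platform performs its own mapping when you add or run the eval.

```python
import re
from typing import Dict, List, Mapping

# Matches placeholders written exactly as {{variable_name}}.
_PLACEHOLDER_RE = re.compile(r"\{\{([A-Za-z_][A-Za-z0-9_]*)\}\}")


def find_variables(rule_prompt: str) -> List[str]:
    """Return placeholder names in first-seen order, without duplicates."""
    seen: Dict[str, None] = {}
    for name in _PLACEHOLDER_RE.findall(rule_prompt):
        seen.setdefault(name, None)
    return list(seen)


def build_inputs(row: Mapping[str, str], mapping: Mapping[str, str]) -> Dict[str, str]:
    """Build eval inputs from a dataset row using a {variable: column} mapping."""
    return {variable: row[column] for variable, column in mapping.items()}


criteria = (
    "Evaluate: 1) Politeness. 2) Relevance to: {{user_query}}. "
    "Response: {{chatbot_response}}. Pass only if both."
)
variables = find_variables(criteria)

# Hypothetical dataset row and column mapping.
row = {
    "question": "What is the return policy?",
    "answer": "Our return policy allows returns within 30 days.",
}
inputs = build_inputs(row, {"user_query": "question", "chatbot_response": "answer"})

print(variables)
print(inputs)
```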

Configure output type

- Pass/Fail: binary result (e.g. 1.0 pass, 0.0 fail).

- Percentage (score): numeric score between 0 and 100.

- Deterministic choices: categorical result; provide a dict of allowed choices.

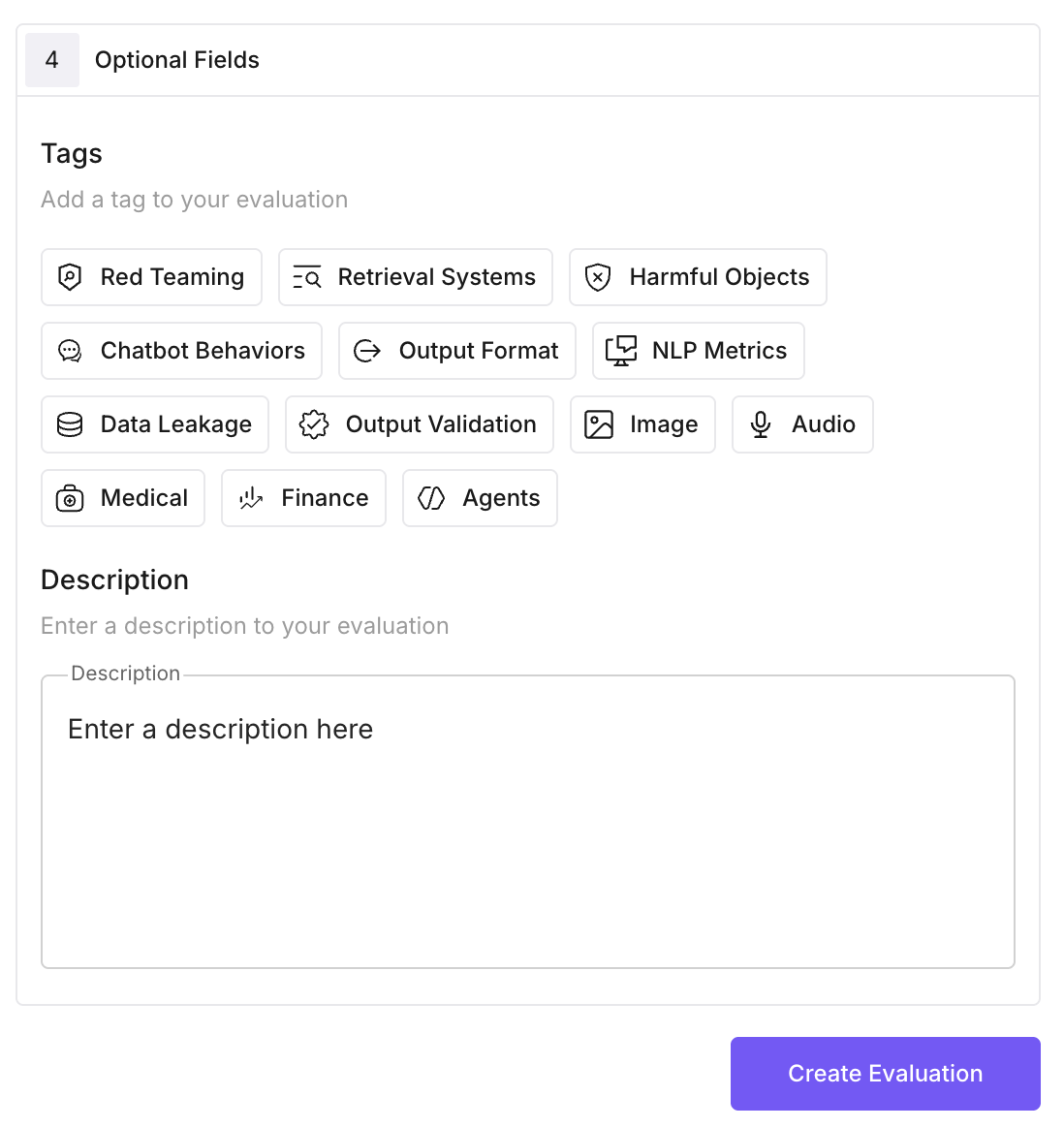
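When you consume results downstream, it can help to check that each value is plausible for its output type. The sketch below is a local sanity check only. The helper `check_output` is hypothetical, the type labels `"Percentage"` and `"Deterministic choices"` are taken from the UI wording rather than from confirmed API values, and the layout of the choices dict (label mapped to description) is an assumption.

```python
from typing import Mapping, Optional, Union


def check_output(
    output_type: str,
    value: Union[float, str],
    choices: Optional[Mapping[str, str]] = None,
) -> bool:
    """Return True if the value is plausible for the given output type."""
    if output_type == "Pass/Fail":
        # Binary result: 1.0 for pass, 0.0 for fail.
        return value in (0.0, 1.0)
    if output_type == "Percentage":
        # Numeric score between 0 and 100 inclusive.
        return (
            isinstance(value, (int, float))
            and not isinstance(value, bool)
            and 0 <= value <= 100
        )
    if output_type == "Deterministic choices":
        # Categorical result: must be one of the allowed choices.
        return choices is not None and value in choices
    raise ValueError(f"Unknown output type: {output_type!r}")


# Hypothetical choices dict for a tone eval.
tone_choices = {"polite": "Courteous wording", "rude": "Dismissive or hostile wording"}

print(check_output("Pass/Fail", 1.0))
print(check_output("Percentage", 120))
print(check_output("Deterministic choices", "polite", tone_choices))
```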

Add optional settings

- Tags: for filtering and organization.

- Description: shown in the evaluation list.

- Check Internet: allow the eval to fetch up-to-date information when needed.

- Required keys: list the input variable names the eval expects (e.g. input, output, user_query, chatbot_response).
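Because required keys name the inputs an eval expects, a quick local check can catch a missing input before a run is submitted. This is a minimal sketch; `missing_keys` is a hypothetical helper, not part of the Future AGI SDK.

```python
from typing import Iterable, List, Mapping


def missing_keys(required_keys: Iterable[str], inputs: Mapping[str, object]) -> List[str]:
    """Return the required keys that are absent from the inputs, in order."""
    return [key for key in required_keys if key not in inputs]


required = ["user_query", "chatbot_response"]
partial_inputs = {"user_query": "What is the return policy?"}

print(missing_keys(required, partial_inputs))  # ['chatbot_response']
```

An empty list means every required key is present.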

Save the eval

Click Create Evaluation. The new template appears in your evaluation list and can be added to any dataset or called via the SDK using the name you gave it.

Run the evaluation

In your dataset, click Evaluate → Add Evaluation, select the custom eval you created, map the columns to the rule-prompt variables, then click Add & Run. See Running your first eval for the full UI flow.

From code, creating a custom eval template requires a POST request to the Future AGI API. Once the template exists, run it with the Evaluator class from the ai-evaluation SDK.

Install the SDK

    pip install ai-evaluation

Create the custom eval template using the API

Send a POST request to /model-hub/create_custom_evals/, passing your FI_API_KEY and FI_SECRET_KEY in the X-Api-Key and X-Secret-Key headers.

    import requests

    # Create the custom eval template; replace the placeholder keys with your own.
    response = requests.post(
        "https://api.futureagi.com/model-hub/create_custom_evals/",
        headers={
            "X-Api-Key": "your-fi-api-key",
            "X-Secret-Key": "your-fi-secret-key",
        },
        json={
            "name": "chatbot_politeness_and_relevance",
            "description": "Evaluates if the response is polite and relevant.",
            # {{user_query}} and {{chatbot_response}} are filled in from the eval inputs.
            "criteria": "Evaluate: 1) Politeness. 2) Relevance to: {{user_query}}. Response: {{chatbot_response}}. Pass only if both.",
            "output_type": "Pass/Fail",
            "required_keys": ["user_query", "chatbot_response"],
            "config": {"model": "turing_small"},
            "check_internet": False,
            "tags": ["customer-service"],
        },
    )

    print(response.json())  # {"eval_template_id": "..."}

Run the custom eval template

Pass the template name you registered to Evaluator.evaluate():

    from fi.evals import Evaluator

    # Authenticate with the same keys used to create the template.
    evaluator = Evaluator(
        fi_api_key="your-fi-api-key",
        fi_secret_key="your-fi-secret-key",
    )

    # The input keys must match the template's required keys.
    result = evaluator.evaluate(
        eval_templates="chatbot_politeness_and_relevance",
        inputs={
            "user_query": "What is the return policy?",
            "chatbot_response": "Our return policy allows returns within 30 days.",
        },
    )

    # Each result carries the eval output and the reason behind it.
    print(result.eval_results[0].output)
    print(result.eval_results[0].reason)

Next Steps

- Evaluate via Platform & SDK: Run evals from the UI or SDK.

- Eval groups: Add your custom eval to a group and run it with others.

- Use custom models: Bring your own model for evaluations.

- Future AGI models: Built-in models available for evals.

- CI/CD pipeline: Run evals automatically in your pipeline.

- Evaluation overview: How evaluation fits into the platform.
