Evaluate via Platform and SDK in Future AGI

Run evaluations using the Future AGI platform UI or the Python SDK. Choose individual templates or batch runs for scalable model assessment.

About

Evaluation is how you measure whether your AI is actually doing what you want it to do.

You give an eval an input (a prompt, a response, a conversation) and it scores that input. The score tells you if the output was accurate, safe, on-topic, well-structured, or whatever quality you care about. Every evaluation returns a result (e.g. Passed / Failed, or a numeric score) and a reason explaining why.

You can run evaluations two ways:

- Platform UI: point-and-click on a dataset. No code required.

- Python SDK: call evaluator.evaluate() from your code, scripts, or CI pipeline.

Both support the same built-in eval templates (e.g. Toxicity, Groundedness, Tone) and any custom evals you’ve defined.

When to use

- Catch regressions before they ship: Run evals in CI so a bad prompt change or model update gets flagged before it reaches production.

- Score outputs at scale: Attach evals to a dataset and every row gets a score automatically, without reviewing each one manually.

- Enforce safety and compliance: Check every response for toxicity, PII, bias, or data privacy issues as part of your standard pipeline.

- Compare models or prompts: Evaluate the same inputs across different models or prompt variations to see which performs better on your criteria.

- Monitor quality over time: Run the same evals repeatedly to track whether your AI’s output quality is improving or degrading.
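The CI use case in the first bullet can be sketched as a small gate that turns eval outputs into a process exit code. This is a minimal sketch under stated assumptions, not part of the SDK: `failing_rows`, `gate`, and the `min_score` threshold are hypothetical names, and it assumes each output is either the string "Passed" / "Failed" or a numeric score, as described in the About section.

```python
import sys


def failing_rows(scored_rows, min_score=0.5):
    """Return the (row_id, output) pairs that should fail the build.

    Assumption: an output is either a "Passed"/"Failed" string or a number.
    min_score is a hypothetical threshold for numeric scores.
    Outputs of any other type are ignored.
    """
    failures = []
    for row_id, output in scored_rows:
        if isinstance(output, str):
            if output.strip().lower() == "failed":
                failures.append((row_id, output))
        elif isinstance(output, (int, float)):
            if output < min_score:
                failures.append((row_id, output))
    return failures


def gate(scored_rows, min_score=0.5):
    """Return 0 if every row is acceptable, 1 otherwise."""
    failures = failing_rows(scored_rows, min_score)
    for row_id, output in failures:
        # Report each failing row on stderr so it shows up in CI logs.
        print(f"eval failed for {row_id}: {output!r}", file=sys.stderr)
    return 1 if failures else 0
```

In a CI script, the collected outputs could be passed to `sys.exit(gate(rows))` so that any failing row stops the pipeline.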

How to

The steps below cover the platform UI first, then the Python SDK.

Select a dataset

You need a dataset to run evals from the UI. If you don’t have one, add a dataset first. See Dataset overview.

Open the evaluation panel

Open your dataset, then click Evaluate in the top-right. The evaluation configuration panel opens.

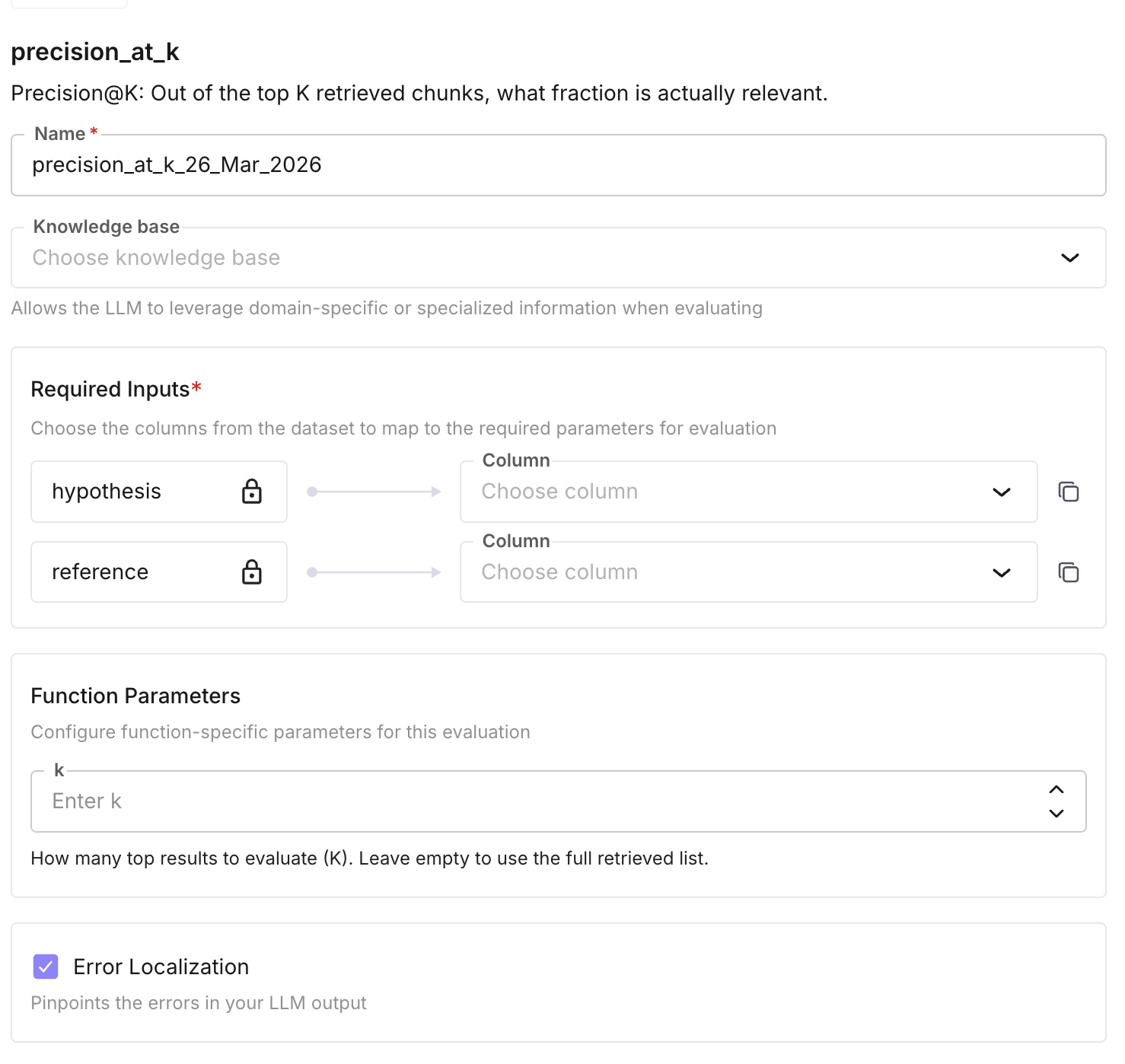

Add an eval

Click Add Evaluation. Choose a built-in template (e.g. Tone) or click Create your own eval. For a built-in template: click it, give it a name, and under config select the dataset column(s) to use as input (and output if required).

Configure and run

Optionally enable Error Localization to pinpoint which part of a row caused a failure. Select a model if the template requires one. Click Add & Run to score every row in the dataset.

Optional: Create your own eval

From the Add Evaluation flow, click Create your own eval to define a custom template (name, model, rule prompt, output type, and optional settings). After you save it, the new eval appears in the evaluation list and you can add it to your dataset as in the step above. For full details on creating and configuring custom evals, see Create custom evals.

Note

Some evals can run without an API key using the standalone evaluate() function, including local metrics like contains, faithfulness, and LLM-as-judge. See the SDK reference for details.

Install and initialise

Install the package ai-evaluation and create an Evaluator with your Future AGI API key and secret. Prefer setting FI_API_KEY and FI_SECRET_KEY in the environment instead of passing them in code. See Accessing API keys.
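One way to follow the environment-variable recommendation is to check that both variables are set before constructing the client. The sketch below is illustrative: `missing_credentials` and `build_evaluator` are hypothetical helper names, and the only SDK call used is the `Evaluator(fi_api_key=..., fi_secret_key=...)` constructor described in this step.

```python
import os

REQUIRED_VARS = ("FI_API_KEY", "FI_SECRET_KEY")


def missing_credentials(env=None):
    """Return the names of required variables that are unset or empty."""
    env = os.environ if env is None else env
    return [name for name in REQUIRED_VARS if not env.get(name)]


def build_evaluator(env=None):
    """Create an Evaluator from environment variables (hypothetical helper)."""
    env = os.environ if env is None else env
    missing = missing_credentials(env)
    if missing:
        raise RuntimeError("Missing environment variables: " + ", ".join(missing))
    # Imported lazily so the credential check runs even if the package
    # has not been installed yet.
    from fi.evals import Evaluator

    return Evaluator(
        fi_api_key=env["FI_API_KEY"],
        fi_secret_key=env["FI_SECRET_KEY"],
    )
```

Failing early with a clear message keeps secrets out of source files and makes a misconfigured CI job easier to diagnose.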

    pip install ai-evaluation

    from fi.evals import Evaluator

    evaluator = Evaluator(
        fi_api_key="your_api_key",
        fi_secret_key="your_secret_key",
    )

Run a sync eval

Call evaluate with the eval template name (e.g. tone), inputs (dict with the keys the template expects, e.g. "input"), and model_name. Many built-in (system) templates require a model.

    result = evaluator.evaluate(
        eval_templates="tone",
        inputs={
            "input": "Dear Sir, I hope this email finds you well. I look forward to any insights or advice you might have whenever you have a free moment"
        },
        model_name="turing_flash",
    )

    print(result.eval_results[0].output)
    print(result.eval_results[0].reason)

Optional: Run async eval

For long-running or large runs, set is_async=True. The call returns immediately with an eval_id; the evaluation runs in the background.
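For a large run, the same async call can be issued once per input and the returned ids collected for later retrieval. This is a minimal sketch: `submit_async` is a hypothetical helper, `evaluator` is any object exposing the `evaluate` method described in this guide, and it assumes the template takes a single "input" key.

```python
def submit_async(evaluator, template, texts, model_name):
    """Submit one async eval per text and return the eval_ids in order.

    Assumption: the template expects a single "input" key.
    """
    eval_ids = []
    for text in texts:
        result = evaluator.evaluate(
            eval_templates=template,
            inputs={"input": text},
            model_name=model_name,
            is_async=True,  # return immediately; the eval runs in the background
        )
        eval_ids.append(result.eval_results[0].eval_id)
    return eval_ids
```

Keeping the ids in submission order makes it straightforward to match each result back to its input afterwards.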

    result = evaluator.evaluate(
        eval_templates="tone",
        inputs={"input": "Your text here"},
        model_name="turing_flash",
        is_async=True,
    )

    eval_id = result.eval_results[0].eval_id

Retrieve async results

Use get_eval_result(eval_id) to fetch the result when the evaluation has finished. The same method works for both sync and async runs (e.g. to re-fetch a result).
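This guide does not say what get_eval_result returns while a run is still in progress, so a polling loop has to take that check as a parameter. The sketch below is a generic, hypothetical helper (`wait_for_result` is not part of the SDK): `fetch` would typically be `lambda: evaluator.get_eval_result(eval_id)`, and `is_done` is a predicate you supply based on how unfinished results look in your environment.

```python
import time


def wait_for_result(fetch, is_done, timeout=60.0, interval=2.0, sleep=time.sleep):
    """Call fetch() until is_done(result) is true or the timeout elapses.

    fetch:   zero-argument callable that returns the latest result
    is_done: predicate deciding whether that result is final (an assumption
             you must supply; the completion signal is not documented here)
    """
    deadline = time.monotonic() + timeout
    while True:
        result = fetch()
        if is_done(result):
            return result
        if time.monotonic() >= deadline:
            raise TimeoutError("evaluation did not finish within the timeout")
        sleep(interval)
```

Passing `sleep` as a parameter keeps the loop easy to test without real delays.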

    result = evaluator.get_eval_result(eval_id)

    print(result.eval_results[0].output)
    print(result.eval_results[0].reason)

Use a custom template

To use a template you created in the UI, pass its name as eval_templates and supply the inputs dict with the keys your template’s required_keys expect (e.g. "input", "output"). Use the same template name you see in the evaluation list.

    result = evaluator.evaluate(
        eval_templates="name-of-your-eval",
        inputs={
            "input": "your_input_text",
            "output": "your_output_text"
        },
        model_name="model_name"
    )

    print(result.eval_results[0].output)
    print(result.eval_results[0].reason)

Note

For system (built-in) eval templates, model_name is required and must be one of the models listed for that template. The backend validates required input keys from the template’s config.
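Since the backend rejects requests with missing input keys, a client-side check can catch the problem before any network call. This is an illustrative sketch only: `missing_input_keys` and `check_inputs` are hypothetical helpers, and the `required_keys` list is something you would copy from your template's configuration.

```python
def missing_input_keys(inputs, required_keys):
    """Return required keys that are absent from inputs or map to empty values."""
    return [key for key in required_keys if inputs.get(key) in (None, "")]


def check_inputs(inputs, required_keys):
    """Raise ValueError if any required key is missing; otherwise return inputs."""
    missing = missing_input_keys(inputs, required_keys)
    if missing:
        raise ValueError("Missing required input keys: " + ", ".join(missing))
    return inputs
```

For example, `check_inputs({"input": "text"}, ["input", "output"])` raises a `ValueError` that names `output` as the missing key.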

Tip

For more eval templates and Future AGI models, see Built-in evals and Future AGI models.

Next Steps

- Create custom evals: Define your own eval rules and criteria.

- Eval groups: Run multiple evals together as a group.

- Use custom models: Bring your own model for evaluations.

- Future AGI models: Built-in models available for evals.

- CI/CD pipeline: Run evals automatically in your pipeline.

- Evaluation overview: How evaluation fits into the platform.
