Use Future AGI Models for AI Evaluation and Scoring

Future AGI's proprietary judge models are trained on diverse datasets to evaluate and score AI outputs accurately.

About

When you run an evaluation, the model you choose determines how accurately and how fast each response gets scored. Future AGI provides a set of proprietary models built and optimized specifically for evaluation, not general-purpose chat or generation.

Each model is designed for a different need. Some prioritize accuracy across complex multimodal inputs. Others are built for speed, making them suitable for real-time guardrailing or high-volume pipelines. Choosing the right model lets you balance quality and performance for your specific workload.

All models are available in the platform UI and the SDK, and work with both built-in and custom eval templates.

Available models

-

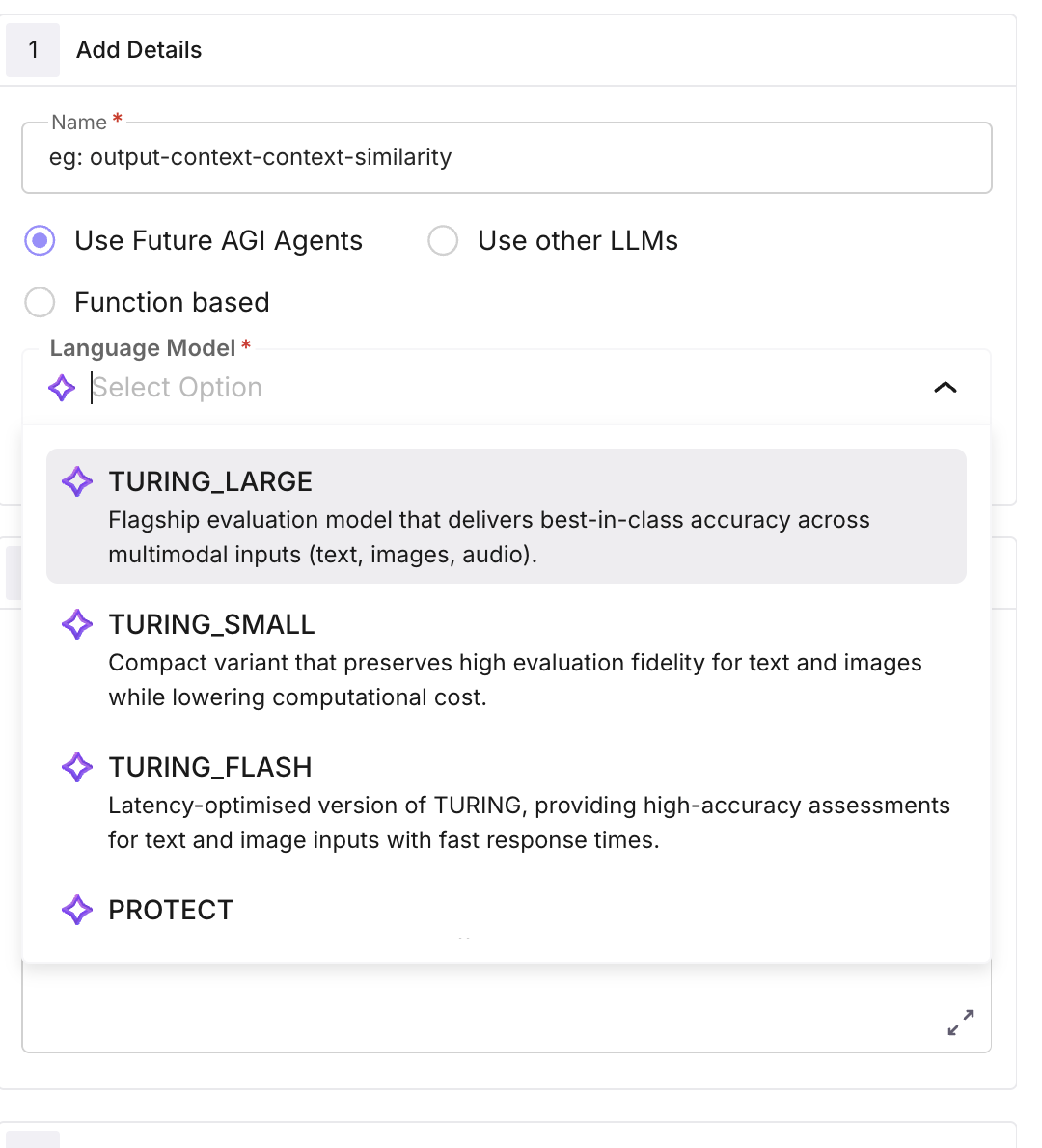

TURING_LARGE

turing_large: Flagship evaluation model that delivers best-in-class accuracy across multimodal inputs (text, images, audio). Recommended when maximal precision outweighs latency constraints.

TURING_SMALL

turing_small: Compact variant that preserves high evaluation fidelity while lowering computational cost. Supports text and image evaluations.

TURING_FLASH

turing_flash: Latency-optimised version of TURING, providing high-accuracy assessments for text and image inputs with fast response times.

PROTECT

protect: Real-time guardrailing model for safety, policy compliance, and content-risk detection. Offers very low latency on text and audio streams and permits user-defined rule sets.

PROTECT_FLASH

protect_flash: Ultra-fast binary guardrail for text content. Designed for first-pass filtering where millisecond-level turnaround is critical.
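The first-pass role described for protect_flash suggests a two-stage cascade: a fast binary check screens everything, and only flagged items go to a slower, more detailed check. The following is a minimal sketch of that pattern, not Future AGI's implementation. The function `two_stage_guardrail` and both callables are hypothetical names introduced here. In practice `fast_check` might wrap a protect_flash call and `deep_check` a protect call, but their response formats are not shown on this page, so the sketch takes plain Python callables instead.

```python
from typing import Callable, Iterable, List, Tuple


def two_stage_guardrail(
    texts: Iterable[str],
    fast_check: Callable[[str], bool],
    deep_check: Callable[[str], str],
) -> List[Tuple[str, str]]:
    """Screen every text with a cheap binary check, escalating only flagged ones.

    fast_check: hypothetical callable, returns True when the text is flagged.
    deep_check: hypothetical callable, returns a verdict string for a flagged text.
    """
    results = []
    for text in texts:
        if fast_check(text):
            # Flagged by the first pass: pay for the slower, detailed check.
            results.append((text, deep_check(text)))
        else:
            # Cleared by the first pass: no second call needed.
            results.append((text, "passed"))
    return results


# Stand-in checks for illustration only; real checks would call the models.
def demo_fast(text: str) -> bool:
    return "attack" in text


def demo_deep(text: str) -> str:
    return "blocked" if "attack now" in text else "passed"


verdicts = two_stage_guardrail(
    ["hello", "attack now", "heart attack symptoms"], demo_fast, demo_deep
)
```

The design choice is that the detailed check runs only on the subset the fast check flags, so most traffic incurs just the first-pass latency.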

Quick comparison

| Model | Code | Inputs | Best for | Latency |
|---|---|---|---|---|
| TURING_LARGE | turing_large | Text, image, audio | Max accuracy, multimodal evals | Higher |
| TURING_SMALL | turing_small | Text, image | High fidelity, lower cost | Medium |
| TURING_FLASH | turing_flash | Text, image | Fast, high-accuracy evals | Low |
| PROTECT | protect | Text, audio | Safety, guardrails, user-defined rules | Low |
| PROTECT_FLASH | protect_flash | Text | First-pass binary filtering | Ultra-low |
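The comparison can also be encoded as data so that a pipeline picks a model code programmatically. The sketch below is illustrative and not part of the SDK. The helper `candidate_models`, the `kind` labels, and the numeric latency ranks are assumptions introduced here. Only the model codes, supported inputs, and latency labels come from the table.

```python
from typing import Iterable, List

# Transcribed from the comparison table. "kind" is an assumed label that
# separates the TURING evaluation models from the PROTECT guardrail models.
MODELS = {
    "turing_large": {"kind": "evaluation", "inputs": {"text", "image", "audio"}, "latency": "higher"},
    "turing_small": {"kind": "evaluation", "inputs": {"text", "image"}, "latency": "medium"},
    "turing_flash": {"kind": "evaluation", "inputs": {"text", "image"}, "latency": "low"},
    "protect": {"kind": "guardrail", "inputs": {"text", "audio"}, "latency": "low"},
    "protect_flash": {"kind": "guardrail", "inputs": {"text"}, "latency": "ultra-low"},
}

# Assumed ordering of the table's latency labels (lower rank = faster).
# These are relative ranks, not measured timings.
LATENCY_RANK = {"ultra-low": 0, "low": 1, "medium": 2, "higher": 3}


def candidate_models(
    modalities: Iterable[str],
    kind: str = "evaluation",
    max_latency: str = "higher",
) -> List[str]:
    """Return model codes that accept every requested modality, fastest first."""
    needed = set(modalities)
    budget = LATENCY_RANK[max_latency]
    matches = [
        code
        for code, spec in MODELS.items()
        if spec["kind"] == kind
        and needed <= spec["inputs"]  # model must accept all requested inputs
        and LATENCY_RANK[spec["latency"]] <= budget
    ]
    return sorted(matches, key=lambda code: LATENCY_RANK[MODELS[code]["latency"]])
```

For example, `candidate_models({"text", "audio"})` leaves only `turing_large`, because it is the only evaluation model in the table that accepts audio.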

How to

Select a model

When adding or configuring an evaluation on a dataset or run test, choose Use Future AGI Models and pick a model from the dropdown.

Pass the model name

Pass model_name in your evaluator.evaluate() call. Use the model code from the table above (e.g. turing_flash, turing_large, protect).

from fi.evals import Evaluator

evaluator = Evaluator(fi_api_key="...", fi_secret_key="...")

result = evaluator.evaluate(
    eval_templates="tone",
    inputs={"input": "Your text to evaluate."},
    model_name="turing_small",  # or turing_flash, turing_large, protect, protect_flash
)

Next Steps

Evaluate via Platform & SDK

Run evals from the UI or SDK.

Create custom evals

Define your own eval rules and choose a model to run them.

Eval groups

Run multiple evals together as a group.

Use custom models

Bring your own model for evaluations.

CI/CD pipeline

Run evals automatically in your pipeline.

Evaluation overview

How evaluation fits into the platform.
