Evaluation Groups

Organize multiple evaluations into groups and run them together across datasets, simulations, and more.

About

Evaluation groups are named collections of eval templates that you run together as a single unit. Instead of adding evals one by one every time, you define the set once and apply it anywhere on the platform.

This keeps your quality checks consistent. Whether you are running evals on a dataset, testing a simulation, or comparing prompt versions, the same group applies the same criteria every time, with no manual reconfiguration needed.
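
To make the idea concrete, here is a minimal sketch of a group as a named set of templates that expands to the same checks on every target. It is a plain-Python illustration, not the platform's SDK: the `EvalGroup` class, the `plan_runs` helper, and the template and target names are all hypothetical.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class EvalGroup:
    """Hypothetical model of an eval group: a name plus the templates it bundles."""
    name: str
    templates: tuple[str, ...]
    description: str = ""


def plan_runs(group: EvalGroup, targets: list[str]) -> list[tuple[str, str]]:
    """Return every (target, template) pair the group would cover."""
    return [(target, template) for target in targets for template in group.templates]


# Hypothetical group and targets, defined once and reused everywhere.
quality_suite = EvalGroup(
    name="quality-suite",
    templates=("tone", "safety", "accuracy", "relevance"),
)
runs = plan_runs(quality_suite, ["support-dataset", "checkout-simulation"])
for target, template in runs:
    print(f"{target}: {template}")
```

Because the template list lives in one place, every target gets the same four checks without any per-target setup.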

When to use

- Full quality suite on a dataset: Run tone, safety, accuracy, and relevance checks together in one pass instead of configuring each eval separately.

- Standardized release checks: Apply the same eval group before every model or prompt update so every release is measured against the same bar.

- Consistent comparison across versions: Evaluate multiple prompt or model versions with the same group so results are directly comparable.

- Reusable test suites for simulations: Attach a group to a simulation run and test every scenario against your full criteria automatically.

How to

Follow the steps below to create and apply an eval group.

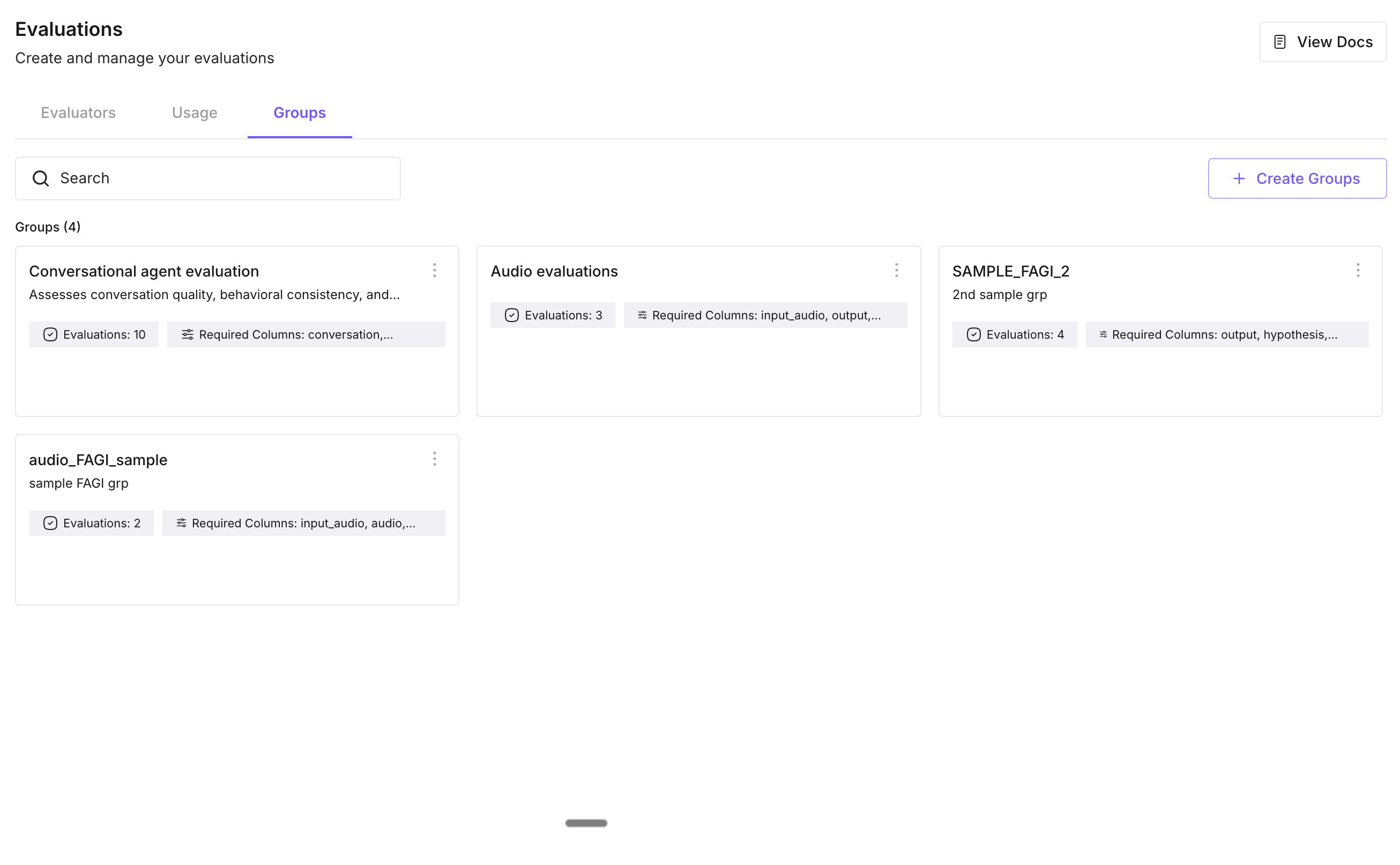

Open eval groups in Evaluation

Go to Evaluation in the platform and open Eval groups (or Groups). You’ll see your existing eval groups and an option to create a new one.

Create a new group

Click Create (or New group). The create-group flow opens.

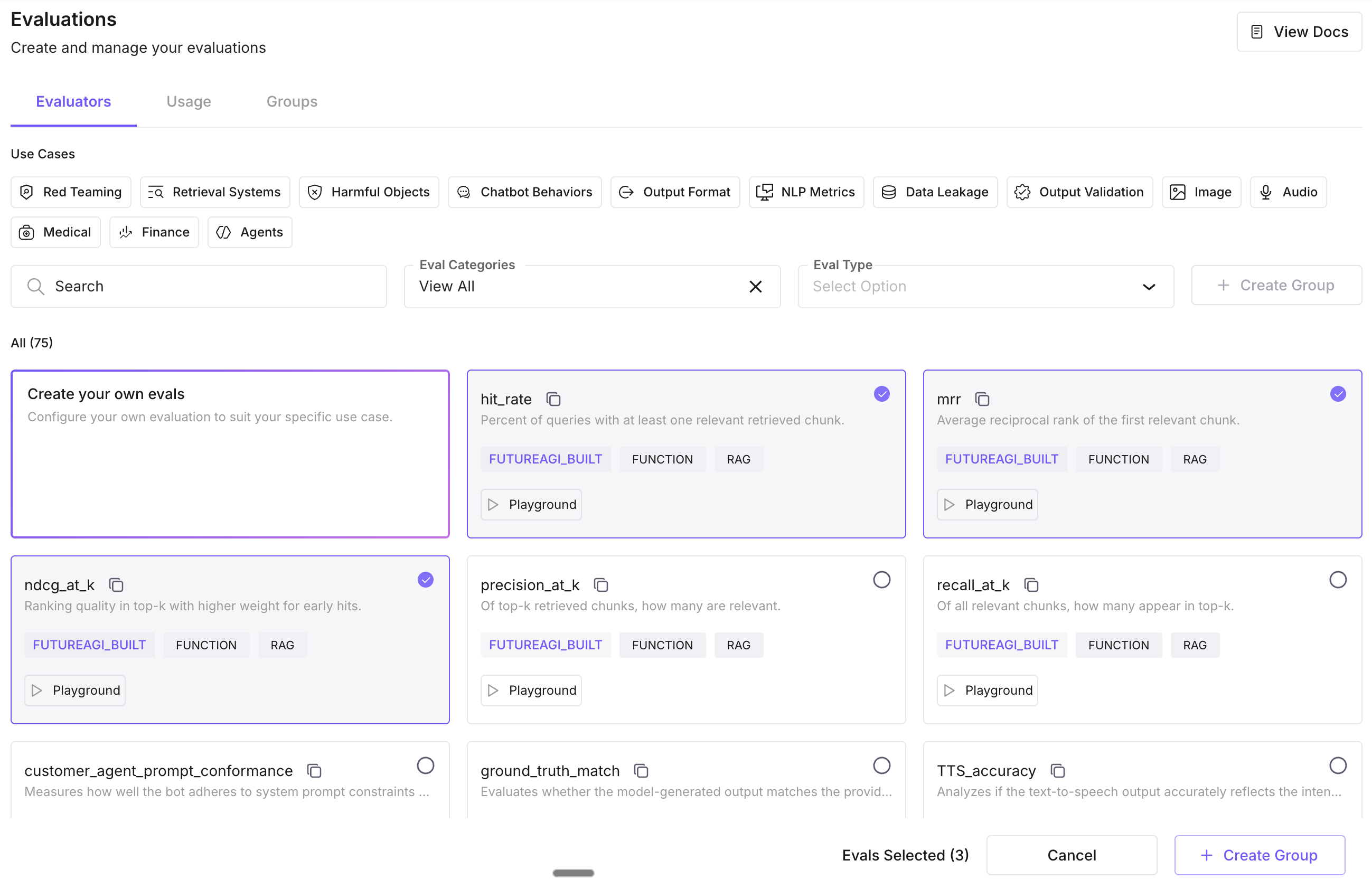

Select evals

Choose every eval template you want to run together in this group. You can add multiple templates and mix built-in and custom evals.

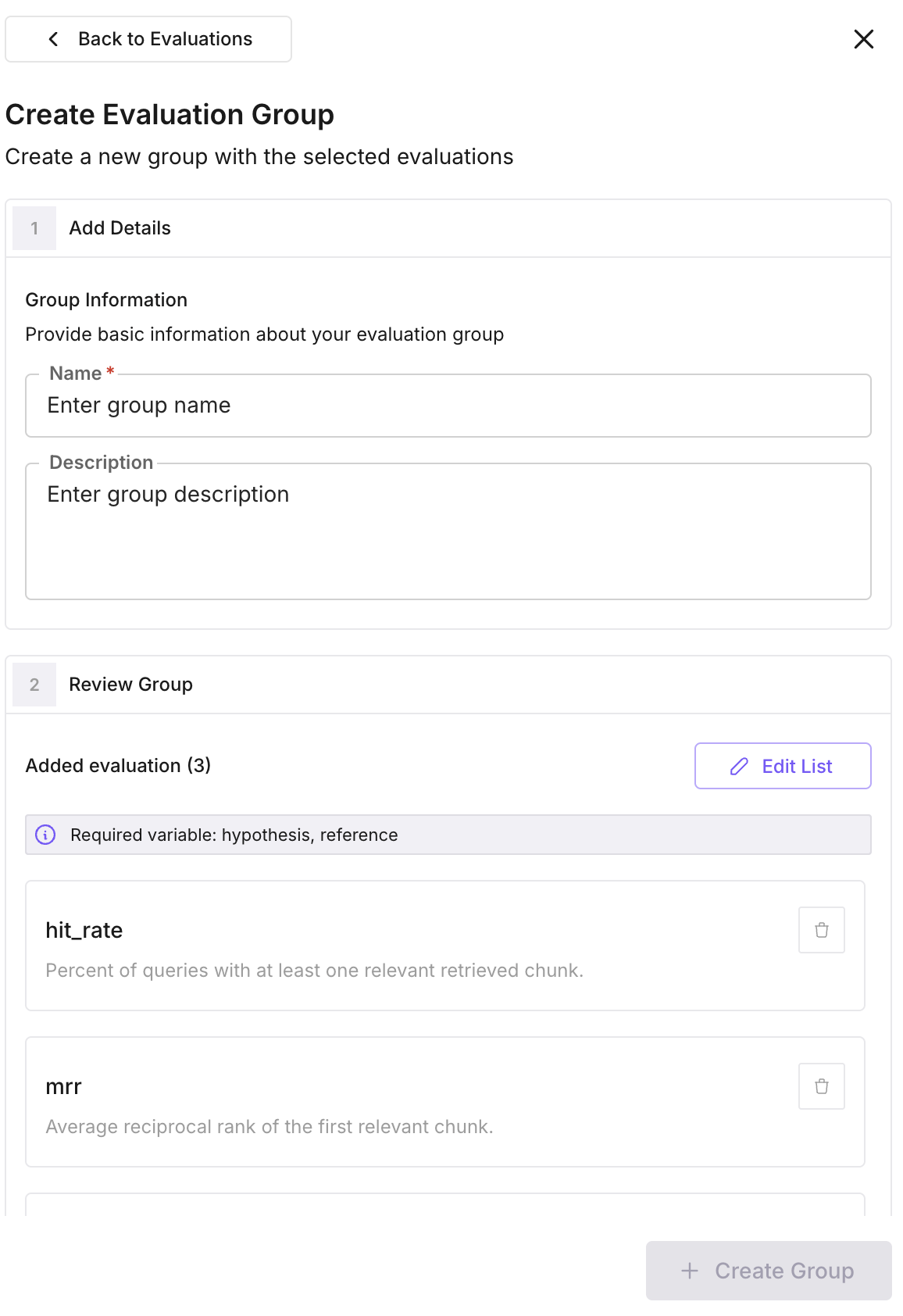

Add name and description, then save

Enter a name and an optional description for the group, then save. The group appears in your eval groups list and is ready to apply.

Apply the group

Open the context where you want to run evals: a dataset, a run test in Simulate, an experiment, or a prompt in Prompt Workbench. Choose Apply eval group and select your group.

Configure and run

Set the column mapping so each eval knows which field to use as input and which as output. Optionally, pick a model and enable error localization. If you don’t want to run every eval in the group, deselect the ones to skip. Click Apply and the platform runs all selected evals together.

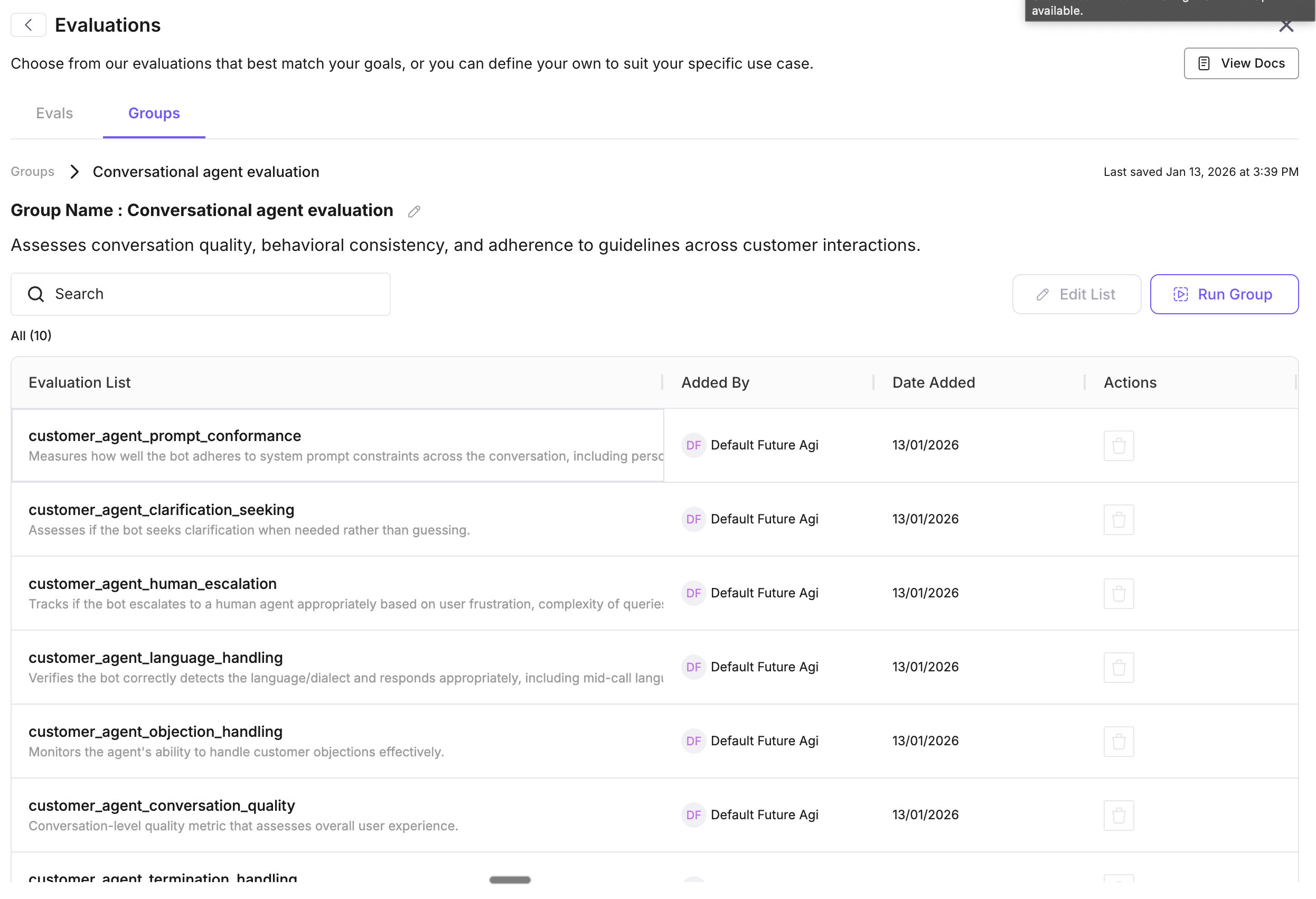
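
The sketch below shows what a column mapping and a deselection amount to conceptually. It is plain Python, not the platform's API: the field names `input` and `output`, the column names, the sample row, and the `resolve_inputs` helper are assumptions made for illustration.

```python
def resolve_inputs(row, mapping):
    """Pick the columns an eval needs from a row, keyed by the eval's field names."""
    missing = [column for column in mapping.values() if column not in row]
    if missing:
        raise KeyError(f"columns not found in row: {missing}")
    return {field: row[column] for field, column in mapping.items()}


# Hypothetical group contents, with one eval deselected for this run.
group_templates = ["tone", "safety", "accuracy", "relevance"]
deselected = {"tone"}
selected = [t for t in group_templates if t not in deselected]

# Hypothetical mapping: eval field name -> dataset column name.
mapping = {"input": "user_question", "output": "model_answer"}
row = {
    "user_question": "How do I reset my password?",
    "model_answer": "Use the Forgot password link on the sign-in page.",
    "ticket_id": 17,
}

eval_inputs = resolve_inputs(row, mapping)
for template in selected:
    print(template, "->", sorted(eval_inputs))
```

Columns that are not mapped, such as `ticket_id` here, are simply ignored, and a mapping that points at a missing column fails early instead of producing an empty eval input.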

Edit or reuse the group

You can edit an existing group to add or remove eval templates. To reuse the same group on another dataset, run test, or experiment, apply it again and adjust the column mapping for that context.
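
As a rough illustration of editing and reuse, the sketch below changes a group's template list once and then pairs it with a different column mapping per context. Everything here is hypothetical (the `edit_templates` helper, the context names, and the column names); it models the idea rather than any real platform call.

```python
def edit_templates(templates, add=(), remove=()):
    """Return a new template list: removals dropped, additions appended once."""
    kept = [t for t in templates if t not in remove]
    for template in add:
        if template not in kept:
            kept.append(template)
    return kept


# Edit the group once: drop one template, add another.
templates = ["tone", "safety", "accuracy"]
templates = edit_templates(templates, add=["relevance"], remove=["tone"])

# Reuse it in two contexts; only the column mapping differs (names are made up).
mappings_by_context = {
    "support-dataset": {"input": "user_question", "output": "model_answer"},
    "checkout-experiment": {"input": "prompt", "output": "completion"},
}
plans = {
    context: {"templates": list(templates), "mapping": mapping}
    for context, mapping in mappings_by_context.items()
}
for context, plan in plans.items():
    print(context, plan["templates"], plan["mapping"])
```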

Tip

Eval groups can be used across the platform: on datasets, in simulations, and on traces. See Evaluate via Platform & SDK to get started.

Next steps

Evaluate via Platform & SDK

Run a single eval from the UI or SDK.

Create custom evals

Define your own eval rules and criteria.

Use custom models

Bring your own model for evaluations.

Future AGI models

Built-in models available for evals.

CI/CD pipeline

Run evals automatically in your pipeline.

Evaluation overview

How evaluation fits into the platform.