Evaluate Tool Calling: Agent Function Use in Simulations

Evaluate the tool-calling capabilities of your AI agent during Future AGI simulation runs. Test whether agents invoke the correct tools and parameters.

About

Tool call evaluation scores how well your agent uses tools during simulated conversations: whether it called the right tool, with the right arguments, at the right time. Enable it on a run test, and after each conversation completes the platform extracts every tool call and shows a Pass/Fail result with a reason alongside your other eval metrics.
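To make the three checks (right tool, right arguments, right time) concrete, here is a minimal rule-based sketch in Python. It is an illustration only and says nothing about how the platform scores calls internally. `ToolCall`, `Expectation`, and `evaluate_tool_call` are hypothetical names invented for this example, not part of any Future AGI SDK.

```python
from dataclasses import dataclass, field


@dataclass
class ToolCall:
    """Hypothetical record of one tool call extracted from a conversation."""
    name: str
    arguments: dict
    turn: int  # index of the conversation turn where the call happened


@dataclass
class Expectation:
    """Hypothetical description of the call the agent was expected to make."""
    name: str
    required_arguments: dict = field(default_factory=dict)
    earliest_turn: int = 0  # the call should not happen before this turn


def evaluate_tool_call(call: ToolCall, expected: Expectation) -> tuple[str, str]:
    """Return a (result, reason) pair, mirroring the Pass/Fail plus reason shape."""
    # Check 1: right tool
    if call.name != expected.name:
        return "Fail", f"wrong tool: expected {expected.name!r}, got {call.name!r}"
    # Check 2: right arguments (only the required ones are compared)
    for key, value in expected.required_arguments.items():
        actual = call.arguments.get(key)
        if actual != value:
            return "Fail", f"wrong argument {key!r}: expected {value!r}, got {actual!r}"
    # Check 3: right time
    if call.turn < expected.earliest_turn:
        return "Fail", f"called too early: turn {call.turn} is before turn {expected.earliest_turn}"
    return "Pass", "right tool, right arguments, right time"


# Assumed example: the agent should transfer to billing, no earlier than turn 2.
expected = Expectation("transfer", {"department": "billing"}, earliest_turn=2)
good = evaluate_tool_call(ToolCall("transfer", {"department": "billing"}, turn=3), expected)
bad = evaluate_tool_call(ToolCall("transfer", {"department": "sales"}, turn=3), expected)
print(good)
print(bad)
```

The sketch returns on the first failed check so that each result carries a single reason, which matches the one-result-one-reason shape described above.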

Note

Your agent must be deployed with tool calling enabled to be evaluated. Enable tool call evaluation only for run tests where the agent under test actually uses tools.

When to use

- Check tool usage: Confirm the agent invokes the right tools (e.g. transfer, end call) when it should and with the right inputs.

- Catch misuse: See which tool calls failed evaluation (wrong tool, wrong arguments, or used at the wrong time).

- Compare across runs: After changing prompts or tool definitions, re-run and compare tool-eval results to spot regressions.
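The run-to-run comparison can be sketched as a small diff over Pass/Fail results. This assumes you have copied the results of two executions into plain dictionaries keyed by a label of your choosing. The label format and the `find_regressions` helper are hypothetical, and no export API is implied.

```python
def find_regressions(baseline: dict[str, str], candidate: dict[str, str]) -> list[str]:
    """Return labels that passed in the baseline run but did not pass in the candidate run.

    A label missing from the candidate run is also reported, since a tool call
    that used to happen and pass is no longer there.
    """
    regressions = []
    for label, result in baseline.items():
        if result == "Pass" and candidate.get(label) != "Pass":
            regressions.append(label)
    return sorted(regressions)


# Hand-copied, made-up results from two executions of the same run test.
baseline = {"Transfer #1": "Pass", "End call #1": "Pass"}
candidate = {"Transfer #1": "Fail", "End call #1": "Pass"}

print(find_regressions(baseline, candidate))
```

Only Pass-to-not-Pass transitions are reported. Calls that failed in both runs are left out because they are not new regressions.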

How to

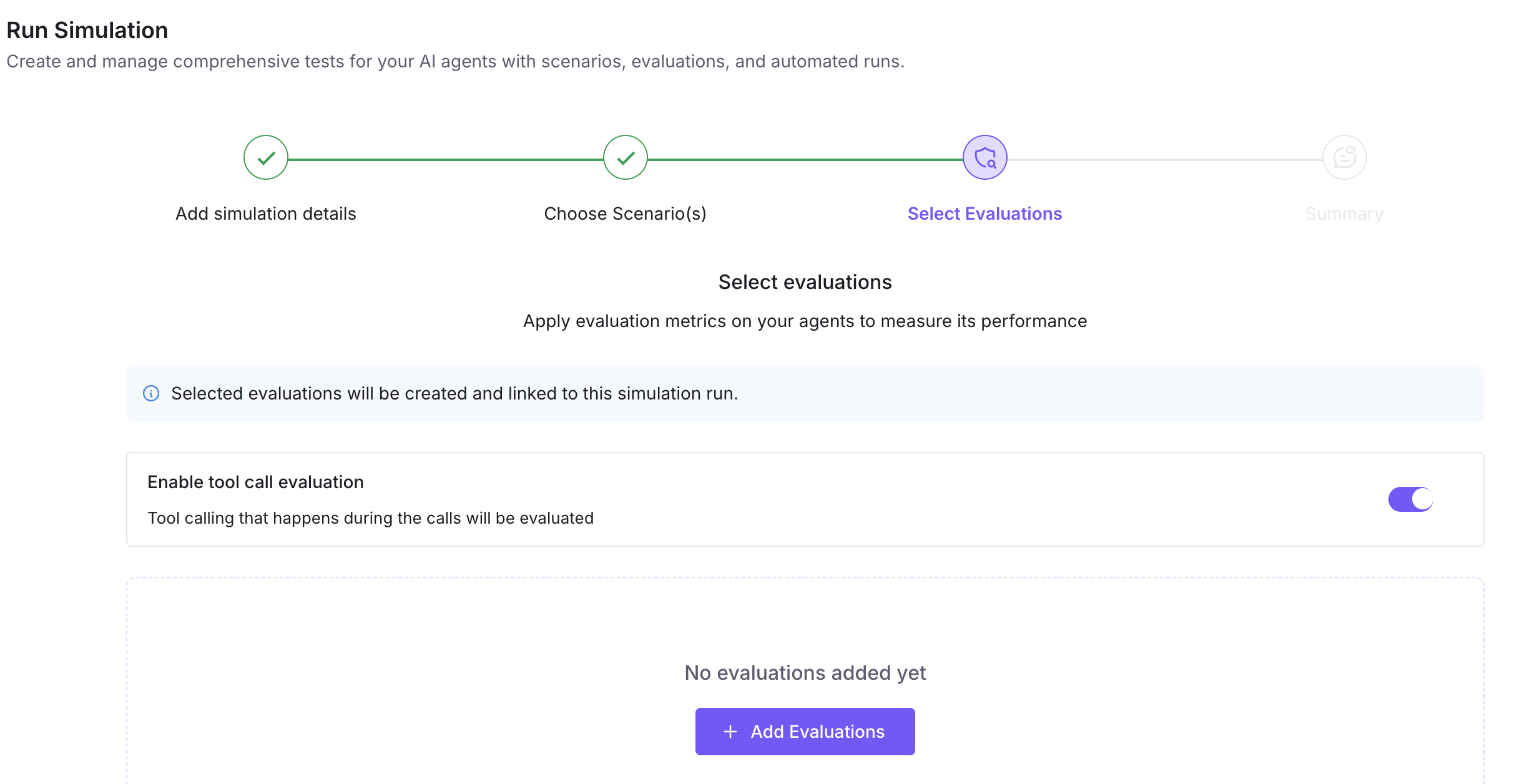

You enable tool call evaluation when creating or editing a run test (agent-based simulation). It is a toggle in the Select Evaluations step. For voice agents, the platform may prompt you for an API Key and Assistant ID so it can access your provider’s call data and extract tool calls.

Create or open a simulation run and choose scenarios

Go to Simulate → Run Simulation. Click Create a Simulation (or open an existing run). In Add simulation details, enter a name and select an Agent definition and Agent version. For voice agents with tool call evaluation, a version is required so the platform can use its API Key and Assistant ID. In Choose Scenario(s), select one or more scenarios to run against. Click Next to go to Select Evaluations.

Turn on tool call evaluation

In Select Evaluations, turn on Enable tool call evaluation. The platform will then run tool-call evaluation after each conversation in the run. You can also add other evaluations (task completion, tone, etc.) in this step. Click Next to go to Summary.

Provide API Key and Assistant ID when prompted (voice only)

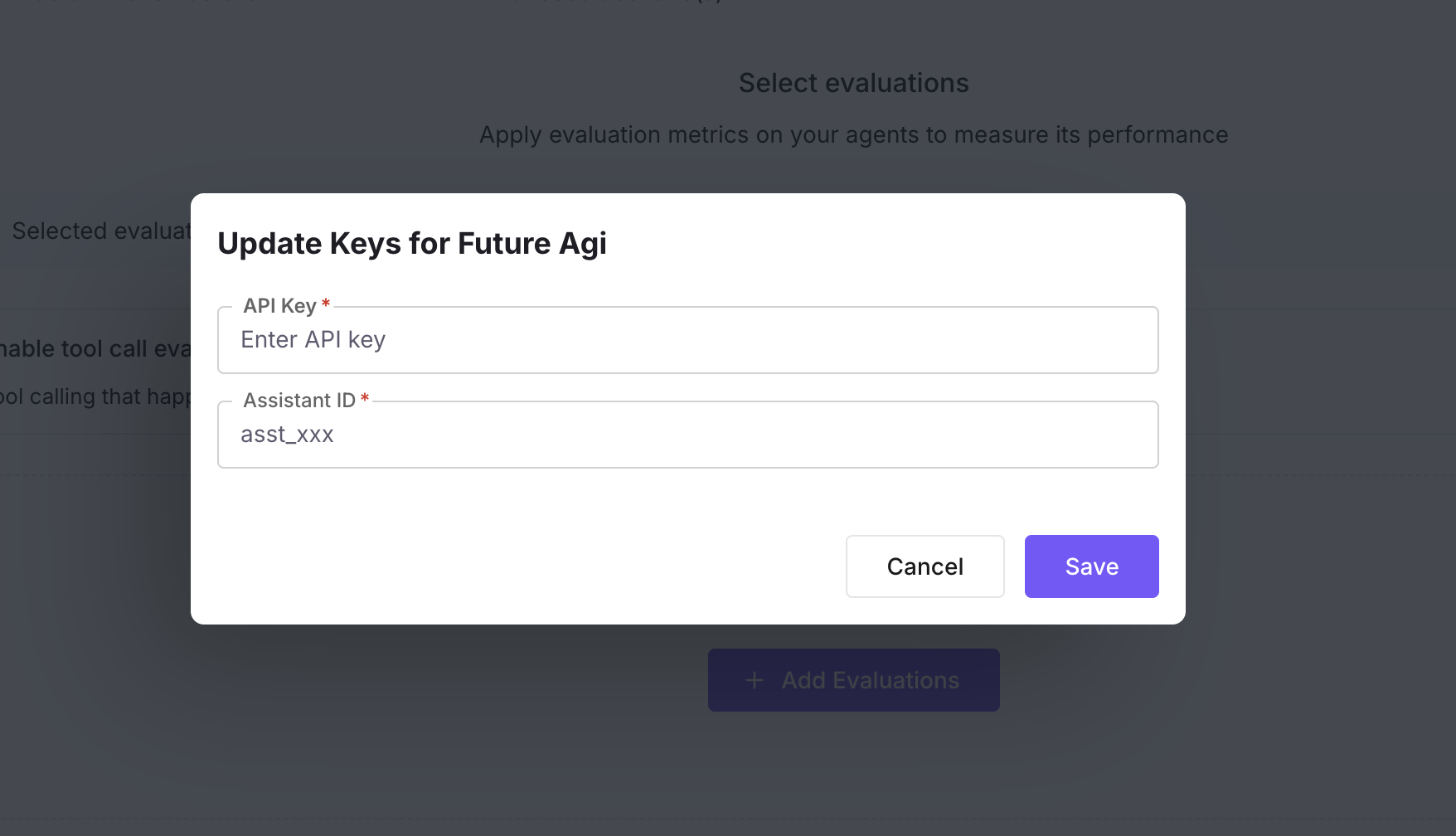

For voice agents, the platform needs your provider API Key and Assistant ID (for the agent you’re evaluating) to fetch call data and extract tool calls. If the selected agent version doesn’t have these set, you’ll be prompted (e.g. Update Keys for test) when you enable tool call evaluation or when you save. Enter the API key and assistant ID; they are stored on the agent version. For chat agents, tool calls come from conversation data, so no keys are required.
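The rule for when keys are needed can be written down as a small check. This is a sketch of the rule as described here, not platform code. `AgentVersion`, its field names, and `missing_keys` are hypothetical.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass
class AgentVersion:
    """Hypothetical stand-in for the agent version selected on a run test."""
    agent_type: str  # "voice" or "chat"
    api_key: Optional[str] = None
    assistant_id: Optional[str] = None


def missing_keys(version: AgentVersion, tool_eval_enabled: bool) -> list[str]:
    """List the credentials still needed before tool call evaluation can run."""
    # Chat agents, and runs without tool call evaluation, need no provider keys.
    if not tool_eval_enabled or version.agent_type != "voice":
        return []
    missing = []
    if not version.api_key:
        missing.append("api_key")
    if not version.assistant_id:
        missing.append("assistant_id")
    return missing


voice_without_keys = AgentVersion(agent_type="voice")
chat_agent = AgentVersion(agent_type="chat")

print(missing_keys(voice_without_keys, tool_eval_enabled=True))
print(missing_keys(chat_agent, tool_eval_enabled=True))
```

A non-empty list corresponds to the situation in which you would be prompted to update keys.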

Complete Summary and run

In Summary, review the run test configuration, then create or save the run test. Open it and click Execute to start a test execution. When each conversation completes, the platform runs your evals and, if tool call evaluation is on, evaluates each tool call and attaches results to that call.

View tool evaluation results

Open the execution detail for the run. Tool call results appear with your other evaluation metrics, as columns or rows per tool call (e.g. “Transfer #1”, “End call #1”), each with a result (Pass/Fail) and a reason. Use them to see which tool calls passed or failed and why.
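Labels such as “Transfer #1” suggest that each tool call is numbered per tool within a conversation. The sketch below assumes that numbering scheme and shows how such labels could be built and how failed calls could be picked out for review. `label_tool_calls` and `failed_calls` are hypothetical helpers, and the sample results are made up.

```python
from collections import Counter


def label_tool_calls(tool_names: list[str]) -> list[str]:
    """Number each call per tool, in conversation order (assumed scheme)."""
    seen = Counter()
    labels = []
    for name in tool_names:
        seen[name] += 1
        labels.append(f"{name} #{seen[name]}")
    return labels


def failed_calls(results: list[tuple[str, str, str]]) -> list[tuple[str, str]]:
    """Keep (label, reason) for every result whose outcome is "Fail"."""
    return [(label, reason) for label, outcome, reason in results if outcome == "Fail"]


labels = label_tool_calls(["Transfer", "Transfer", "End call"])

# Made-up outcomes and reasons, one per labeled tool call.
results = [
    (labels[0], "Pass", "transferred to the requested department"),
    (labels[1], "Fail", "transferred again after the issue was resolved"),
    (labels[2], "Pass", "ended the call after confirmation"),
]

print(labels)
print(failed_calls(results))
```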

Notes

- Voice only: API Key and Assistant ID are required for voice run tests when tool call evaluation is enabled, so the platform can pull call data from your provider. For chat run tests, tool calls are taken from stored conversation data.

- Agent version: The keys are stored with the agent version you selected for the run test. If you switch to another version, you may need to update keys for that version if it uses a different assistant or provider.

- No tool calls: If a conversation has no tool calls, tool call evaluation produces no results for that conversation; other evals still run as usual.
