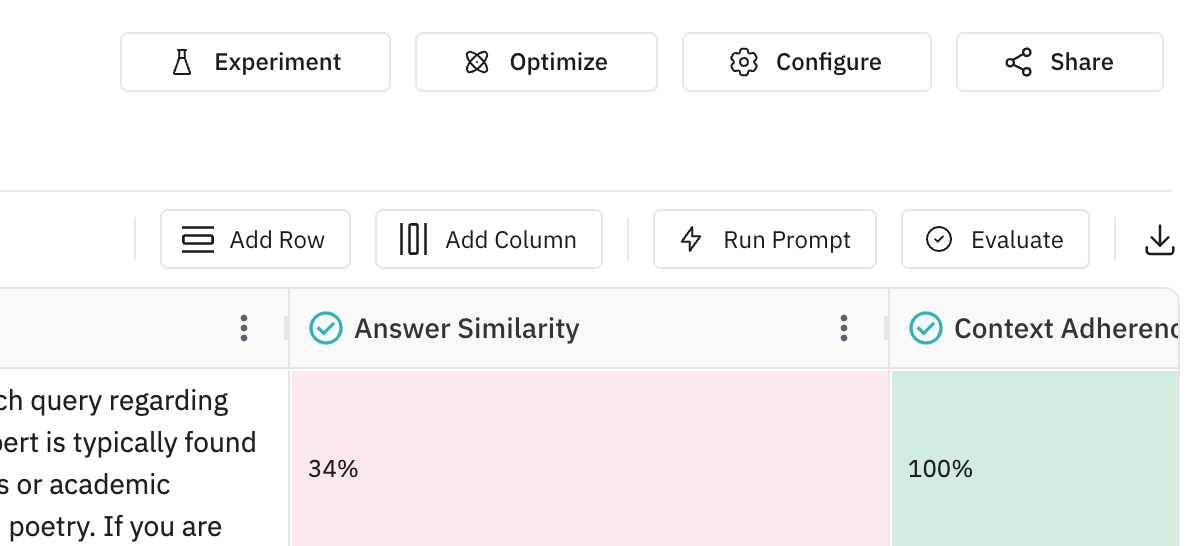

Run Prompt in a Dataset: Generate LLM Columns in Future AGI

Add a dynamic column to your dataset by running an LLM, TTS, STT, or image generation model on every row using a prompt with column placeholders.

About

Run Prompt lets you add a new column to your dataset that is filled by a model (LLM, Text-to-Speech, Speech-to-Text, or Image Generation). You define a prompt (messages with placeholders that pull from other columns), pick a model and settings, and the system runs the prompt on each row and writes the model output into that column. The result is a dynamic column of responses you can use for evals, comparison, or export.

When to use

- Generate answers or text: Use an LLM to answer questions, summarize, or complete text per row (e.g. a column of questions produces a column of answers).

- Produce audio: Use Text-to-Speech to turn a text column into an audio column (e.g. scripts to voice clips).

- Transcribe audio: Use Speech-to-Text to turn an audio column into a text column for evals or search.

- Batch test a prompt: Run the same prompt across many rows to see how the model behaves and then run evals on the outputs.

- Generate images: Use Image Generation to create images from text (or text + image) per row; the new column stores image URLs.

- Structured output: Use response format (e.g. JSON schema) to get structured fields (object, array) in the new column for downstream use.
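
As a rough illustration of the structured-output case, the sketch below shows a JSON schema written as a Python dict and a small check that a cell contains the required fields. The schema, its field names (`answer`, `sources`), and the `missing_fields` helper are hypothetical and are not part of Future AGI; the exact response-format syntax the product accepts may differ.

```python
import json

# Hypothetical schema for a per-row answer; field names are illustrative only.
answer_schema = {
    "type": "object",
    "properties": {
        "answer": {"type": "string"},
        "sources": {"type": "array", "items": {"type": "string"}},
    },
    "required": ["answer", "sources"],
}


def missing_fields(cell: str, schema: dict) -> list:
    """Parse a cell as JSON and return the required keys that are absent."""
    data = json.loads(cell)
    return [key for key in schema["required"] if key not in data]


cell = '{"answer": "Paris", "sources": ["atlas"]}'
print(missing_fields(cell, answer_schema))
```

A check like this can be run on exported cells before downstream code relies on the fields being present.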

How to

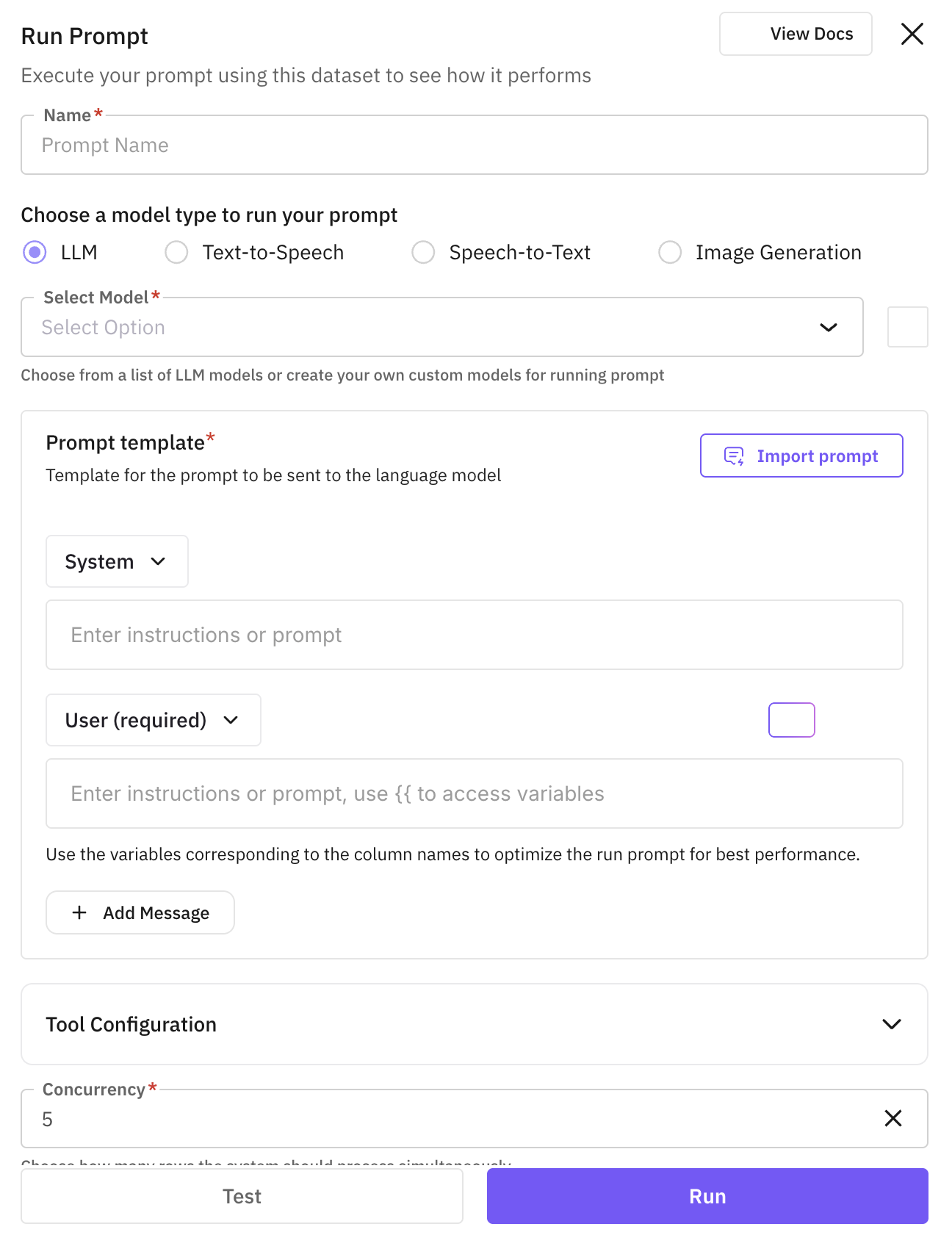

Navigate to Run Prompt

Click the “Run Prompt” button (e.g. in the top-right or dataset toolbar) to add a new run-prompt column. This creates a dynamic column that will store the model output for each row.

Assign prompt name

Enter a name for the prompt. This name is used as the new column name in your dataset. Each row will have one cell in this column holding the model response for that row.

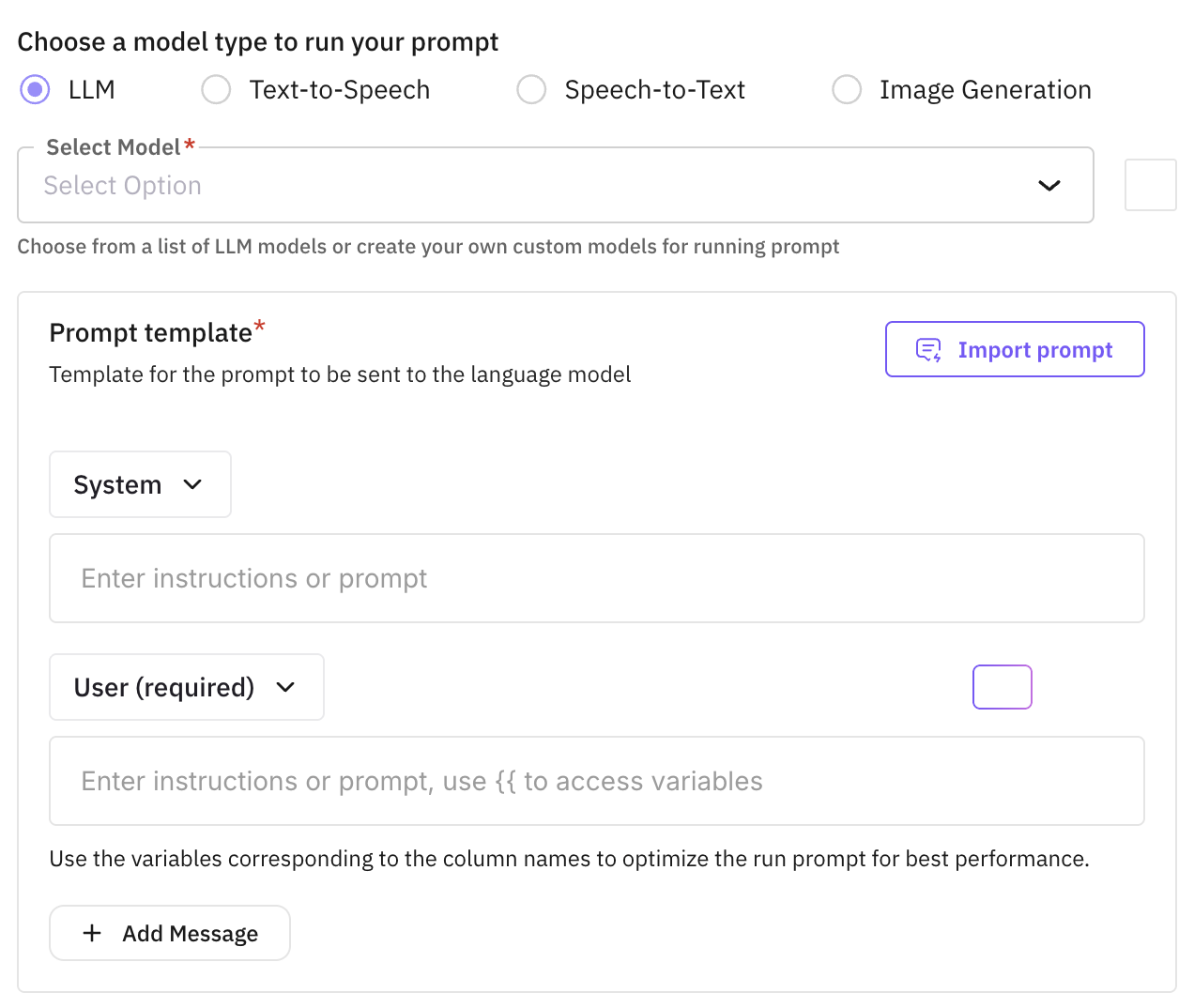

Choose model type and model

Select the type of task, then pick the model to use. Models are filtered by type; you need an API key (or custom model) for the chosen provider.

Choose LLM for text generation (chat). Use for Q&A, summarization, or any text-in, text-out task. Select a chat model from the list; ensure the provider has an API key configured.

Tip

See the custom models documentation to learn how to create custom models.

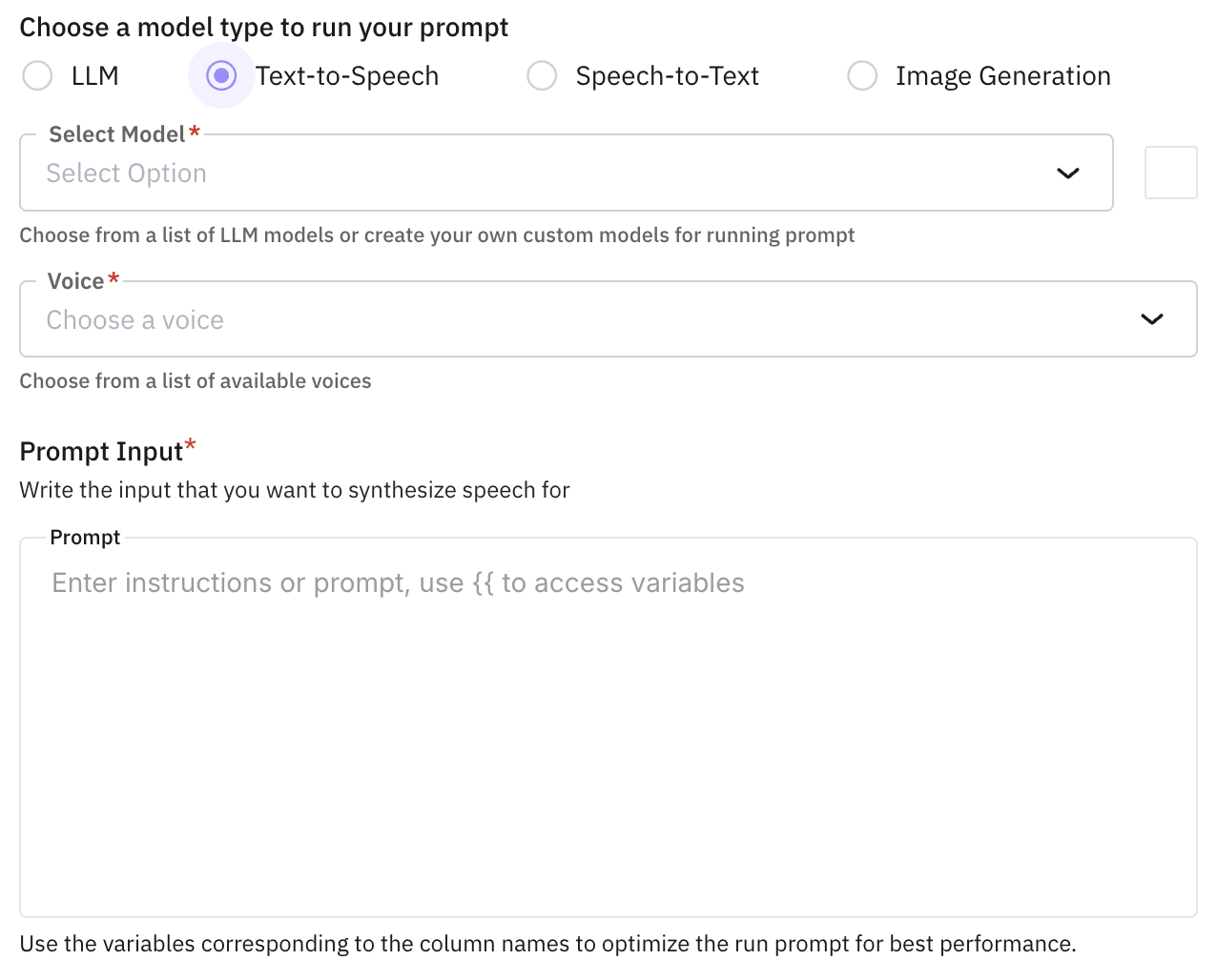

Choose Text-to-Speech to generate audio from text. The prompt output column will store audio (e.g. URLs). You can configure voice and format for supported TTS models.

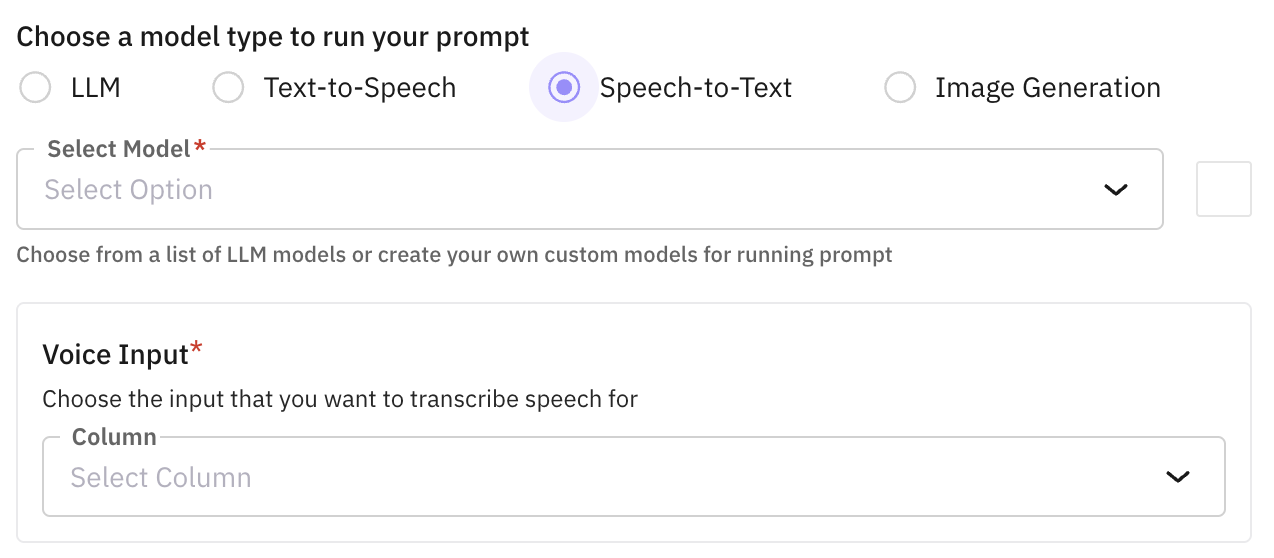

Choose Speech-to-Text to transcribe audio into text. Use when a column contains audio; the model output will be text in the new column.

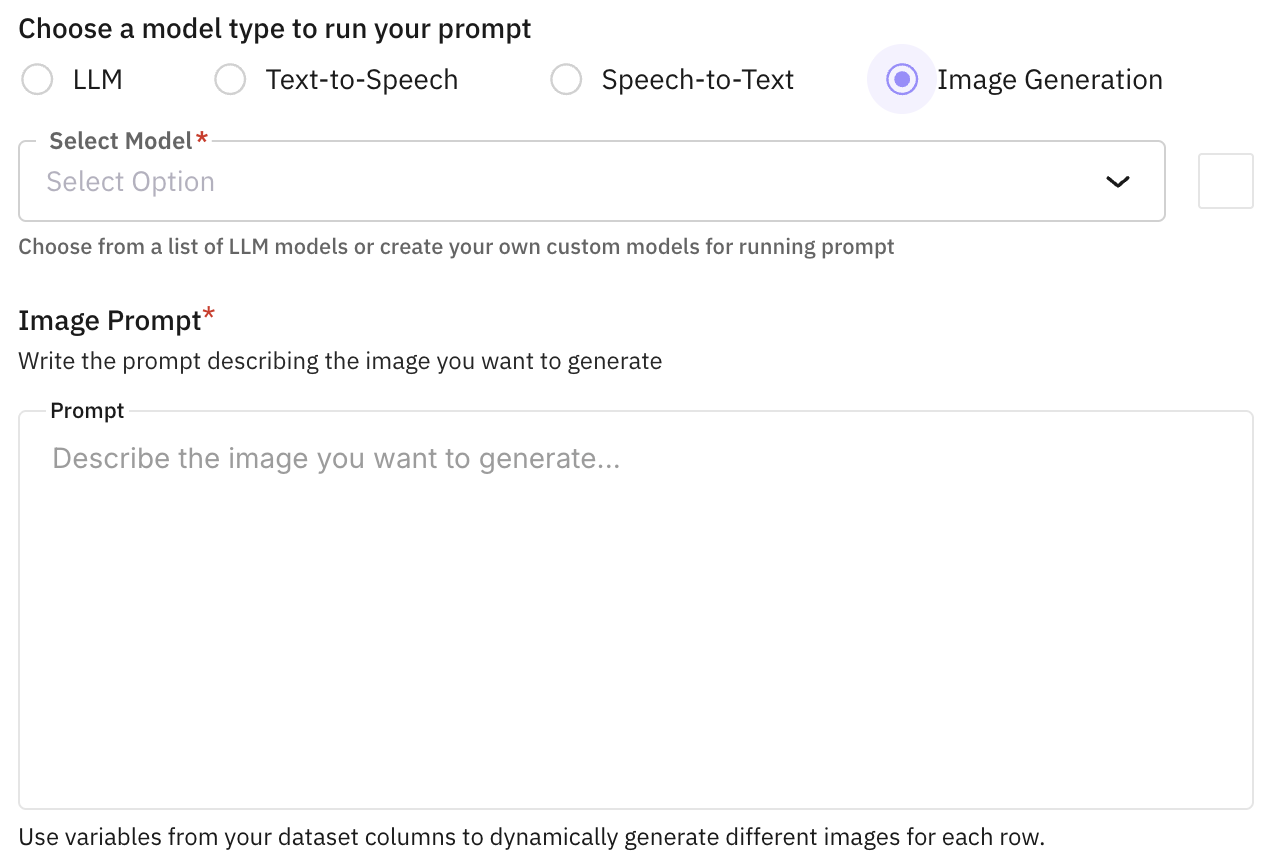

Choose Image Generation to create images from text (or image + text) prompts. The prompt output column will store image URLs. Select an image-generation model and ensure the provider has an API key configured.

Build the prompt

Define the prompt as a list of messages with roles:

- System (optional): Instructions that guide the model’s behavior and set context.

- User (required): The main input message. The prompt will not run without it.

Use {{column_name}} placeholders to pull values from other columns. At runtime, these are replaced by the cell value for each row.

Example:

System: You are a helpful assistant that summarizes content.

User: Please summarize the following text: {{article_text}}

JSON dot notation: For JSON columns, access nested fields directly:

User: Based on this prompt: {{config.prompt}}, generate a response that addresses {{config.topic}}

Here, {{config.prompt}} accesses the prompt field within the config JSON column.
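
To make the substitution concrete, here is a minimal sketch of how placeholders could be resolved for one row. It is not Future AGI's implementation: the `render` function, the regular expression, and the sample row are assumptions made for illustration, and the sketch assumes a JSON column may be stored as a JSON string.

```python
import json
import re

# Matches {{name}} or {{name.nested.field}}; whitespace inside the braces is tolerated.
PLACEHOLDER = re.compile(r"\{\{\s*([A-Za-z_][\w.]*)\s*\}\}")


def render(template: str, row: dict) -> str:
    """Replace each {{column}} or {{column.path}} with the value from `row`."""

    def lookup(match: re.Match) -> str:
        column, *path = match.group(1).split(".")
        value = row[column]
        # Assumption: a JSON column may arrive as a string, so parse it first.
        if path and isinstance(value, str):
            value = json.loads(value)
        for key in path:
            value = value[key]
        return str(value)

    return PLACEHOLDER.sub(lookup, template)


# Hypothetical row with one text column and one JSON column.
row = {
    "article_text": "Cats sleep a lot.",
    "config": '{"prompt": "Explain tides", "topic": "the moon"}',
}

messages = [
    {"role": "system", "content": "You are a helpful assistant that summarizes content."},
    {"role": "user", "content": "Please summarize the following text: {{article_text}}"},
]
rendered = [{**m, "content": render(m["content"], row)} for m in messages]
print(rendered[1]["content"])
print(render("Based on this prompt: {{config.prompt}}, address {{config.topic}}", row))
```

In this sketch a placeholder that names a missing column raises a `KeyError`, which surfaces typos in column names early; how the product itself handles unknown placeholders is not stated here.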

Configure Model Parameters (optional)

Adjust settings such as temperature, max tokens, and top_p to control the model’s output, for example how random or how long each response is.
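
For orientation, the sketch below writes these settings as a Python dict with a small sanity check. The values, the `check_params` helper, and the ranges it enforces are illustrative assumptions; the parameter names and limits that actually apply depend on the model you select.

```python
# Illustrative settings only; exact names and allowed ranges depend on the model.
params = {
    "temperature": 0.2,  # lower values make output more deterministic
    "max_tokens": 256,   # upper bound on the length of each response
    "top_p": 1.0,        # nucleus sampling cutoff; 1.0 keeps the full distribution
}


def check_params(p: dict) -> list:
    """Return a list of human-readable problems; empty if the settings look sane."""
    problems = []
    if p["temperature"] < 0:
        problems.append("temperature must be non-negative")
    if not 0 < p["top_p"] <= 1:
        problems.append("top_p must be in (0, 1]")
    if p["max_tokens"] < 1:
        problems.append("max_tokens must be at least 1")
    return problems


print(check_params(params))
```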

Configure Tools (optional)

Add tools or functions that the model can use during execution. This enables the model to perform specific actions or access external capabilities.
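
As a hedged example of what a tool definition can look like, the sketch below uses the JSON Schema function-calling layout that several chat APIs share. The `get_weather` tool, its handler, and the `dispatch` helper are hypothetical, and the format the Run Prompt tool editor expects may differ.

```python
import json

# Hypothetical tool; the name, description, and parameters are illustrative.
get_weather_tool = {
    "type": "function",
    "function": {
        "name": "get_weather",
        "description": "Look up the current weather for a city.",
        "parameters": {
            "type": "object",
            "properties": {"city": {"type": "string"}},
            "required": ["city"],
        },
    },
}


def get_weather(city: str) -> str:
    # Stub standing in for a real lookup.
    return f"Sunny in {city}"


HANDLERS = {"get_weather": get_weather}


def dispatch(name: str, arguments_json: str) -> str:
    """Run the handler a model asked for, given its JSON-encoded arguments."""
    return HANDLERS[name](**json.loads(arguments_json))


print(dispatch("get_weather", '{"city": "Oslo"}'))
```

The definition tells the model which arguments it may supply; the dispatch step shows how a tool call returned by a model could be mapped back to real code.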

Configure Concurrency

Set the concurrency level to control how many prompt executions run in parallel. Higher concurrency speeds up processing but may consume more resources.
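
Conceptually, the concurrency level acts like a cap on the number of model calls in flight at once. The sketch below illustrates that idea with a thread pool; `fake_model` and `run_column` are hypothetical stand-ins and do not reflect how Future AGI schedules work internally.

```python
from concurrent.futures import ThreadPoolExecutor


def fake_model(prompt: str) -> str:
    # Stand-in for a real model call.
    return prompt.upper()


def run_column(prompts: list, concurrency: int) -> list:
    """Run the model on every prompt with at most `concurrency` calls in flight."""
    with ThreadPoolExecutor(max_workers=concurrency) as pool:
        # map() preserves input order, so outputs line up with rows.
        return list(pool.map(fake_model, prompts))


outputs = run_column(["a", "b", "c"], concurrency=2)
print(outputs)
```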

Run Prompt

Click the “Run” button to execute the prompt across your dataset. The responses will be generated and saved as a new dynamic column in your dataset.

Next Steps

Add Rows to Dataset

Add individual records or bulk import data rows to your dataset

Add Columns to Dataset

Extend your dataset structure with additional data fields

Experiments

Design and run controlled experiments to compare approaches

Annotate Dataset

Add metadata and annotations to enrich your dataset

Create New Dataset

Create another dataset using SDK, file upload, or synthetic generation
