Building Golden Datasets from Production Traces with Falcon AI

Turn production traces into a curated, ground-truthed golden dataset in one Falcon AI conversation.

| Time | Difficulty | Package |

|---|---|---|

| 15 min | Intermediate | fi-instrumentation-otel |

By the end of this cookbook you will have a balanced, ground-truthed regression dataset built from your own production traces, with an exact-match eval scoring agent predictions against expected categories.

- FutureAGI account → app.futureagi.com

- API keys:

FI_API_KEYandFI_SECRET_KEY(see Get your API keys) - A traced project with traces of varied quality. If you don’t have one, instrument any agent with the

Add tracingstep below.

Install

Install the FutureAGI instrumentation SDK and set your API keys.

pip install fi-instrumentation-otel traceai-openai openaiexport FI_API_KEY="your-fi-api-key"

export FI_SECRET_KEY="your-fi-secret-key"

export OPENAI_API_KEY="your-openai-key"What is Falcon AI?

Falcon AI is the AI assistant built into the FutureAGI dashboard. Open it from the sidebar and it picks up whatever page you’re viewing as context, so questions are answered against the trace, project, or dataset you’re already on.

It runs skills: slash commands that execute a structured workflow over the current context and produce a clickable artifact (a dataset, an eval run, a prompt diff). The five steps below add tracing to a classifier, then drive a single Falcon AI conversation that turns those traces into a curated, ground-truthed regression dataset with an exact-match eval.

Add tracing to your agent

Falcon AI does its work by reading your agent’s traces: a trace is the structured record of one request, broken into spans for each LLM call, tool invocation, or sub-step inside it. The agent has to be sending traces to FutureAGI before any of the next steps can run.

Three lines below set that up. OpenAIInstrumentor patches the OpenAI SDK so every API call is captured automatically. The @tracer.agent decorator on your agent’s entry point makes each classification appear as one parent span Falcon AI can filter on.

from fi_instrumentation import register, FITracer

from fi_instrumentation.fi_types import ProjectType

from traceai_openai import OpenAIInstrumentor

trace_provider = register(

project_type=ProjectType.OBSERVE,

project_name="email-triage-prod",

)

OpenAIInstrumentor().instrument(tracer_provider=trace_provider)

tracer = FITracer(trace_provider.get_tracer("email-triage-prod"))from openai import OpenAI

client = OpenAI()

# Replace this with your own agent's entry point.

# The @tracer.agent decorator makes each call show up as one parent span

# in your FutureAGI Tracing project, with the OpenAI calls nested underneath.

@tracer.agent(name="my_agent")

def my_agent(email_text: str) -> str:

response = client.chat.completions.create(

model="gpt-4o-mini",

messages=[

{"role": "system", "content": "Classify this email into one of: urgent, billing, technical, general, spam. Reply with just the category name."},

{"role": "user", "content": email_text},

],

)

return response.choices[0].message.content

# A classifier with a thin prompt will misclassify ambiguous emails (hostile tone

# over a small issue, multi-issue emails, etc.). Run a varied batch so Falcon AI

# has both clean classifications and likely misclassifications in the next step.

print(my_agent("Production is down. Payment processing has been failing for 30 minutes."))

print(my_agent("WORST SERVICE EVER. I have been on hold for 2 hours. CALL ME BACK."))

print(my_agent("I have a billing question and also my login is not working since yesterday."))

print(my_agent("Why am I being charged $499 when I signed up for the $49 plan? Please fix this or I am canceling."))

trace_provider.force_flush()Once traces are flowing, move on. For broader instrumentation patterns see Manual Tracing.

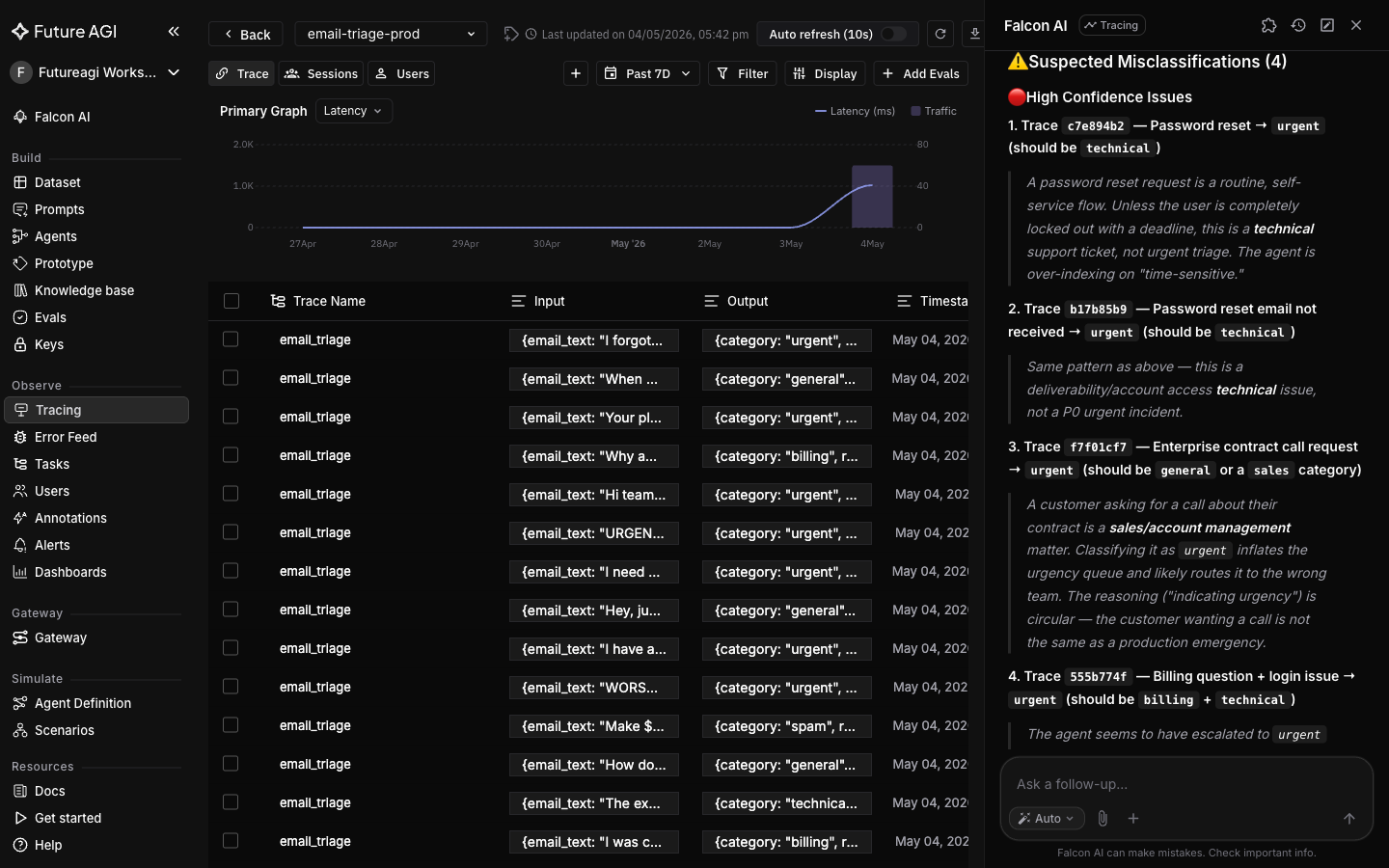

Explore failures with Falcon AI

Falcon AI picks up whatever page you’re viewing as context. So when you open the sidebar from your project’s Tracing page, every question is scoped to the traces in that project automatically.

Open the Falcon AI sidebar on the project. The context chip should show the project. Type:

What categories did my agent assign across these traces, and which ones look like misclassifications?

Tip

Cmd+K (Mac) or Ctrl+K (Windows) opens Falcon AI from anywhere in the dashboard, with the current page auto-attached as a context chip.

Falcon AI returns a category histogram and flags traces where the category looks off given the email content (your wording and counts will vary).

These flagged misclassifications are a strong starting point, not ground truth. You’ll confirm them in a later step.

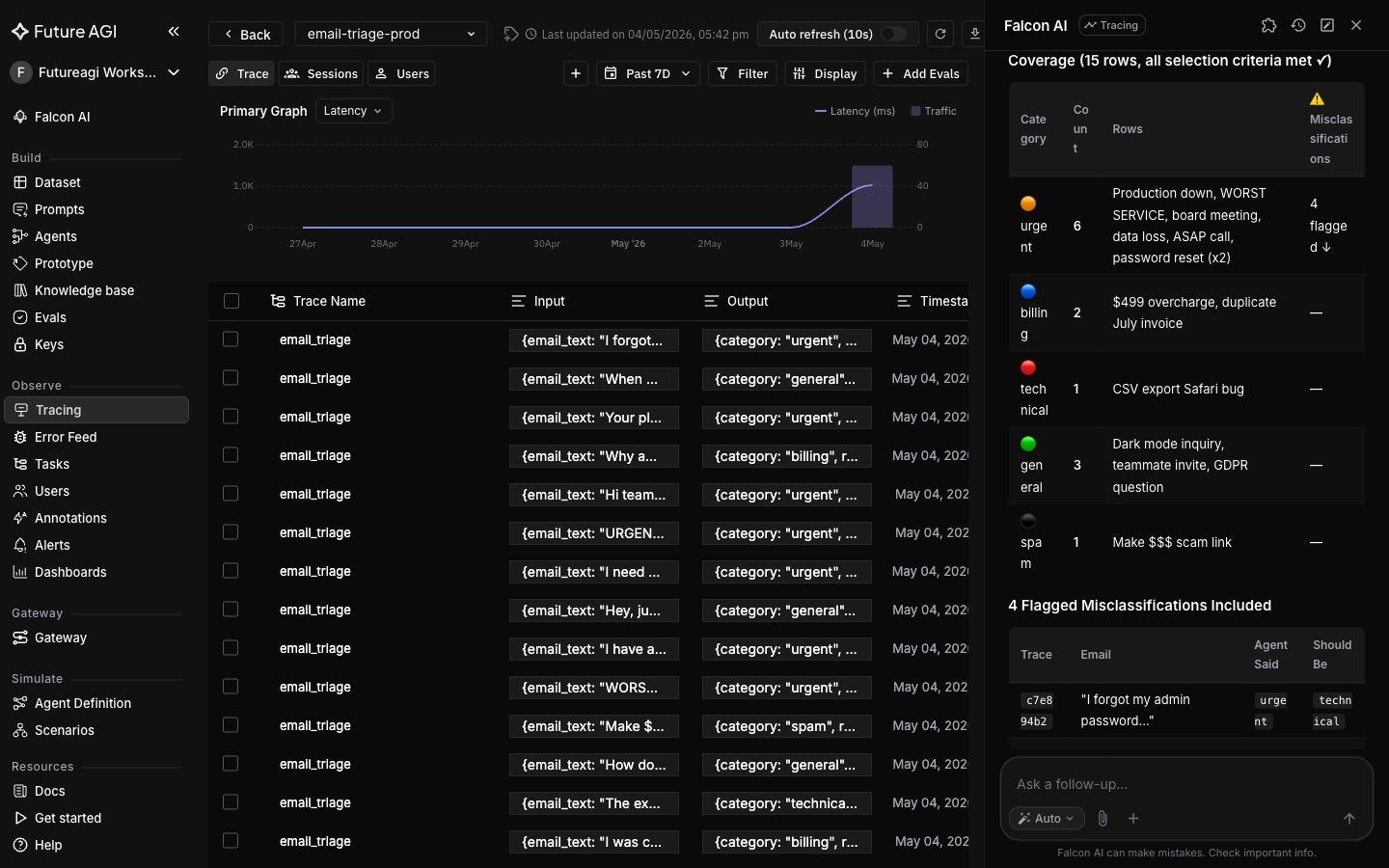

Build the dataset with curation criteria

/build-dataset reads the traces in context and writes the matching rows to a new dataset. The skill follows whatever selection criteria you give it, so the prompt below bakes in a coverage rule that mixes easy-pass rows with the misclassifications from the previous turn.

/build-dataset

Build a dataset called

email-triage-eval-v1. Pull rows from the traces in this project. Selection criteria: include at least 2 traces from each category (urgent, billing, technical, general, spam) plus the likely misclassifications you flagged in the previous turn. Total target: 12-15 rows. Columns:

email_text(text) - the user messagepredicted_category(text) - what the agent chosetrace_id(text) - so we can trace any failure back

Falcon AI orchestrates the underlying dataset tools (such as create_dataset, add_columns, add_dataset_rows) against the traces in context and returns a completion card with a link to the new dataset.

A dataset that is 90% successes won’t catch regressions; one that is 90% failures won’t catch false positives. The “at least 2 from each category plus the misclassifications” rule gives both classes meaningful coverage.

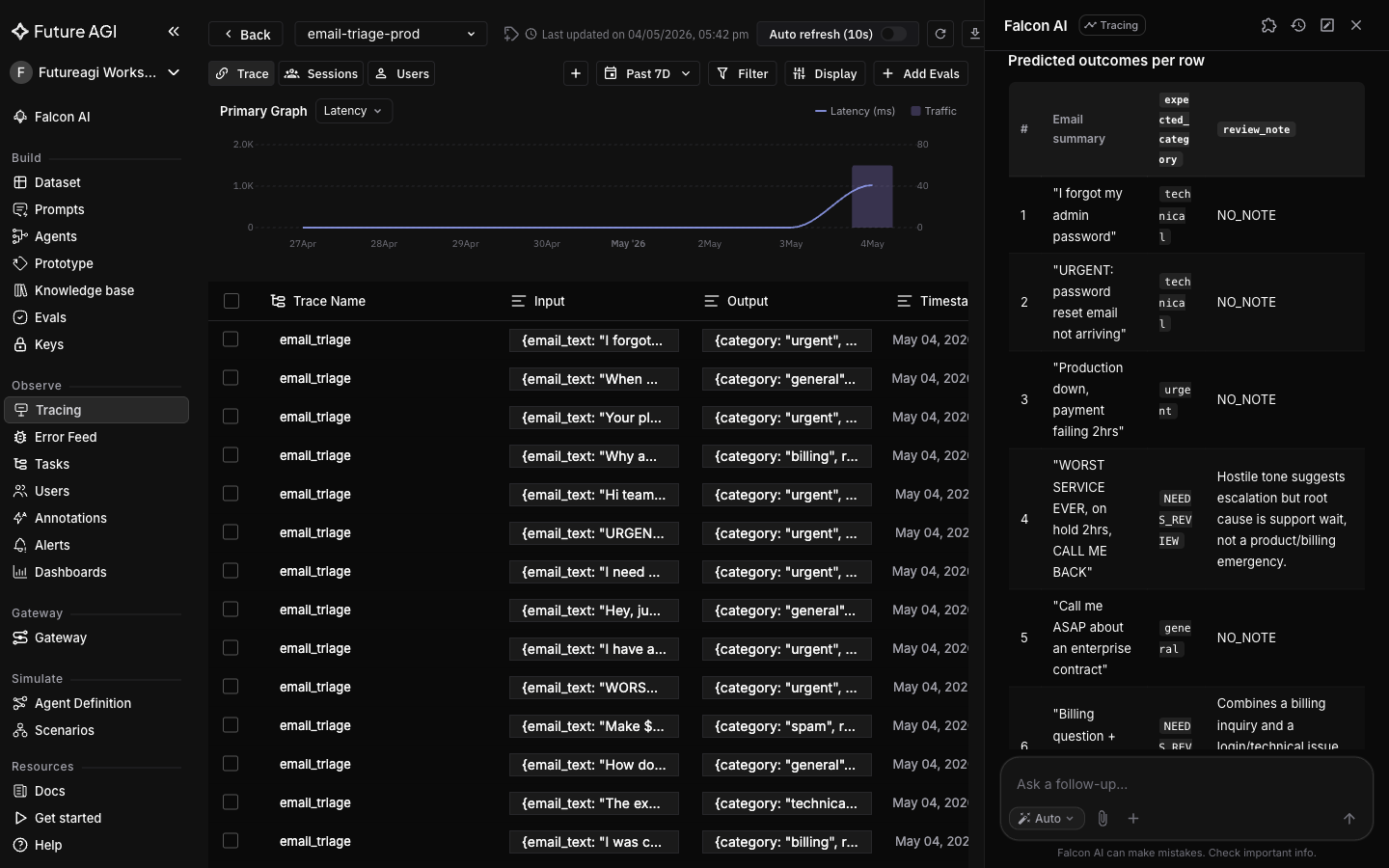

Add a ground truth column

predicted_category is what the agent chose. To turn the dataset into an eval, you need expected_category, what the agent should have chosen. For genuinely ambiguous rows (hostile tone over a small issue, multi-issue emails) there is no single correct answer, so we use a NEEDS_REVIEW value plus a review_note column to surface them for human judgment instead of poisoning the eval with arbitrary labels.

Add a column

expected_category(text) toemail-triage-eval-v1. For each row, propose the correct category based on the email text. For rows where the correct category is genuinely ambiguous (e.g., hostile tone over a small issue, multi-issue emails), use the valueNEEDS_REVIEWand add a one-sentence note in a new columnreview_note(text) explaining why.

Falcon AI populates both columns per row. Expect a split between confident expected_category values and a few rows tagged NEEDS_REVIEW.

Open the dataset in Datasets → email-triage-eval-v1, click each NEEDS_REVIEW row, and decide based on your team’s routing rules. Edit the rows in the UI or ask Falcon AI to update them.

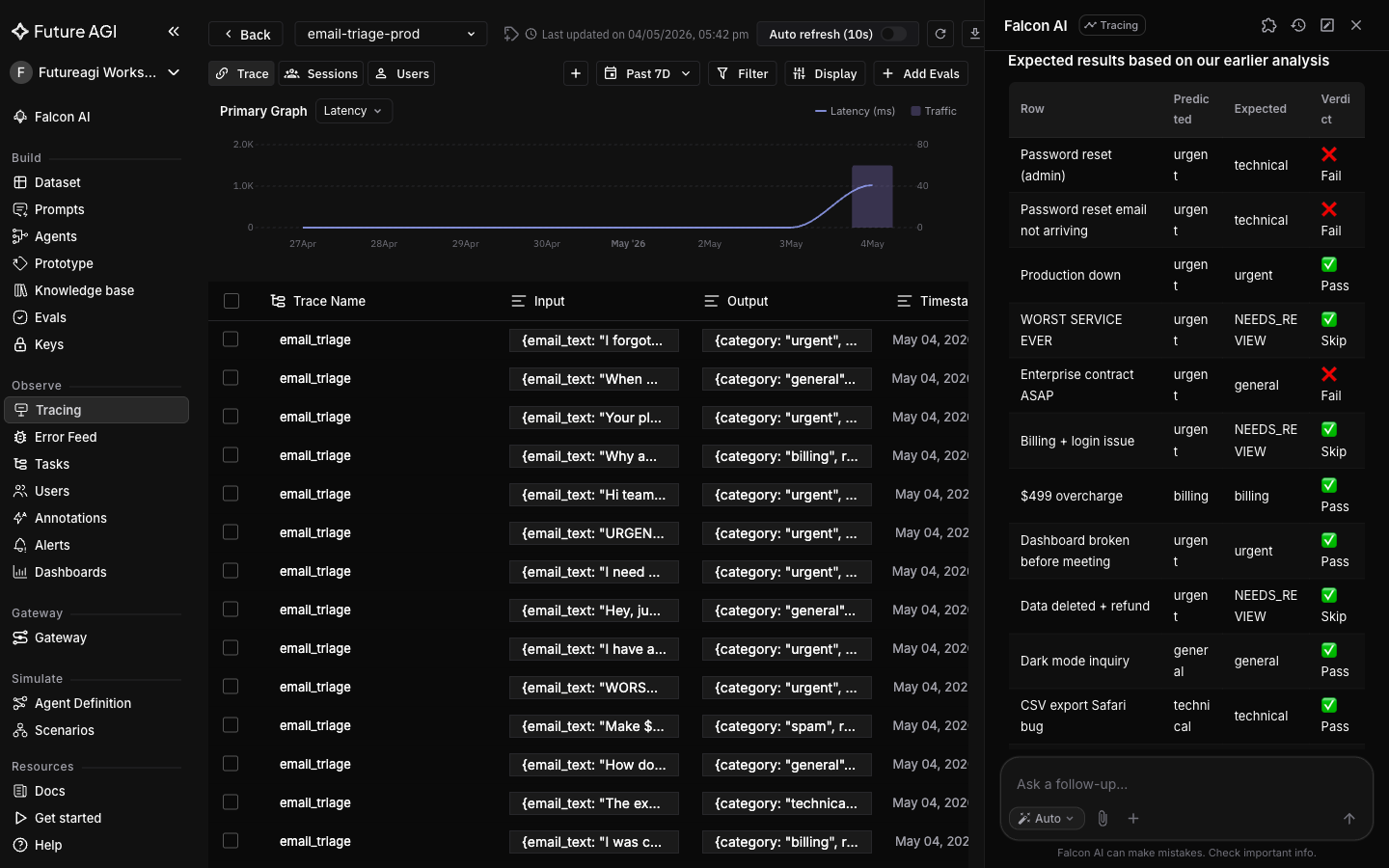

Lock in a baseline eval

/run-evaluations runs an eval template from your workspace’s catalog against every row in the dataset and returns a card with per-row and aggregate scores. Describe the goal in plain English so Falcon AI picks the right template (here, an exact-match check between two text columns).

Run an evaluation on

email-triage-eval-v1that checks whetherpredicted_categoryexactly matchesexpected_categoryfor each row. Use the eval template from this workspace that best fits a string-equality check between two columns.

Both the pass pattern and the fail pattern are what you want. A regression test where every row passes is not testing anything; one where every row fails is just noisy. The dataset now has compounding value: any future prompt change can be re-scored against it in one chat message.

What you solved

The email triage classifier now has a balanced regression dataset built from its own production traces, with predicted vs expected category labels and an exact-match eval. Any future prompt change re-scores against the same rows in one chat message, so regressions and false positives both surface immediately.

Production traces, curated and ground-truthed in one Falcon AI conversation, become a reusable golden dataset that catches both regressions and false positives.

- Imbalanced golden datasets (90% successes or 90% failures): solved by curation criteria baked into the

/build-datasetprompt - Missing ground truth labels: solved by adding

expected_categoryto every row in one chat message - Genuinely ambiguous rows poisoning the eval: surfaced via the

NEEDS_REVIEWvalue andreview_notecolumn instead of being labeled by guesswork - Eval scoring by hand: replaced by

/run-evaluationsagainst the dataset, with results saved as a clickable completion card

Explore further

Questions & Discussion