Debug LLM Traces From Your IDE Using Natural Language MCP Queries

Connect Future AGI's MCP server to Cursor, Claude Code, or VS Code, then debug failing traces, run evals, and annotate spans without leaving your editor.

Add the Future AGI MCP server to your IDE with one config line, sign in via OAuth, and ask your AI assistant questions like “what went wrong with the last failing trace in my support-bot project?” It pulls span data, runs error analysis, and proposes fixes, all in the same chat where you’re writing code.

| Time | Difficulty |
|---|---|
| 10 min | Beginner |

- A Future AGI account at app.futureagi.com

- A traced project with at least a few traces. If you don’t have one, follow Manual Tracing to instrument an agent first.

- An MCP-capable IDE: Cursor, Claude Code, VS Code (with the MCP extension), Claude Desktop, or Windsurf

Tutorial

Connect Future AGI's MCP server to your IDE

The MCP server lives at https://api.futureagi.com/mcp and uses OAuth. No API keys to copy around.

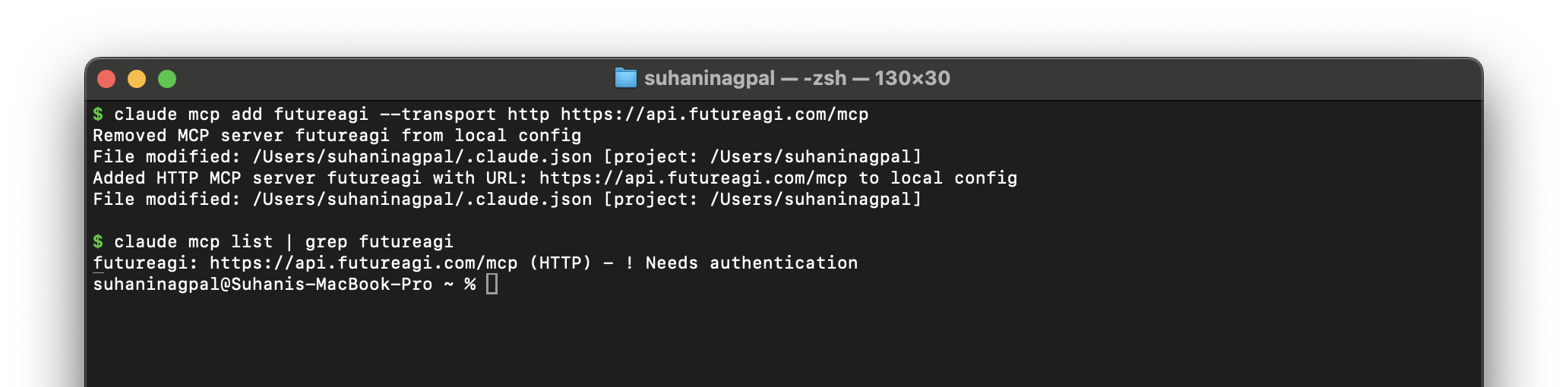

For Claude Code, run:

```bash
claude mcp add futureagi --transport http https://api.futureagi.com/mcp
```

After running, `claude mcp list` should show `futureagi` with `! Needs authentication`. That’s expected; the OAuth handshake happens in the next step.

For Cursor, add to `~/.cursor/mcp.json`:

```json
{
  "mcpServers": {
    "futureagi": {
      "url": "https://api.futureagi.com/mcp"
    }
  }
}
```

Or use the one-click install link on the setup page.
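If `~/.cursor/mcp.json` already lists other servers, replacing the file with the snippet above would drop them. A minimal Python sketch that merges the `futureagi` entry into the existing config instead, assuming the `mcpServers` shape shown above; `merge_futureagi_server` and `update_config_file` are hypothetical helpers, not Future AGI tooling:

```python
import json
import tempfile
from pathlib import Path

FUTUREAGI_URL = "https://api.futureagi.com/mcp"


def merge_futureagi_server(config: dict) -> dict:
    """Return a copy of a Cursor-style MCP config with the futureagi server added.

    Other servers are kept; an existing futureagi entry is replaced.
    """
    merged = dict(config)
    servers = dict(merged.get("mcpServers", {}))
    servers["futureagi"] = {"url": FUTUREAGI_URL}
    merged["mcpServers"] = servers
    return merged


def update_config_file(path: Path) -> None:
    """Load the JSON config at `path` (empty if missing), merge, and write it back."""
    config = json.loads(path.read_text()) if path.exists() else {}
    path.parent.mkdir(parents=True, exist_ok=True)
    path.write_text(json.dumps(merge_futureagi_server(config), indent=2) + "\n")


if __name__ == "__main__":
    # Demo on a temporary file so the real ~/.cursor/mcp.json is never touched.
    with tempfile.TemporaryDirectory() as tmp:
        demo = Path(tmp) / "mcp.json"
        demo.write_text(json.dumps({"mcpServers": {"other": {"url": "https://example.com/mcp"}}}))
        update_config_file(demo)
        print(demo.read_text())
```

To apply it for real, call `update_config_file(Path.home() / ".cursor" / "mcp.json")`; the demo itself only writes inside a temporary directory.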

For VS Code, add to `.vscode/settings.json`:

```json
{
  "mcp.servers": {
    "futureagi": {
      "type": "http",
      "url": "https://api.futureagi.com/mcp"
    }
  }
}
```

For Claude Desktop, add to `claude_desktop_config.json`:

```json
{
  "mcpServers": {
    "futureagi": {
      "url": "https://api.futureagi.com/mcp"
    }
  }
}
```

For Windsurf, add to `~/.codeium/windsurf/mcp_config.json`:

```json
{
  "mcpServers": {
    "futureagi": {
      "serverUrl": "https://api.futureagi.com/mcp"
    }
  }
}
```

Restart your IDE after editing the config.
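The four JSON configs in this step differ only in where the entry lives and which key holds the URL: Cursor and Claude Desktop use `mcpServers` with `url`, VS Code uses `mcp.servers` with an explicit `"type": "http"`, and Windsurf uses `mcpServers` with `serverUrl`. A small Python sketch that encodes those differences so you can print the right snippet per editor; `snippet_for` and the editor labels are illustrative, not an official tool:

```python
import json

URL = "https://api.futureagi.com/mcp"

# Shape of the futureagi entry for each JSON-configured editor, as listed in this tutorial.
SNIPPETS = {
    "cursor": {"mcpServers": {"futureagi": {"url": URL}}},
    "vscode": {"mcp.servers": {"futureagi": {"type": "http", "url": URL}}},
    "claude-desktop": {"mcpServers": {"futureagi": {"url": URL}}},
    "windsurf": {"mcpServers": {"futureagi": {"serverUrl": URL}}},
}


def snippet_for(editor: str) -> str:
    """Return the JSON text to paste into the given editor's MCP config."""
    try:
        return json.dumps(SNIPPETS[editor], indent=2)
    except KeyError:
        raise ValueError(f"unknown editor {editor!r}; expected one of {sorted(SNIPPETS)}") from None


if __name__ == "__main__":
    for name in SNIPPETS:
        print(f"# {name}\n{snippet_for(name)}\n")
```

Keeping the shapes in one table makes the Windsurf `serverUrl` key, the most common copy-paste mistake between editors, easy to spot.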

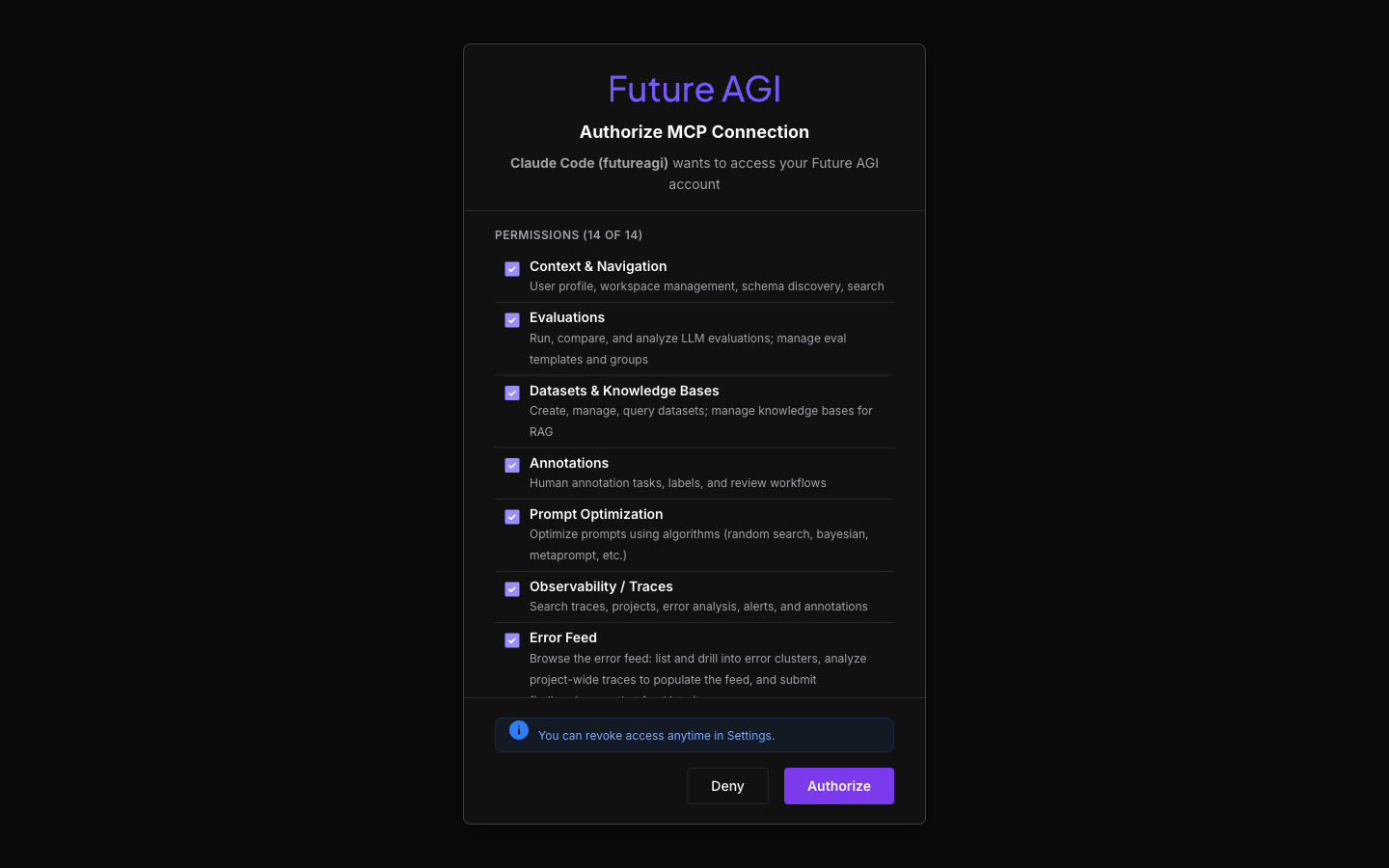

Authorize via OAuth

The first MCP tool call opens your browser to the consent screen. Review the 14 permission groups and click Authorize. The token is then cached, so later tool calls don’t prompt again.
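For context on what happens when that browser window opens: MCP’s authorization spec builds on OAuth 2.1, where public clients such as IDEs use the authorization-code flow with PKCE (RFC 7636). Your IDE does all of this for you; the Python sketch below only illustrates the PKCE step, generating a one-time `code_verifier` and its S256 `code_challenge`. It assumes the standard flow and is not a description of Future AGI’s server internals:

```python
import base64
import hashlib
import secrets


def make_code_verifier(num_bytes: int = 32) -> str:
    """Random high-entropy verifier: 32 random bytes encode to 43 base64url chars."""
    return base64.urlsafe_b64encode(secrets.token_bytes(num_bytes)).rstrip(b"=").decode("ascii")


def s256_challenge(verifier: str) -> str:
    """code_challenge = BASE64URL(SHA256(ASCII(code_verifier))), padding stripped."""
    digest = hashlib.sha256(verifier.encode("ascii")).digest()
    return base64.urlsafe_b64encode(digest).rstrip(b"=").decode("ascii")


if __name__ == "__main__":
    verifier = make_code_verifier()
    print("code_verifier: ", verifier)
    print("code_challenge:", s256_challenge(verifier))
```

The client sends the challenge with the authorization request and the verifier with the token request, so an intercepted authorization code cannot be redeemed on its own.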

Tip

If the browser doesn’t open, ask your assistant “list my Future AGI projects” to trigger the handshake. You can revoke access anytime in Settings → MCP in the dashboard.

Ask natural-language questions about your traces

Open your IDE’s chat panel and ask. The MCP server exposes ~50 trace-related tools (search, error analysis, span trees, error clusters), so you can phrase questions naturally and let your assistant pick the right tools.

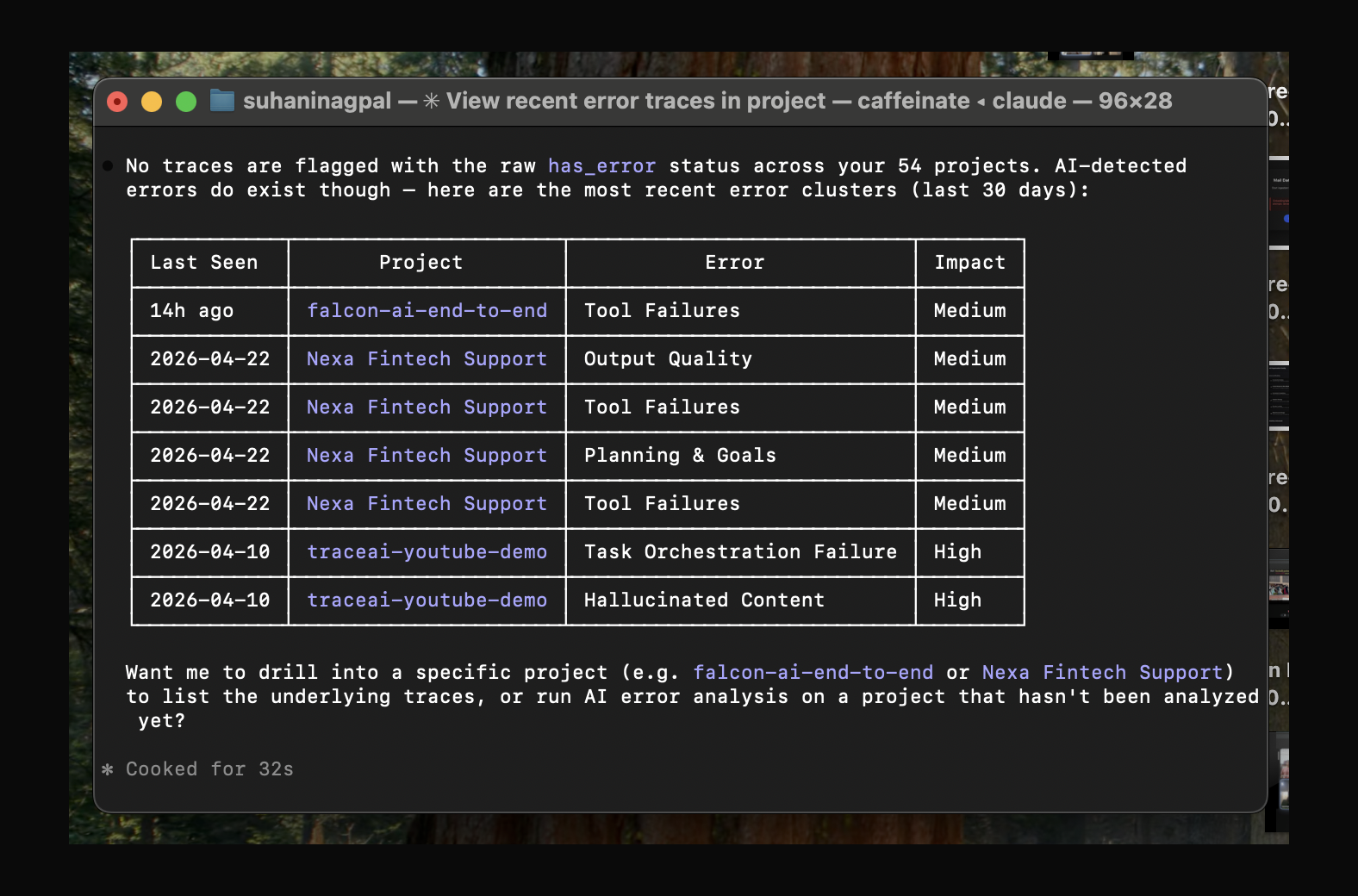

Find failing traces:

List the most recent traces in my project that have errors.

Calls search_traces with has_error=True. If no traces carry raw error flags, your assistant pivots to list_error_clusters and surfaces the AI-detected error categories across your projects, a richer signal than HTTP errors alone.
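Under the hood, each of these steps is an MCP `tools/call` request: a JSON-RPC 2.0 message carrying a tool name and an arguments object. A Python sketch of the request behind this prompt and of the fallback just described, assuming search_traces takes has_error as named above; the `project` argument and the empty-list check are hypothetical simplifications (in practice your assistant, not fixed code, decides when to pivot):

```python
import itertools
import json
from typing import Optional

_request_ids = itertools.count(1)


def tool_call(name: str, arguments: dict) -> dict:
    """Build an MCP tools/call request (JSON-RPC 2.0) with a fresh request id."""
    return {
        "jsonrpc": "2.0",
        "id": next(_request_ids),
        "method": "tools/call",
        "params": {"name": name, "arguments": arguments},
    }


def fallback_call(search_results: list) -> Optional[dict]:
    """If search_traces found no error-flagged traces, ask for error clusters instead."""
    if not search_results:
        return tool_call("list_error_clusters", {})
    return None


if __name__ == "__main__":
    # "project" is a hypothetical argument name; has_error comes from the step above.
    request = tool_call("search_traces", {"project": "support-bot", "has_error": True})
    print(json.dumps(request, indent=2))  # Python True is serialized as JSON true
    print(json.dumps(fallback_call([]), indent=2))
```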

Inspect a specific trace:

Show me the span tree for the second trace from the previous list.

Calls get_span_tree. Returns the parent span plus nested LLM/tool calls with timing and inputs.
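A span tree is a set of spans linked by parent IDs. A minimal Python sketch that rebuilds and prints one from a flat list, assuming hypothetical `span_id`, `parent_id`, `name`, and `duration_ms` fields; the real get_span_tree response format may differ:

```python
from collections import defaultdict
from typing import Optional


def render_span_tree(spans: list) -> list:
    """Return indented lines, one per span, with children listed under their parent."""
    children = defaultdict(list)  # parent_id -> child spans, in input order
    for span in spans:
        children[span.get("parent_id")].append(span)

    lines = []

    def walk(parent_id: Optional[str], depth: int) -> None:
        for span in children.get(parent_id, []):
            lines.append(f"{'  ' * depth}{span['name']} ({span['duration_ms']} ms)")
            walk(span["span_id"], depth + 1)

    walk(None, 0)  # root spans have no parent
    return lines


if __name__ == "__main__":
    # Hypothetical trace: one agent run with an LLM call and a tool call.
    demo = [
        {"span_id": "a", "parent_id": None, "name": "agent.run", "duration_ms": 1840},
        {"span_id": "b", "parent_id": "a", "name": "llm.chat", "duration_ms": 920},
        {"span_id": "c", "parent_id": "a", "name": "tool.lookup_policy", "duration_ms": 610},
    ]
    print("\n".join(render_span_tree(demo)))
```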

Diagnose what went wrong:

Run error analysis on that trace.

Calls get_trace_error_analysis. Returns categorized findings (hallucination, wrong intent, tool misuse) with severity and a quality scorecard.

Look across the project:

Analyze all traces in my project from the last hour and group failures by category.

Calls analyze_project_traces and list_error_clusters. Returns a histogram with the dominant error types.
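Conceptually, grouping failures by category is a count over each failure’s label. A Python sketch of that histogram step, assuming each failure record carries a hypothetical `category` field; the demo categories are illustrative, borrowed from the error-analysis examples in this tutorial:

```python
from collections import Counter


def category_histogram(failures: list) -> list:
    """Count failures per category, most common first."""
    return Counter(f["category"] for f in failures).most_common()


def print_histogram(rows: list, width: int = 30) -> None:
    """Print a text bar chart scaled so the largest category spans `width` chars."""
    if not rows:
        print("no failures")
        return
    top = rows[0][1]
    for category, count in rows:
        bar = "#" * max(1, round(width * count / top))
        print(f"{category:<16} {bar} {count}")


if __name__ == "__main__":
    demo = [{"category": c} for c in ["hallucination"] * 5 + ["tool misuse"] * 2 + ["wrong intent"]]
    print_histogram(category_histogram(demo))
```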

Score or annotate from chat:

Add the tag needs-policy-grounding to the failing traces, and annotate them with “fabricated specifics, needs RAG over policy docs.”

Calls add_trace_tags and create_trace_annotation for each matching trace. The annotations show up in the dashboard immediately.
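Because both tools run once per trace, one chat request fans out into two calls per matching trace. A Python sketch of that fan-out as MCP `tools/call` requests; the argument names `trace_id`, `tags`, and `text` are hypothetical stand-ins, not the documented schemas of add_trace_tags or create_trace_annotation:

```python
def tag_and_annotate(trace_ids: list, tag: str, note: str) -> list:
    """Build one add_trace_tags and one create_trace_annotation request per trace."""
    requests = []
    for trace_id in trace_ids:
        for name, arguments in (
            ("add_trace_tags", {"trace_id": trace_id, "tags": [tag]}),
            ("create_trace_annotation", {"trace_id": trace_id, "text": note}),
        ):
            requests.append({
                "jsonrpc": "2.0",
                "id": len(requests) + 1,  # sequential JSON-RPC ids
                "method": "tools/call",
                "params": {"name": name, "arguments": arguments},
            })
    return requests


if __name__ == "__main__":
    batch = tag_and_annotate(
        ["trace-1", "trace-2"],  # hypothetical trace IDs
        "needs-policy-grounding",
        "fabricated specifics, needs RAG over policy docs.",
    )
    for req in batch:
        print(req["id"], req["params"]["name"], req["params"]["arguments"]["trace_id"])
```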

Iterate without leaving the IDE

The same chat that read the trace can now read your code. Ask:

Based on the error analysis, draft a system-prompt patch that refuses to answer policy questions when no grounding tool is available. Show it as a diff against agent.py.

Your assistant has both the trace findings (from MCP) and the file (from your editor). It produces a paste-ready diff. Apply it, re-run a few queries through the agent, and ask the next turn:

Re-check the latest traces in my project and confirm the fabrication category dropped.
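Raw counts can fall simply because fewer queries ran, so it is safer to compare the category’s rate per trace before and after the fix. A Python sketch, assuming you have per-category failure counts and total trace counts for both windows; `category_rate`, `dropped_by`, the 50% threshold, and the demo numbers are all illustrative:

```python
def category_rate(counts: dict, total_traces: int, category: str) -> float:
    """Fraction of traces in a window that fell into `category`."""
    if total_traces <= 0:
        raise ValueError("total_traces must be positive")
    return counts.get(category, 0) / total_traces


def dropped_by(before: float, after: float, min_relative_drop: float = 0.5) -> bool:
    """True if the rate fell by at least `min_relative_drop` (0.5 means at least halved)."""
    if before == 0:
        return False  # nothing to drop from
    return (before - after) / before >= min_relative_drop


if __name__ == "__main__":
    # Made-up counts for illustration: 40 traces before the fix, 25 after.
    before = category_rate({"fabrication": 12, "tool misuse": 3}, 40, "fabrication")
    after = category_rate({"tool misuse": 2}, 25, "fabrication")
    print(f"fabrication rate: {before:.0%} -> {after:.0%}, dropped: {dropped_by(before, after)}")
```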

That’s the full loop: failure detection, diagnosis, fix, and verification, all driven from one IDE chat thread.

You connected Future AGI’s MCP server to your IDE, asked natural-language questions about your trace data, and ran an end-to-end debug loop without copying trace IDs or switching to the dashboard.
